Optimising human-robot collaborative teleoperation using adaptive fuzzy logic control and real-time motion intention estimation

Abstract

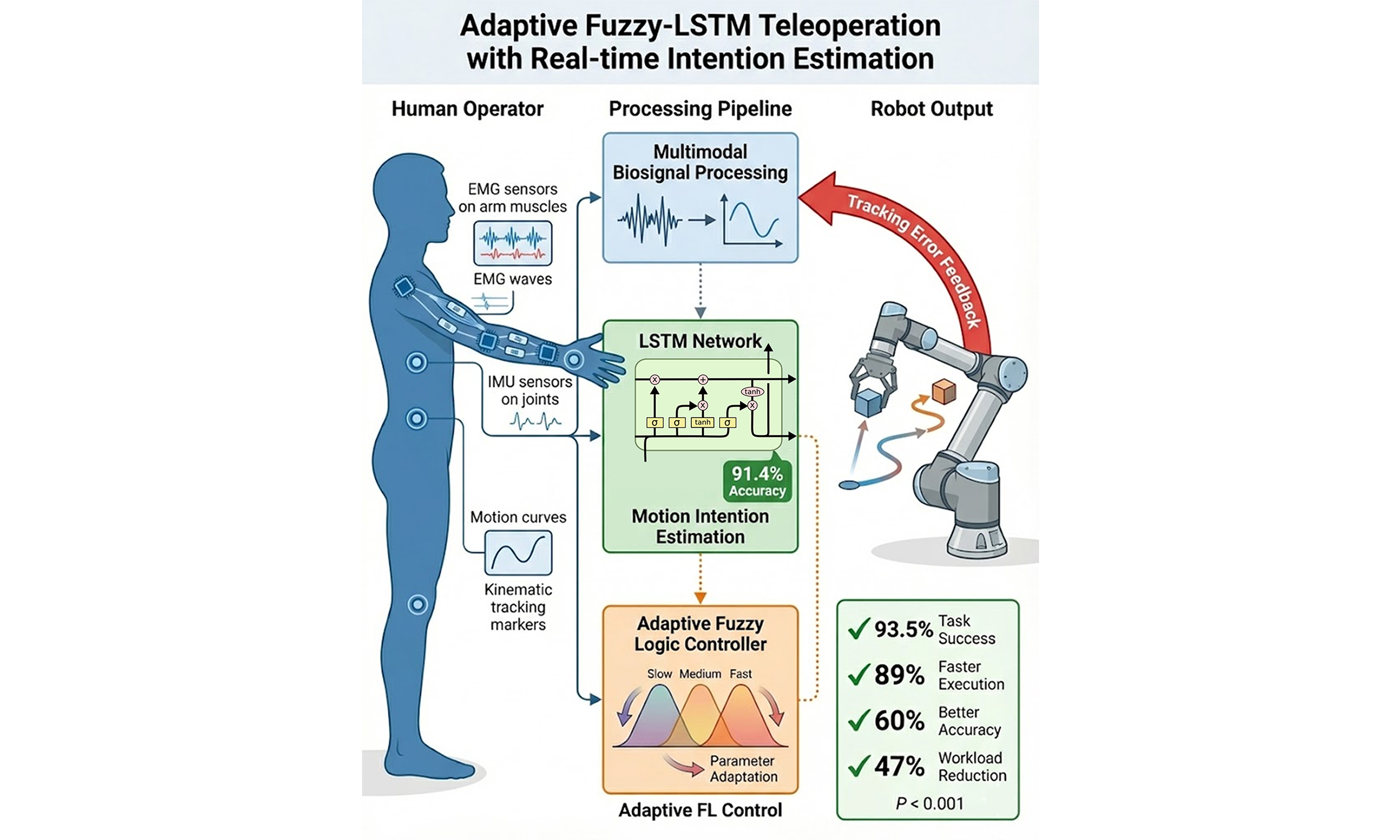

Human-robot collaborative (HRC) teleoperation requires seamless integration of intention understanding and adaptive control to achieve natural, efficient, and reliable remote manipulation. Existing tele-operation models (TOMs) suffer from limited intention-prediction capabilities, static control parameters, and inadequate adaptation to dynamic operational conditions, resulting in reduced task performance and increased cognitive burden for the operator. This work proposes a TOM that combines real-time motion intention estimation using long short-term memory (LSTM) networks with adaptive fuzzy logic control to enhance human-robot collaboration. The proposed TOM leverages multimodal bio-signals, including electromyography, inertial measurement units, and joint kinematics, to decode operator intentions via temporal feature extraction and sequential classification. The LSTM-based classifier processes normalised feature vectors to predict discrete motion intentions with 91.4% accuracy across varying task complexities. Experimental validation using a 6-degree-of-freedom collaborative manipulator and 12 human participants demonstrates significant performance improvements over traditional TOMs. The integrated system achieved a 93.5% task-completion success rate, 89% faster execution times, a 60% improvement in placement accuracy, and a 47% reduction in operator mental workload across low-, moderate-, and high-complexity manipulation tasks. Statistical analysis confirms highly significant improvements (P < 0.001) with large effect sizes across all performance metrics. The proposed model addresses fundamental limitations in HRC teleoperation by providing temporally aware intention recognition and context-sensitive adaptive control, enabling more natural and efficient collaborative manipulation in remote and hazardous environments.

Keywords

1. INTRODUCTION

The development of robotic systems has transformed how human skills are applied in environments where direct human presence is hazardous, impractical, or impossible. Teleoperation enables skilled operators to perform complex manipulation tasks remotely, extending human dexterity and cognitive abilities to applications ranging from surgical procedures and space exploration to nuclear facility maintenance and deep-sea operations[1]. However, the use of tele-operation models (TOMs) remains constrained by the critical challenge of establishing intuitive, responsive, and stable communication channels between human operators and robots operating under uncertain conditions with inherent time delays[2].

Contemporary TOMs typically rely on direct input devices such as joysticks, haptic interfaces, or motion intention estimation (MIE) that translate operator commands into robot actions via predetermined mapping functions[3]. These methods suffer from several critical limitations that impede natural human-robot collaboration (HRC). First, conventional models lack the predictive capability to anticipate operator intentions, resulting in reactive rather than proactive control behaviour that introduces noticeable delays between the formation of an intention and the robot's response[4]. Second, static control parameters fail to adapt to variable task requirements, environmental conditions, and individual operator characteristics, leading to suboptimal performance across diverse operational scenarios[5]. Third, the absence of sophisticated mechanisms for understanding intention forces operators to issue overly explicit commands, increasing cognitive workload and reducing task execution efficiency[6].

The complexity of human motor control and intention formation poses significant challenges for accurately interpreting these processes in real time. Human movement generation involves hierarchical planning processes that begin with the formation of high-level goals, proceed through trajectory planning, and culminate in the execution of detailed motor commands[7]. Traditional TOMs primarily capture the final motor output while ignoring the rich temporal dynamics and physiological correlates that encode operator intentions at earlier stages of the motor planning hierarchy[8]. This limitation results in delayed recognition of intentions, increased operator effort, and reduced system responsiveness, particularly during dynamic tasks that require rapid intention transitions or precise manipulation.

Recent advances in machine learning (ML), particularly in sequential data processing and adaptive control theory, provide promising foundations for addressing these fundamental limitations. Long short-term memory (LSTM) neural networks (NNs) have demonstrated exceptional capabilities for modelling temporal dependencies in sequential data, making them suitable for decoding the temporal patterns inherent in human bio-signals and movement trajectories[9]. At the same time, adaptive fuzzy logic (FL) control systems provide mathematically rigorous frameworks for handling uncertainty, nonlinearity, and time-varying parameters, while maintaining the interpretability and stability guarantees essential for safety-critical applications[10].

Although LSTM and FL control are individually well known in teleoperation, their real-time integration in a TOM introduces challenges not fully addressed in existing work. In particular, prior methods do not properly handle (a) temporal coherence issues, where inference delay creates noticeable latency between operator intent and robot response; (b) stability guarantees when control parameters adapt under time-varying and imperfect perception; and (c) bidirectional coupling between perception and control, as most systems still treat them as sequential blocks. Solving these problems requires more than a direct combination of existing models.

Integrating intention prediction with adaptive control has strong potential to improve teleoperation by enabling systems that can predict and adapt to human behaviour in real time. This can reduce operator workload, improve task execution, and support smoother collaboration. However, it also raises challenges related to real-time processing, multimodal signal fusion, temporal alignment, and stability under strict latency constraints. This work addresses these gaps by developing an intelligent teleoperation system (ITS) that combines real-time MIE with adaptive FL control. Multimodal bio-signals, including electromyography (EMG), inertial measurement units (IMUs), and joint kinematics, are used to capture temporal patterns of human motion. An LSTM predicts operator intent with low latency, while an adaptive FL controller converts these predictions into smooth and stable robot motion by updating control parameters online based on performance feedback.

The main contributions of this work are:

(1) A bidirectional adaptation model enabling closed-loop feedback between LSTM intention estimation and FL control, where tracking errors dynamically modulate classification confidence and temporal smoothing rather than operating sequentially;

(2) A temporal alignment model that predictively compensates inference-execution delays through trajectory extrapolation, reducing intent-to-action lag by 55% while maintaining hard real-time constraints;

(3) Integrated Lyapunov-based stability guarantees for the complete model under time-varying classifications and online adaptation, extending beyond conventional methods that assume perfect perception inputs;

(4) Multimodal bio-signal processing achieving 91.4% intention accuracy with < 10 ms latency;

(5) Experimental validation with 12 participants demonstrating 93.5% task success, 89% faster execution, 60% improved accuracy, and 47% workload reduction (P < 0.001, large effect sizes).

HRC teleoperation has shifted from basic mechanistic control toward perception-based systems that can interpret operator intent and adjust robot behaviour during operation. However, most current systems still rely on joysticks, data gloves, or motion capture interfaces combined with fixed-gain controllers such as proportional-integral-derivative (PID), impedance, or kinematic mapping. Although wearable sensing methods such as EMG and IMU, along with learning-based intention models, have been studied, they are usually implemented as separate modules without closed-loop integration. As a result, existing teleoperation systems are mostly reactive, sensitive to latency, and show limited adaptability to operator variability and task uncertainty. Many intention recognition methods also depend on single-frame classification or shallow models, which fail to capture temporal motor patterns and reduce reliability in complex manipulation.

FL controllers are known to handle nonlinearity and uncertainty, but most designs use fixed parameters and lack continuous online tuning. In addition, many teleoperation models do not provide theoretical stability guarantees when control decisions depend on time-varying and probabilistic intention outputs. This mismatch between perception and control remains a major challenge for natural HRC.

This work addresses these problems using a unified real-time teleoperation model that integrates temporally coherent MIE with adaptive fuzzy control in a closed loop. An LSTM captures sequential dependencies in neuromuscular and kinematic signals, enabling proactive intention interpretation. Adaptive fuzzy control then converts predicted intentions into smooth robot motion while adjusting control parameters to handle task dynamics, user differences, and communication delays. The model ensures stability using Lyapunov-based constraints, improving robustness and safety in HRC teleoperation.

The remainder of this work is organized as follows: Section 2 reviews related work in HRC, MIE, and adaptive control systems; Section 3 presents the theoretical methodology including TOM, intention estimation algorithms, and adaptive FL control formulation; Section 4 describes the experimental design, platform configuration, and evaluation protocols; Section 5 presents complete results and statistical analysis; Section 6 concludes with a summary of contributions and their significance for advancing HRC.

2. LITERATURE REVIEW

Collaborative TOMs build on the characterisation of HRC within structured and semi-structured environments. Duorinaah et al. present a comprehensive review of multi-robot collaboration TOMs for indoor tasks, with a particular emphasis on decision-making under spatial constraints and communication latency[11]. Their work outlines the potential applications and methodological gaps in integrating human guidance into autonomous fleets, providing the structural motivation for operator-in-the-loop networks.

Sensor-driven TOMs have benefited significantly from developments in wearable interfaces that can encode human motion intent. Jia et al. proposed a large-workspace TOM that uses IMU and EMG data to infer arm kinematics and interaction forces simultaneously[12]. This dual-sensing configuration increases the dimensionality of operator expression and supports seamless path transfer. Building on this sensor fusion approach, Zou et al. introduced a fuzzy-rule-based model that uses wearable data gloves to predict handover intent[13]. Their TOM achieves robust intention classification even under partial occlusion and sensor noise, confirming the feasibility of rule-based reasoning in real-time scenarios.

FL has shown marked efficacy in managing nonlinear, uncertain control problems in TOMs. Lu et al. implemented a TOM using data gloves and an FL controller to map human hand gestures into robotic joint paths[14]. Their design provided intuitive, responsive control without relying on precise physical models. Extending this paradigm, Ren et al. integrated adaptive FL control with human motion prediction for collaborative manipulators with flexible joints[15]. Their formulation guarantees convergence under trajectory constraints, providing a strong theoretical basis for adaptive fuzzy integration in physical HRC.

Hybrid control networks have increasingly incorporated learning-based adaptation to enhance responsiveness to operator variability. Liu et al. proposed a fuzzy-variable impedance adaptive NN controller that modulates compliance based on interaction force and error gradients[16]. Their control law dynamically adjusts stiffness in response to user state transitions, ensuring safe and accurate trajectory tracking. Li and Ge previously verified a model in which MIE was computed using Gaussian functions and integrated with an adaptive impedance controller, enabling the robot to infer and follow human intent in real time[17].

Effective TOM interfaces require not only accurate intention decoding but also intelligent coordination between manual and autonomous control modes. Kizilkaya et al. introduced a deep reinforcement learning (DRL)-based mode-switching model that evaluates user state and communication load to decide when to switch between autonomy and teleoperation[18]. Torielli et al. developed a marionette-inspired interface enhanced with wearable haptics to improve sensorimotor grounding in highly redundant robotic tasks[19]. These developments underscore the importance of modality blending and immersive feedback in optimising task performance and user engagement[20-25].

3. PROPOSED TOM FRAMEWORK

3.1. System architecture

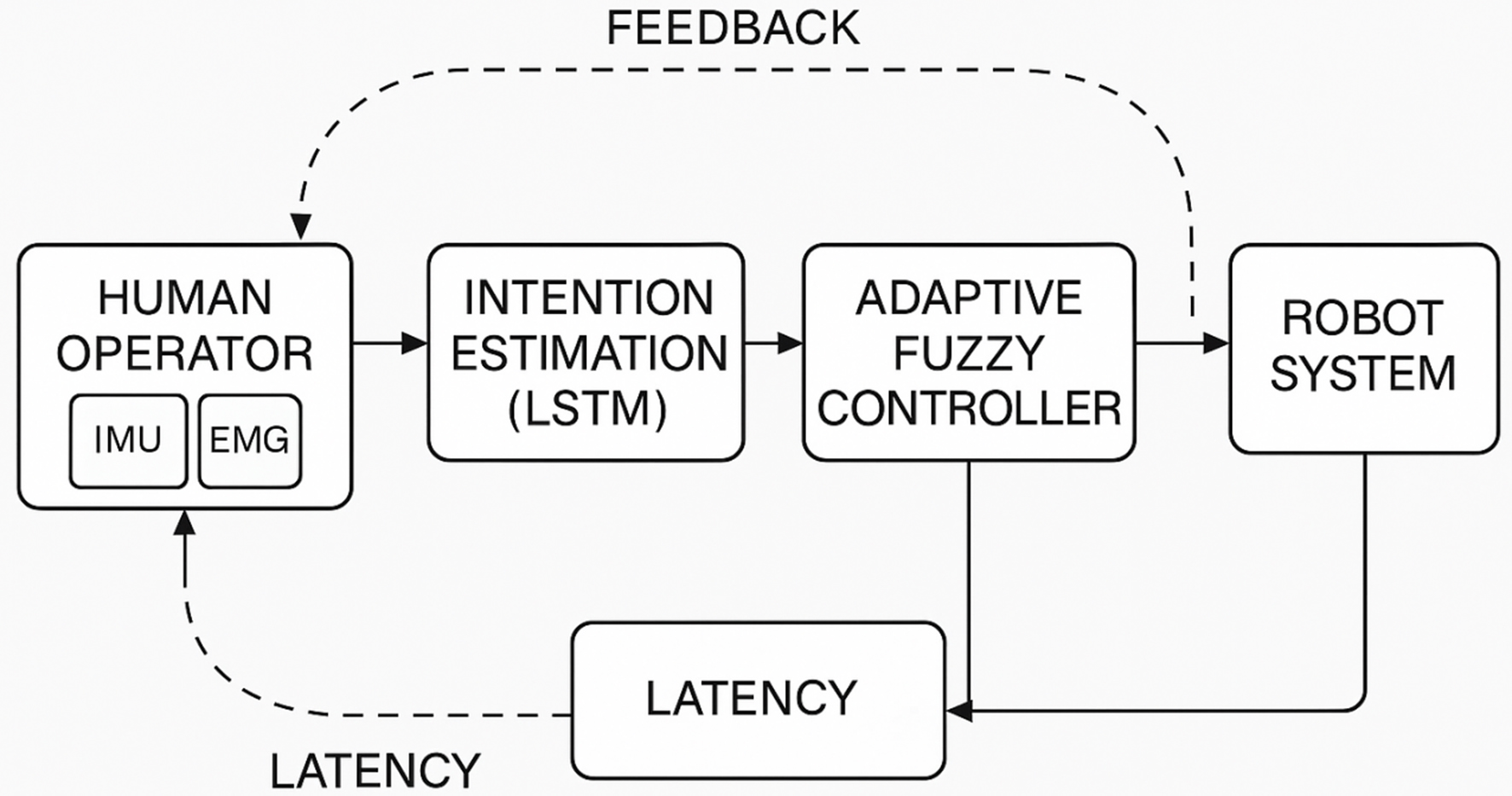

The proposed TOM serves as the base layer, orchestrating interactions among human inputs, decision modules, control synthesis, and robotic actuation [Figure 1]. A precise architectural layout is vital for guaranteeing real-time communication, consistent intention decoding, and stable control signal propagation[26-31]. This subsection establishes the mathematical and structural design of the TOM’s functional units and their interdependencies within a closed-loop operational context[32-36].

Figure 1. Proposed TOM. TOM: Tele-operation model; IMU: inertial measurement unit; EMG: electromyography; LSTM: long short-term memory.

The collaborative TOM is formulated as a functional mapping from the human intention space to the robot action space, defined as

where

• H(t) ∈ ℝ^n → The human signal vector acquired at time t,

• R(t) ∈ ℝ^m → The robot state vector in task or joint space.

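The vector H(t) is formed by concatenating the human-derived signal streams:

$$H(t) = \left[\, q_h(t)^{\top},\ v_h(t)^{\top},\ \mu_h(t)^{\top} \right]^{\top}, \qquad n = 2d + p$$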

where

• q_h(t) ∈ ℝ^d → Joint positions of the human operator at time t,

• v_h(t) ∈ ℝ^d → Joint velocities of the operator,

• μ_h(t) ∈ ℝ^p → Auxiliary physiological signals such as EMG or inertial vectors.

The adaptive fuzzy mapping defines the control signal.

where

• f_r(·) → Continuous-time nonlinear dynamic function of the robotic model,

• w_r(t) ∈ ℝ^k → External disturbances and model uncertainties.

Let the total round-trip communication latency be

$$\tau_{\mathrm{rt}} = \tau_{\mathrm{fwd}} + \tau_{\mathrm{bwd}}$$

where

• τ_fwd → Forward delay incurred during the transmission of command-and-control data,

• τ_bwd → Backward delay associated with robot feedback signal propagation.

3.2. Real-time MIE

3.2.1. Signal collection

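The acquired signal vector at time t is denoted as

$$s_h(t) = \left[\, q_h(t)^{\top},\ v_h(t)^{\top},\ \mu_h(t)^{\top} \right]^{\top} \in \mathbb{R}^{2d+p}$$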

where

• q_h(t) ∈ ℝ^d → Human joint angles obtained from inertial motion capture sensors,

• v_h(t) ∈ ℝ^d → Joint angular velocities computed via numerical differentiation,

• μ_h(t) ∈ ℝ^p → Auxiliary physiological signals (EMG, accelerometry).

Raw sensor signals are processed to remove baseline drift and high-frequency noise. Let s_h^raw(t) denote the unfiltered signal stream.

A preprocessing pipeline P is applied to each channel independently:

$$s_h(t) = \mathcal{P}\big(s_h^{\mathrm{raw}}(t)\big)$$

where

• P(·) → A composite operation consisting of bandpass filtering, signal normalisation, and adaptive baseline correction.

For EMG signals, the filtering stage uses a fourth-order Butterworth bandpass filter [Butterworth 1930]:

$$\mu_h^{\mathrm{EMG}}(t) = \mathcal{B}_{20,450}\big(\mu_h^{\mathrm{EMG,raw}}(t)\big)$$

where

• B_{20,450}(·) → Bandpass filtering in the range [20 Hz, 450 Hz], selected to isolate the dominant myoelectric frequency band.

After filtering, signals are rectified and smoothed using root-mean-square (RMS) envelopes with a moving window of size Δt = 100 ms.

IMU-derived kinematic signals qh(t) and vh(t) are smoothed using a second-order low-pass filter with a cutoff frequency fc = 5 Hz to suppress motion jitter.

Numerical differentiation of q_h(t) to compute v_h(t) is implemented using a backward difference operator:

$$v_h(t) \approx \frac{q_h(t) - q_h(t - \Delta t)}{\Delta t}$$

where

• Δt = 1/fs is the sampling interval.
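The smoothing, differentiation and envelope steps above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation. The second-order low-pass is realised here as a causal Butterworth biquad (bilinear transform, Q = 1/√2), the first velocity sample is set to zero, and the helper names `lowpass_biquad`, `backward_difference` and `rms_envelope` are hypothetical. A 20-450 Hz EMG band exceeds the 100 Hz Nyquist limit of a 200 Hz stream, so the sketch assumes the EMG envelope is computed at the raw 1 kHz acquisition rate before downsampling.

```python
import math


def lowpass_biquad(x, fc, fs):
    """Causal second-order Butterworth low-pass (bilinear transform, Q = 1/sqrt(2))."""
    w0 = 2.0 * math.pi * fc / fs
    alpha = math.sin(w0) / math.sqrt(2.0)      # sin(w0) / (2Q) with Q = 1/sqrt(2)
    cos_w0 = math.cos(w0)
    a0 = 1.0 + alpha
    # Normalised feed-forward (b) and feedback (a) coefficients
    b0 = b2 = (1.0 - cos_w0) / (2.0 * a0)
    b1 = (1.0 - cos_w0) / a0
    a1 = -2.0 * cos_w0 / a0
    a2 = (1.0 - alpha) / a0
    y = []
    x1 = x2 = y1 = y2 = 0.0                    # filter state: previous inputs/outputs
    for xn in x:
        yn = b0 * xn + b1 * x1 + b2 * x2 - a1 * y1 - a2 * y2
        y.append(yn)
        x2, x1 = x1, xn
        y2, y1 = y1, yn
    return y


def backward_difference(q, fs):
    """v(t) ~ (q(t) - q(t - dt)) / dt with dt = 1/fs; the first sample is set to 0."""
    dt = 1.0 / fs
    return [0.0] + [(q[n] - q[n - 1]) / dt for n in range(1, len(q))]


def rms_envelope(x, fs, window_s=0.1):
    """Trailing-window RMS envelope (100 ms by default).

    Squaring makes explicit rectification unnecessary, since |x|^2 == x^2."""
    n_win = max(1, int(round(window_s * fs)))
    sq = [v * v for v in x]
    out, acc = [], 0.0
    for n in range(len(sq)):
        acc += sq[n]
        if n >= n_win:
            acc -= sq[n - n_win]               # drop the sample leaving the window
        out.append(math.sqrt(max(acc, 0.0) / min(n + 1, n_win)))
    return out
```

For example, `lowpass_biquad(q, fc=5.0, fs=200.0)` would smooth a joint-angle trace before `backward_difference(q_smooth, fs=200.0)` estimates velocity. Differentiating the smoothed signal rather than the raw one limits noise amplification.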

3.2.2. Feature extraction

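Let s_h(t) ∈ ℝ^{2d+p} denote the temporally aligned and filtered signal vector obtained from Equation (7). A nonlinear feature mapping F is defined such that

$$z_h(t) = \mathcal{F}\big(s_h(t)\big), \qquad \mathcal{F}: \mathbb{R}^{2d+p} \rightarrow \mathbb{R}^{\ell}$$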

where

• z_h(t) → The feature vector at time t, where ℓ ≪ 2d+p is the reduced dimensionality of the feature space. The mapping F consists of deterministic signal transformations and statistically motivated descriptors.

The feature space zh(t) includes the following signal-derived components:

1. Time-Domain Features: Let xi(t) be the signal sample of the i-th channel. Over a moving window [t-T, t], the following temporal statistics are extracted:

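• Mean amplitude:

$$\mu_i(t) = \frac{1}{T}\int_{t-T}^{t} x_i(\tau)\,d\tau$$

where μ_i(t) captures baseline activity.

• Variance:

$$\sigma_i^2(t) = \frac{1}{T}\int_{t-T}^{t} \big(x_i(\tau) - \mu_i(t)\big)^2\,d\tau$$

measuring signal fluctuation.

• RMS:

$$\mathrm{RMS}_i(t) = \sqrt{\frac{1}{T}\int_{t-T}^{t} x_i^2(\tau)\,d\tau}$$

emphasising energy content in muscle or kinematic channels.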

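2. Frequency-Domain Features: Let X_i(f, t) denote the Fourier spectrum of x_i over the window [t-T, t], and define the band power

$$P_i^{[f_1, f_2]}(t) = \int_{f_1}^{f_2} \lvert X_i(f, t) \rvert^2\,df$$

which isolates dominant frequencies associated with specific motor actions. For EMG channels, intervals such as 20-150 Hz and 150-300 Hz correspond to low- and high-frequency muscle-firing regimes.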

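3. Slope Sign Changes and Zero Crossings: Let δx_i(t) = x_i(t) - x_i(t - Δt). Define:

• Zero-crossing count over window T:

$$\mathrm{ZC}_i(t) = \sum_{\tau \in (t-T,\, t]} \mathbb{I}\big[x_i(\tau)\, x_i(\tau - \Delta t) < 0\big]$$

where I[·] is the indicator function.

• Slope sign change count:

$$\mathrm{SSC}_i(t) = \sum_{\tau \in (t-T,\, t]} \mathbb{I}\big[\delta x_i(\tau)\, \delta x_i(\tau - \Delta t) \le -\theta\big]$$

where θ is a predefined threshold to suppress noise-induced changes.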

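4. Feature Concatenation and Normalisation: The complete feature vector z_h(t) is constructed by concatenating the descriptors from all i = 1, …, 2d+p channels:

$$z_h(t) = \left[\, \phi_1(t)^{\top}, \ldots, \phi_{2d+p}(t)^{\top} \right]^{\top}$$

where φ_i(t) stacks the time-domain, frequency-domain, zero-crossing, and slope-sign-change descriptors of channel i. The vector is then scaled via element-wise z-score normalisation to ensure cross-modal comparability:

$$\tilde{z}_h(t) = \frac{z_h(t) - \mu_z}{\sigma_z}$$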

where

• μz, σz → The global mean and standard deviation of each feature dimension, estimated from a pre-collected training set.
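The window-level descriptors above can be sketched in plain Python. This is an illustrative sketch, not the authors' code, and it rests on several assumptions:

- The function names are hypothetical.
- Each window is a list of samples.
- Band power uses a naive one-sided DFT, which is adequate only for short windows.
- The slope-sign-change test applies θ to the product of consecutive differences, which is one common convention.

Band edges must lie below fs/2, so the 20-300 Hz EMG bands assume the raw 1 kHz stream.

```python
import math


def time_domain_features(x):
    """Mean, variance and RMS of one channel over the window [t - T, t]."""
    n = len(x)
    mean = sum(x) / n
    var = sum((v - mean) ** 2 for v in x) / n
    rms = math.sqrt(sum(v * v for v in x) / n)
    return [mean, var, rms]


def zero_crossings(x):
    """Count sign changes between consecutive samples."""
    return sum(1 for a, b in zip(x[:-1], x[1:]) if a * b < 0)


def slope_sign_changes(x, theta):
    """Count slope reversals whose difference product is at most -theta."""
    d = [b - a for a, b in zip(x[:-1], x[1:])]            # delta x_i
    return sum(1 for d0, d1 in zip(d[:-1], d[1:]) if d0 * d1 <= -theta)


def band_power(x, fs, f_lo, f_hi):
    """One-sided power in [f_lo, f_hi] Hz from a naive DFT (requires f_hi <= fs/2)."""
    n = len(x)
    power = 0.0
    for k in range(n // 2 + 1):
        if not f_lo <= k * fs / n <= f_hi:
            continue
        re = sum(x[m] * math.cos(2 * math.pi * k * m / n) for m in range(n))
        im = sum(x[m] * math.sin(2 * math.pi * k * m / n) for m in range(n))
        # Bins strictly between DC and Nyquist also account for their mirror bin
        scale = 1.0 if k == 0 or 2 * k == n else 2.0
        power += scale * (re * re + im * im) / (n * n)
    return power


def channel_features(x, fs, theta, bands=()):
    """Descriptor list for one channel; `bands` is a sequence of (f_lo, f_hi) pairs."""
    feats = time_domain_features(x)
    feats += [zero_crossings(x), slope_sign_changes(x, theta)]
    feats += [band_power(x, fs, lo, hi) for lo, hi in bands]
    return feats


def concat_features(windows, fs, theta, bands_per_channel):
    """Concatenate per-channel descriptors into one feature vector z_h(t)."""
    z = []
    for x, bands in zip(windows, bands_per_channel):
        z.extend(channel_features(x, fs, theta, bands))
    return z
```

As a sanity check, a 50 Hz unit-amplitude sine sampled at 1 kHz yields a band power of about 0.5 (its mean power) in the 20-150 Hz band and almost none in 150-300 Hz.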

3.2.3. Intention classification algorithm

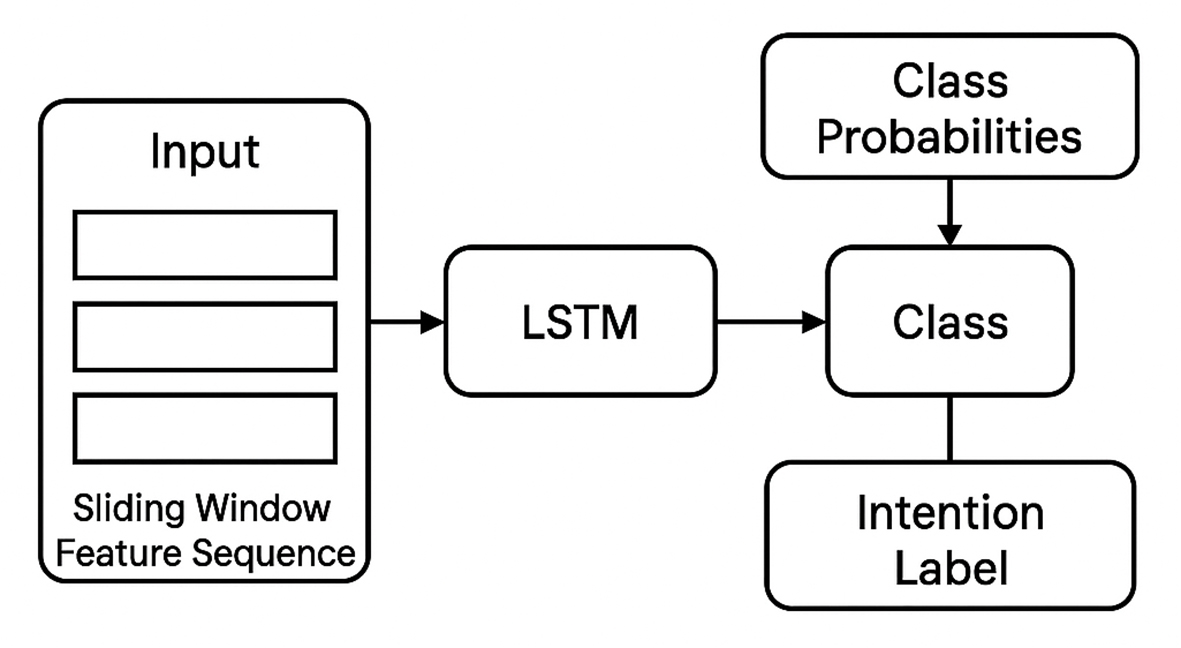

The classification of human motion intention from bio-signals must consider temporal context, given the sequential correlations in human-generated signals. Therefore, an LSTM, a recurrent neural network (RNN) architecture, is used to capture temporal dependencies, handle subject variability, and deliver low-latency predictions suitable for real-time applications [Figure 2].

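Let the LSTM hidden state be updated from the normalised feature vector z_t as

$$h_t = \mathcal{L}\big(z_t,\ h_{t-1};\ \Theta\big)$$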

where

• L(·) → LSTM transition function defined by input, forget, and output gating operations,

• ht-1 → Hidden state from the previous time step,

• Θ → Set of learnable parameters including weights and biases for all gates.

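The classifier operates over a sequence of such vectors {z_{t-T+1}, …, z_t} of fixed length T.

The recurrence follows the standard LSTM state evolution:

$$\begin{aligned} i_t &= \sigma(W_i z_t + U_i h_{t-1} + b_i) \\ f_t &= \sigma(W_f z_t + U_f h_{t-1} + b_f) \\ o_t &= \sigma(W_o z_t + U_o h_{t-1} + b_o) \\ g_t &= \tanh(W_g z_t + U_g h_{t-1} + b_g) \\ c_t &= f_t \odot c_{t-1} + i_t \odot g_t \\ h_t &= o_t \odot \tanh(c_t) \end{aligned}$$

where σ(·) is the logistic sigmoid, ⊙ denotes element-wise multiplication, and c_t is the cell state.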

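The output hidden state h_t is mapped to a probability simplex over the intention label set {c_1, c_2, …, c_K} using a SoftMax layer:

$$P(c_k \mid h_t) = \frac{\exp\!\left(w_k^{\top} h_t + b_k\right)}{\sum_{j=1}^{K} \exp\!\left(w_j^{\top} h_t + b_j\right)}$$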

where

• w_k ∈ ℝ^q: Weight vector associated with class c_k,

• b_k ∈ ℝ: Bias term for class c_k,

• K: Number of discrete motion intention classes.

The predicted class label is

$$\hat{c}_t = \arg\max_{c_k} P(c_k \mid h_t)$$

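A. Training Model: The classifier is trained using a labelled dataset

$$\mathcal{D} = \big\{\big(Z^{(i)}, c^{(i)}\big)\big\}_{i=1}^{N}$$

where

• Z^(i) → The i-th normalised feature sequence of length T,

• c(i) ∈ {c1, …, cK} → The corresponding intention label.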

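The model parameters Θ, {w_k}, and {b_k} are optimised by minimising the categorical cross-entropy loss over the N training samples:

$$\mathcal{J}\big(\Theta, \{w_k\}, \{b_k\}\big) = -\frac{1}{N}\sum_{i=1}^{N} \log P\big(c^{(i)} \mid h_t^{(i)}\big)$$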

where

• ht(i) is the output hidden state for the i-th sample.
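The SoftMax mapping, the arg-max decision and the cross-entropy loss can be written directly from the definitions above. This is a minimal sketch with hypothetical helper names. Class labels are represented as indices 0, …, K-1. Subtracting the largest logit is a standard numerical-stability step and leaves the probabilities unchanged.

```python
import math


def class_probabilities(h, W, b):
    """P(c_k | h) = exp(w_k.h + b_k) / sum_j exp(w_j.h + b_j)."""
    logits = [sum(w * x for w, x in zip(w_k, h)) + b_k for w_k, b_k in zip(W, b)]
    peak = max(logits)                       # subtract max logit for stability
    exps = [math.exp(a - peak) for a in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_class(h, W, b):
    """Arg-max decision: index of the most probable intention class."""
    p = class_probabilities(h, W, b)
    return max(range(len(p)), key=p.__getitem__)


def cross_entropy(hidden_states, labels, W, b):
    """Mean categorical cross-entropy over N (hidden state, label index) pairs."""
    loss = 0.0
    for h, c in zip(hidden_states, labels):
        loss -= math.log(class_probabilities(h, W, b)[c])
    return loss / len(labels)
```

With all weights and biases at zero, every class receives probability 1/K and the loss equals log K, which is a useful check at initialisation.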

B. Real-Time Inference Constraints: The classification latency per inference cycle is denoted by τ_clf. To maintain synchronisation with the control model, it must satisfy the inequality

$$\tau_{\mathrm{clf}} \le \tau_{\mathrm{thr}}$$

where

• τ_thr ∈ [10, 20] ms is the threshold latency compatible with the TOM's control sampling period.

The LSTM is deployed with a fixed sequence length T and quantised weights for hardware-level acceleration during inference.
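One simple way to check the constraint τ_clf ≤ τ_thr offline is to time each inference call and count deadline misses. This is an assumption-laden illustration. `infer` stands for any hypothetical inference callable, and `profile_latency` is a hypothetical helper name. Python wall-clock timing on a desktop is only a rough proxy for the quantised, hard-real-time deployment described above.

```python
import time


def profile_latency(infer, inputs, tau_thr_ms):
    """Time each inference call.

    Returns (worst-case latency in ms, number of deadline misses, all latencies)."""
    latencies_ms = []
    for x in inputs:
        start = time.perf_counter()
        infer(x)
        latencies_ms.append((time.perf_counter() - start) * 1000.0)
    misses = sum(1 for lat in latencies_ms if lat > tau_thr_ms)   # tau_clf > tau_thr
    return max(latencies_ms), misses, latencies_ms
```

A typical call would be `worst, misses, _ = profile_latency(model_fn, feature_windows, tau_thr_ms=10.0)`. Reporting the worst case, not just the mean, matches the hard-deadline framing of the constraint.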

C. Evaluation Metrics: The classifier is evaluated using a disjoint validation set Dval under the following metrics:

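• Classification Accuracy:

$$\mathrm{Acc} = \frac{1}{\lvert \mathcal{D}_{\mathrm{val}} \rvert}\sum_{i \in \mathcal{D}_{\mathrm{val}}} \mathbb{I}\big[\hat{c}^{(i)} = c^{(i)}\big]$$

where I[·] is the indicator function.

• Per-Class F1-score:

$$F1_k = \frac{2\,\mathrm{Prec}_k\,\mathrm{Rec}_k}{\mathrm{Prec}_k + \mathrm{Rec}_k}$$

where Prec_k and Rec_k are the precision and recall of class c_k, used to assess performance under class imbalance.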

• Temporal Stability: A rolling-window smoothing factor is applied to the sequence of predicted labels, and consistency is quantified by measuring the variance of class transitions over a window W.
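The three validation metrics can be sketched as follows. Accuracy and per-class F1 follow the definitions above, with F1 computed in the equivalent form 2TP / (2TP + FP + FN). The text does not fully specify the temporal-stability statistic. This sketch therefore assumes it is the variance of the number of label changes counted in each trailing window of W predictions. All function names are hypothetical.

```python
def accuracy(y_true, y_pred):
    """Fraction of validation samples whose predicted label matches the true label."""
    return sum(1 for a, b in zip(y_true, y_pred) if a == b) / len(y_true)


def f1_per_class(y_true, y_pred, classes):
    """Per-class F1 as 2TP / (2TP + FP + FN), equivalent to 2PR / (P + R)."""
    scores = {}
    for c in classes:
        tp = sum(1 for a, b in zip(y_true, y_pred) if a == c and b == c)
        fp = sum(1 for a, b in zip(y_true, y_pred) if a != c and b == c)
        fn = sum(1 for a, b in zip(y_true, y_pred) if a == c and b != c)
        denom = 2 * tp + fp + fn
        scores[c] = 2 * tp / denom if denom else 0.0
    return scores


def transition_counts(labels, window):
    """Number of label changes inside each trailing window of `window` predictions."""
    changes = [0] + [int(labels[n] != labels[n - 1]) for n in range(1, len(labels))]
    return [sum(changes[max(0, n - window + 1): n + 1]) for n in range(len(labels))]


def transition_variance(labels, window):
    """Variance of the windowed transition counts (lower means steadier output)."""
    counts = transition_counts(labels, window)
    mean = sum(counts) / len(counts)
    return sum((k - mean) ** 2 for k in counts) / len(counts)
```

A constant prediction stream gives zero transition variance. A classifier that flickers between labels produces bursts of transitions and a higher variance.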

Algorithm: Real-Time MIE (LSTM-based)

Inputs:

T ← sequence length (fixed)

ℓ ← feature vector dimension

K ← number of intention classes

Θ ← trained LSTM parameters

W, b ← SoftMax weights and biases

fs ← sampling frequency

Δt ← 1/fs

Initialisation:

h0 ← zero vector of size q

Z ← zero matrix of size (T × ℓ)

t ← 0

Loop: For each time step t ≥ T do

1. Acquire normalised feature vector:

zt ← GetFeatureVector(t) ∈ ℝ^ℓ

2. Update feature buffer:

Shift Z left by 1 row

Insert zt into the last row of Z

3. Compute LSTM hidden state recursively:

ht ← LSTM(Z; Θ)

4. Compute class probabilities:

For k = 1 to K Do

s_k ← exp(W_k^T · ht + b_k)

End For

P ← s / sum(s)

5. Select intention class:

ĉt ← argmax_k P_k

6. Output class label ĉt

7. Wait Δt seconds

8. Increment time: t ← t + 1

End Loop
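The algorithm above can be turned into a runnable, self-contained sketch. Everything here is illustrative, and the sketch makes the following assumptions:

- The LSTM weights are random placeholders rather than the trained parameters Θ.
- The gate layout follows the standard LSTM cell.
- As in step 3, the hidden state is recomputed from h0 over the whole buffer Z at every step.
- The real-time wait of Δt is omitted, so the loop can run offline over a recorded feature stream.

`TinyLSTM` and `mie_loop` are hypothetical names.

```python
import math
import random


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def matvec(M, v):
    return [sum(m * x for m, x in zip(row, v)) for row in M]


class TinyLSTM:
    """Single-layer LSTM with input (i), forget (f), output (o) gates and candidate (g)."""

    def __init__(self, n_in, n_hidden, seed=0):
        rng = random.Random(seed)

        def mat(rows, cols):
            return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

        # (W, U, bias) per gate; placeholder values, not trained parameters
        self.params = {g: (mat(n_hidden, n_in), mat(n_hidden, n_hidden), [0.0] * n_hidden)
                       for g in "ifog"}
        self.n_hidden = n_hidden

    def step(self, z, h, c):
        pre = {g: [a + r + bias for a, r, bias in zip(matvec(W, z), matvec(U, h), b)]
               for g, (W, U, b) in self.params.items()}
        i = [sigmoid(a) for a in pre["i"]]
        f = [sigmoid(a) for a in pre["f"]]
        o = [sigmoid(a) for a in pre["o"]]
        g = [math.tanh(a) for a in pre["g"]]
        c_new = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c, i, g)]
        h_new = [ok * math.tanh(ck) for ok, ck in zip(o, c_new)]
        return h_new, c_new

    def run(self, Z):
        """Unroll over the buffered sequence Z starting from the zero state h0."""
        h, c = [0.0] * self.n_hidden, [0.0] * self.n_hidden
        for z in Z:
            h, c = self.step(z, h, c)
        return h


def mie_loop(feature_stream, lstm, W, b, T):
    """Sliding-window MIE: emit one class index per step once T vectors are buffered."""
    Z, labels = [], []
    for z_t in feature_stream:
        Z.append(z_t)                      # insert z_t as the last row
        if len(Z) > T:
            Z.pop(0)                       # shift the buffer left by one row
        if len(Z) < T:
            continue
        h_t = lstm.run(Z)
        logits = [sum(w * x for w, x in zip(w_k, h_t)) + b_k for w_k, b_k in zip(W, b)]
        peak = max(logits)
        s = [math.exp(a - peak) for a in logits]
        total = sum(s)
        P = [v / total for v in s]
        labels.append(max(range(len(P)), key=P.__getitem__))   # arg-max class
    return labels
```

Re-unrolling the window each step keeps the output a function of the last T vectors only. A streaming variant that carries (h, c) forward would be cheaper but would depend on the full history.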

3.3. Adaptive FL controller design

3.3.1. FL model

Let the control objective at time t be the regulation of the error vector

$$e(t) = x_d(t) - x_r(t)$$

where:

• x_d(t) ∈ ℝ^k: Desired task-space state inferred from intention classification,

• x_r(t) ∈ ℝ^k: Measured robot task-space state,

• e(t) ∈ ℝ^k: Control error vector.

Each component e_j(t) of the error vector is independently processed by a single-input single-output (SISO) FL controller, indexed by j ∈ {1, 2, …, k}. The input domain of the FL controller is partitioned into linguistic variables such as negative large (NL), negative medium (NM), …, positive large (PL), denoted as

$$\mathcal{L} = \{\mathrm{NL}, \mathrm{NM}, \ldots, \mathrm{PL}\}$$

Each input e_j(t) is fuzzified by a set of overlapping membership functions {μ_l^(j)(e_j)}, defined for l ∈ L, such that

$$\mu_l^{(j)}: \mathbb{R} \rightarrow [0, 1]$$

and satisfying the partition of unity:

$$\sum_{l \in \mathcal{L}} \mu_l^{(j)}(e_j) = 1$$

Triangular or Gaussian membership functions are selected based on their differentiability and support compactness.

For a triangular membership function centred at c_l with spread σ_l, the functional form is

$$\mu_l^{(j)}(e_j) = \max\!\left(0,\ 1 - \frac{\lvert e_j - c_l \rvert}{\sigma_l}\right)$$

The fuzzy inference rule base consists of mappings from input linguistic variables to output control commands.

A typical rule in the Mamdani-type formulation is of the form:

Rule r:

If ej is l ∈ L, then uj is vr ∈ L′,

where:

• vr: Linguistic output label,

• L′: Output linguistic domain (e.g., actuator effort classes).

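For each rule r, the degree of activation is computed as

$$\alpha_r(t) = \mu_{l_r}^{(j)}\big(e_j(t)\big)$$

where l_r ∈ L is the antecedent label of rule r, and the rule outputs are aggregated using the centre of gravity (COG) defuzzification method. The final control output u_j(t) ∈ ℝ for the j-th channel is computed as

$$u_j(t) = \frac{\sum_{r=1}^{R} \alpha_r(t)\, v_r}{\sum_{r=1}^{R} \alpha_r(t)}$$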

where:

• R: Number of rules in the FL rule base,

• v_r ∈ ℝ: Crisp output value corresponding to rule r's conclusion.

The overall control vector applied to the robot at time t is thus

$$u(t) = \big[u_1(t), u_2(t), \ldots, u_k(t)\big]^{\top} \in \mathbb{R}^k$$
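A SISO controller of this form can be sketched as below. The sketch makes these assumptions:

- There is one rule per linguistic label (IF e_j is l_r THEN u_j is v_r).
- Triangular sets are evenly spaced, and their overlap gives the partition of unity between the outermost centres.
- As a hypothetical design choice, the error is clamped to that range so that the outer sets behave like shoulders.

With crisp rule outputs v_r, the COG step reduces to the activation-weighted average. The seven-label example in the comments is illustrative only, and the function names are hypothetical.

```python
def tri_mf(e, c, sigma):
    """Triangular membership centred at c with half-width sigma."""
    return max(0.0, 1.0 - abs(e - c) / sigma)


def fuzzy_siso(e, centres, widths, outputs):
    """Return (u_j, activations) for one error channel.

    `centres` must be sorted; e is clamped to [centres[0], centres[-1]]."""
    e = min(max(e, centres[0]), centres[-1])
    alphas = [tri_mf(e, c, s) for c, s in zip(centres, widths)]
    total = sum(alphas)
    if total == 0.0:
        return 0.0, alphas                 # no rule fired: sets do not cover e
    u = sum(a * v for a, v in zip(alphas, outputs)) / total
    return u, alphas


def fuzzy_control(errors, rule_bases):
    """Apply an independent SISO controller to each component e_j of e(t)."""
    return [fuzzy_siso(e, *rb)[0] for e, rb in zip(errors, rule_bases)]


# Illustrative rule base: hypothetical labels NL, NM, NS, ZE, PS, PM, PL on a
# normalised error axis, with unit spacing and outputs proportional to the centres.
centres = [-3.0, -2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
widths = [1.0] * 7
outputs = [0.5 * c for c in centres]
u, alphas = fuzzy_siso(0.5, centres, widths, outputs)   # halfway between ZE and PS
```

With outputs proportional to the centres, the controller interpolates linearly between rules and saturates at the PL output for large errors. Reshaping `outputs` is what introduces nonlinearity.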

3.3.2. Adaptive mechanism

The adaptive FL controller continuously modifies the centres and widths of the membership functions μ_l^(j)(e_j) and optionally adjusts the output contribution v_r of each rule r. The triangular membership function, previously defined in Equation (31), is now parameterised by a time-varying centre c_l(t) and width σ_l(t) as follows:

$$\mu_l^{(j)}(e_j, t) = \max\!\left(0,\ 1 - \frac{\lvert e_j - c_l(t) \rvert}{\sigma_l(t)}\right)$$

The adaptation of cl(t) and σl(t) is driven by a local control error gradient.

Define the instantaneous squared control error for the j-th output as:

$$E_j(t) = \tfrac{1}{2}\, e_j^2(t)$$

The adaptation law for the centre c_l(t) follows a gradient descent method aimed at minimising E_j(t), given by:

$$c_l(t+1) = c_l(t) - \eta_c \frac{\partial E_j(t)}{\partial c_l(t)}$$

where ηc > 0 is the learning rate for centre adaptation.

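By applying the chain rule, the gradient term becomes:

$$\frac{\partial E_j}{\partial c_l} = e_j\, \frac{\partial e_j}{\partial u_j}\, \frac{\partial u_j}{\partial \mu_l^{(j)}}\, \frac{\partial \mu_l^{(j)}}{\partial c_l}$$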

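Similarly, the width adaptation rule is defined as:

$$\sigma_l(t+1) = \sigma_l(t) - \eta_\sigma \frac{\partial E_j(t)}{\partial \sigma_l(t)}$$

with learning rate η_σ > 0 and gradient term:

$$\frac{\partial E_j}{\partial \sigma_l} = e_j\, \frac{\partial e_j}{\partial u_j}\, \frac{\partial u_j}{\partial \mu_l^{(j)}}\, \frac{\partial \mu_l^{(j)}}{\partial \sigma_l}$$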

Adaptation of the rule conclusion parameters vr can also be included, particularly when the robot dynamics exhibit substantial variation.

The update law is defined as:

$$v_r(t+1) = v_r(t) - \eta_v \frac{\partial E_j(t)}{\partial v_r(t)}$$

where ηv > 0 is the learning rate for rule conclusion adaptation.
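One gradient step of these adaptation laws can be sketched for a single SISO channel with triangular sets and one rule per set. The key assumption, stated in the code, is that the plant sensitivity ∂e_j/∂u_j = -b with an unknown b > 0. Absorbing b into the learning rates turns each descent step into θ ← θ + η·e_j·∂u_j/∂θ. The sketch also uses the following:

- ∂u_j/∂α_r = (v_r - u_j)/Σα, ∂u_j/∂v_r = α_r/Σα, ∂α/∂c = sign(e - c)/σ and ∂α/∂σ = |e - c|/σ².
- Widths are floored at a hypothetical `sigma_min`.
- `adapt_step` is a hypothetical name.

```python
def tri_mf(e, c, sigma):
    """Triangular membership centred at c with half-width sigma."""
    return max(0.0, 1.0 - abs(e - c) / sigma)


def adapt_step(e, centres, widths, outputs, eta_c, eta_s, eta_v, sigma_min=1e-3):
    """One gradient step on E = e^2 / 2 for a SISO fuzzy controller.

    Assumes de/du = -b with unknown b > 0 absorbed into the learning rates,
    so every parameter moves as theta <- theta + eta * e * du/dtheta."""
    alphas = [tri_mf(e, c, s) for c, s in zip(centres, widths)]
    total = sum(alphas)
    if total == 0.0:
        return list(centres), list(widths), list(outputs)    # no rule fired: no update
    u = sum(a * v for a, v in zip(alphas, outputs)) / total
    new_c, new_s, new_v = [], [], []
    for a, c, s, v in zip(alphas, centres, widths, outputs):
        du_dalpha = (v - u) / total                           # quotient rule on COG
        if a > 0.0:
            sign = 1.0 if e > c else (-1.0 if e < c else 0.0)
            dalpha_dc = sign / s                              # d/dc of 1 - |e - c|/s
            dalpha_ds = abs(e - c) / s ** 2                   # d/ds of 1 - |e - c|/s
        else:
            dalpha_dc = dalpha_ds = 0.0                       # inactive rule: zero gradient
        new_c.append(c + eta_c * e * du_dalpha * dalpha_dc)
        new_s.append(max(sigma_min, s + eta_s * e * du_dalpha * dalpha_ds))
        new_v.append(v + eta_v * e * a / total)               # du/dv_r = alpha_r / sum
    return new_c, new_s, new_v
```

For a positive error, the rule outputs of the active sets increase, which raises u_j and, under the assumed positive plant gain, reduces the error. Inactive rules are left untouched.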

3.4. Lyapunov stability formulation

The stability of the adaptive model is proven using the composite Lyapunov function:

where P > 0 is a positive definite matrix, and

A. Stability Conditions and Convergence Constraints: To ensure boundedness of the control signal and avoidance of parameter drift, the adaptation gains ηc, ησ, ηv are selected such that:

where

• J → The Jacobian matrix of the control output with respect to the parameters,

• λmax → Its largest eigenvalue.

Furthermore, convergence is guaranteed if the following Lyapunov function derivative is non-positive:

4. EXPERIMENTAL DESIGN AND SETUP

4.1. Experimental platform

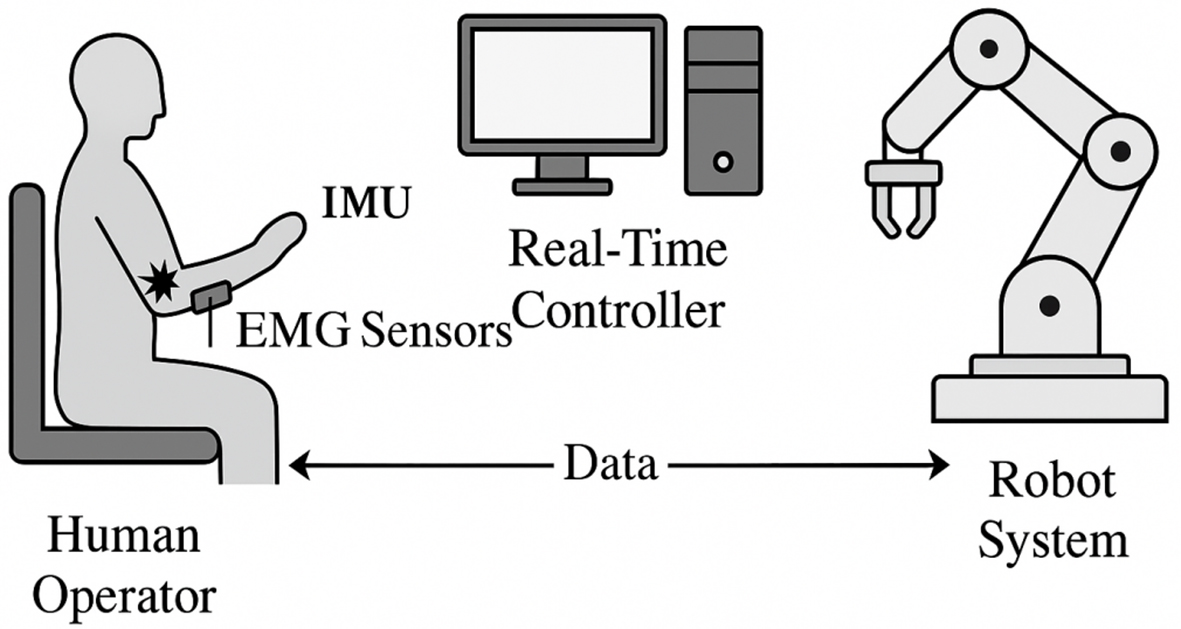

All experiments were conducted in a controlled laboratory environment to ensure consistent sensor placement and stable conditions for assessing baseline performance [Figure 3]. The experimental platform supports high-precision teleoperation tasks and includes a wearable sensing interface for the operator, a robot execution model, and a real-time computing layer for control and inference. This section outlines the platform's physical setup.

A. Human-Side Interface: The human-side interface consists of a multimodal wearable system that captures upper-limb kinematics and physiological signals. The following sensor modules are rigidly affixed to the participant's dominant arm:

• IMUs

• Surface EMG Electrodes

• Joint Angle Encoders

All signals are timestamped and resampled to a unified frequency fs = 200 Hz. Sensor data is transmitted via a wired connection to the embedded controller, minimising transmission delay and signal loss.
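The text does not specify how timestamped streams are brought onto the common 200 Hz grid. One simple option, shown here as an assumption rather than the authors' method, is linear interpolation at uniformly spaced output times. The sketch assumes strictly increasing timestamps in seconds. It also assumes any anti-aliasing filtering has already been applied, because interpolating a 1 kHz signal down to 200 Hz without it would alias content above 100 Hz. `resample_linear` is a hypothetical name.

```python
from bisect import bisect_right


def resample_linear(timestamps, values, fs_out=200.0):
    """Linearly interpolate one timestamped channel onto a uniform fs_out grid.

    `timestamps` must be strictly increasing (seconds)."""
    dt = 1.0 / fs_out
    t0, t_end = timestamps[0], timestamps[-1]
    n_out = int((t_end - t0) / dt + 1e-9) + 1        # grid points that fit in [t0, t_end]
    grid, out = [], []
    for n in range(n_out):
        t = t0 + n * dt
        k = bisect_right(timestamps, t) - 1          # last input sample at or before t
        k = min(max(k, 0), len(timestamps) - 2)      # keep a valid [k, k+1] bracket
        ta, tb = timestamps[k], timestamps[k + 1]
        w = (t - ta) / (tb - ta)
        out.append(values[k] + w * (values[k + 1] - values[k]))
        grid.append(t)
    return grid, out
```

Applying the same grid to every channel, starting from a shared hardware timestamp, keeps EMG, IMU and encoder samples aligned sample-for-sample.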

B. Robot-Side Platform: The robot-side model comprises a 6-degree-of-freedom (DoF) collaborative manipulator with torque-controlled joints and a custom tool flange for object interaction.

The robot operates in impedance control mode, using torque feedback to estimate compliance. All control commands

The embedded real-time controller features an 8-core central processing unit (CPU), 16 GB of low-power double data rate (LPDDR) memory, a field-programmable gate array (FPGA) coprocessor, and a real-time operating system kernel supporting hard-deadline scheduling. It hosts all classification, control, and communication modules, keeping total system latency at or below 20 ms under full load.

4.2. Test scenarios and tasks

To evaluate the robustness and efficiency of the proposed TOM, a set of task scenarios is designed. The tasks represent realistic manipulation activities with different complexity levels and allow testing under normal as well as disturbed conditions. Each task focuses on key abilities such as trajectory tracking, intent adaptation, dexterous manipulation, and collision-free motion. All experiments use the same human–robot interface described earlier.

Three manipulation tasks are defined based on increasing difficulty, with complexity varying in trajectory variability, spatial tolerance, and timing precision.

Task 1 (Low complexity) involves moving the end-effector from a rest position to a fixed target point. There are no obstacles or timing limits, and only position accuracy is evaluated. The intent class ck corresponds to a static target xd(k), which remains unchanged during motion. Each trial takes less than 5 s.

Task 2 (Moderate complexity) is a pick-and-place task with static obstacle avoidance. The robot must grasp an object and place it into a bin while adapting its path around a fixed barrier. Evaluation includes trajectory deviation, task time, and collision count. Intent class transitions c(t) reflect task phases such as reach, grasp, transport, and place.

Task 3 (High complexity) requires sequential sorting of 3 objects into the correct bins in the presence of distractors. Spatial disambiguation is required, and repeated actions introduce contextual variation in EMG signals, which tests TOM robustness. Inter-task transitions must satisfy strict timing limits (e.g., < 1 s between picks).

4.3. Participants and data collection

Human participants were recruited to validate the TOM in terms of MIE decoding and control responsiveness. The study involved twelve healthy right-handed adults (6 male, 6 female), aged between 22 and 31 years. All participants were free from neuromuscular disorders and had no prior experience with TOMs. The study followed standard procedures for participant enrolment, ethical approval, and data collection to support reproducibility and later statistical analysis. An analysis of variance (ANOVA) was conducted on all data collected during the experiments to assess the effect of the proposed adaptive FL control and real-time MIE on the overall performance metrics of the complete system. Analysis tools included SPSS Statistics for guided multi-group analysis, G*Power 3.1 for repeated-measures power analysis, and XLSTAT for ANOVA within Excel.
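To illustrate the statistics involved, the sketch below computes a one-way repeated-measures ANOVA F statistic and partial eta squared, a common effect-size measure, for a subjects × conditions table. It is a simplified sketch, not the authors' analysis. The actual design also varies task complexity, so this one-way layout is an assumption. A P value additionally requires the F distribution survival function (for example `scipy.stats.f.sf`, or the SPSS and XLSTAT tools named above), which the standard library lacks. `rm_anova_one_way` is a hypothetical name.

```python
def rm_anova_one_way(data):
    """One-way repeated-measures ANOVA on data[subject][condition].

    Returns (F, df_conditions, df_error, partial eta squared)."""
    n = len(data)                        # subjects
    k = len(data[0])                     # conditions
    grand = sum(sum(row) for row in data) / (n * k)
    cond_means = [sum(row[c] for row in data) / n for c in range(k)]
    subj_means = [sum(row) / k for row in data]
    ss_cond = n * sum((m - grand) ** 2 for m in cond_means)
    ss_subj = k * sum((m - grand) ** 2 for m in subj_means)   # removed from error term
    ss_total = sum((x - grand) ** 2 for row in data for x in row)
    ss_error = ss_total - ss_cond - ss_subj
    df_cond, df_error = k - 1, (k - 1) * (n - 1)
    f_stat = (ss_cond / df_cond) / (ss_error / df_error)
    partial_eta_sq = ss_cond / (ss_cond + ss_error)
    return f_stat, df_cond, df_error, partial_eta_sq
```

Removing the between-subject sum of squares from the error term is what distinguishes the repeated-measures test from a between-groups ANOVA. Each participant serves as their own control across conditions.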

Each participant performed a sequence of tasks consisting of system initialisation, practice runs, and 10 experimental trials per condition. During the experiments, EMG, IMU, and joint encoder signals were recorded at 1,000 Hz, then downsampled to 200 Hz. All signals were synchronised with classifier outputs and robot feedback using hardware-based timestamps.

For evaluation, leave-one-subject-out cross-validation was applied, where data from 11 subjects were used for training and 1 subject for testing. Global-statistics normalisation was applied to reduce inter-subject variability. In total, more than 1,200 labelled trials were collected and used for performance analysis.
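The evaluation protocol can be sketched as a leave-one-subject-out split combined with z-score statistics fitted only on the training subjects of each fold. This pooled estimate is one reading of "global-statistics normalisation" and avoids leaking the held-out subject's data into the scaling. The sketch assumes population standard deviations and guards constant features. The helper names are hypothetical.

```python
import math


def loso_splits(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices) for each subject."""
    for s in sorted(set(subject_ids)):
        train = [i for i, sid in enumerate(subject_ids) if sid != s]
        test = [i for i, sid in enumerate(subject_ids) if sid == s]
        yield s, train, test


def fit_zscore(rows):
    """Per-feature mean and standard deviation pooled over the training rows."""
    dim = len(rows[0])
    mu = [sum(r[j] for r in rows) / len(rows) for j in range(dim)]
    sd = [math.sqrt(sum((r[j] - mu[j]) ** 2 for r in rows) / len(rows)) for j in range(dim)]
    return mu, [s if s > 0 else 1.0 for s in sd]      # guard constant features


def apply_zscore(row, mu, sd):
    return [(x - m) / s for x, m, s in zip(row, mu, sd)]
```

In each fold, `fit_zscore` would be called on the training rows only, and the resulting statistics applied to both training and held-out rows.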

4.3.1. Performance metrics

Quantitative evaluation of the TOM considers accuracy, responsiveness, trajectory quality, user experience, and network reliability. Metrics include classification accuracy, control smoothness, task performance, and subjective usability.

To isolate the effects of temporal modelling and adaptive control, a 2 × 2 factorial design with three baseline configurations was used. The proposed model combines an LSTM-based intention classifier (128-64 units, Section 3.2.3) with an adaptive FL controller, where parameters are updated online using Equations (37)-(41).

Baseline 1 (FF + Adaptive FL) replaces the LSTM with a feed-forward neural network (FFNN) [32-64-5, rectified linear unit (ReLU)/SoftMax], processing single-frame feature vectors zt ∈ ℝ32 without temporal context.

Baseline 2 (LSTM + Fixed FL) uses the same LSTM but a non-adaptive FL controller with fixed parameters (cl, σl, vr) and the same rule base and membership model [Equations (28)-(34)].

Baseline 3 (FF + Fixed FL) combines both limitations.

All models use identical sensor inputs (EMG, IMU, kinematics), the same feature extraction and normalisation pipeline [Equations (9)-(18)], and a dataset of 1,200 trials from 12 participants, ensuring differences arise only from temporal modelling and control adaptation.

5. RESULTS AND DISCUSSION

5.1. Performance of MIE

Result Analysis of Classification Accuracy:

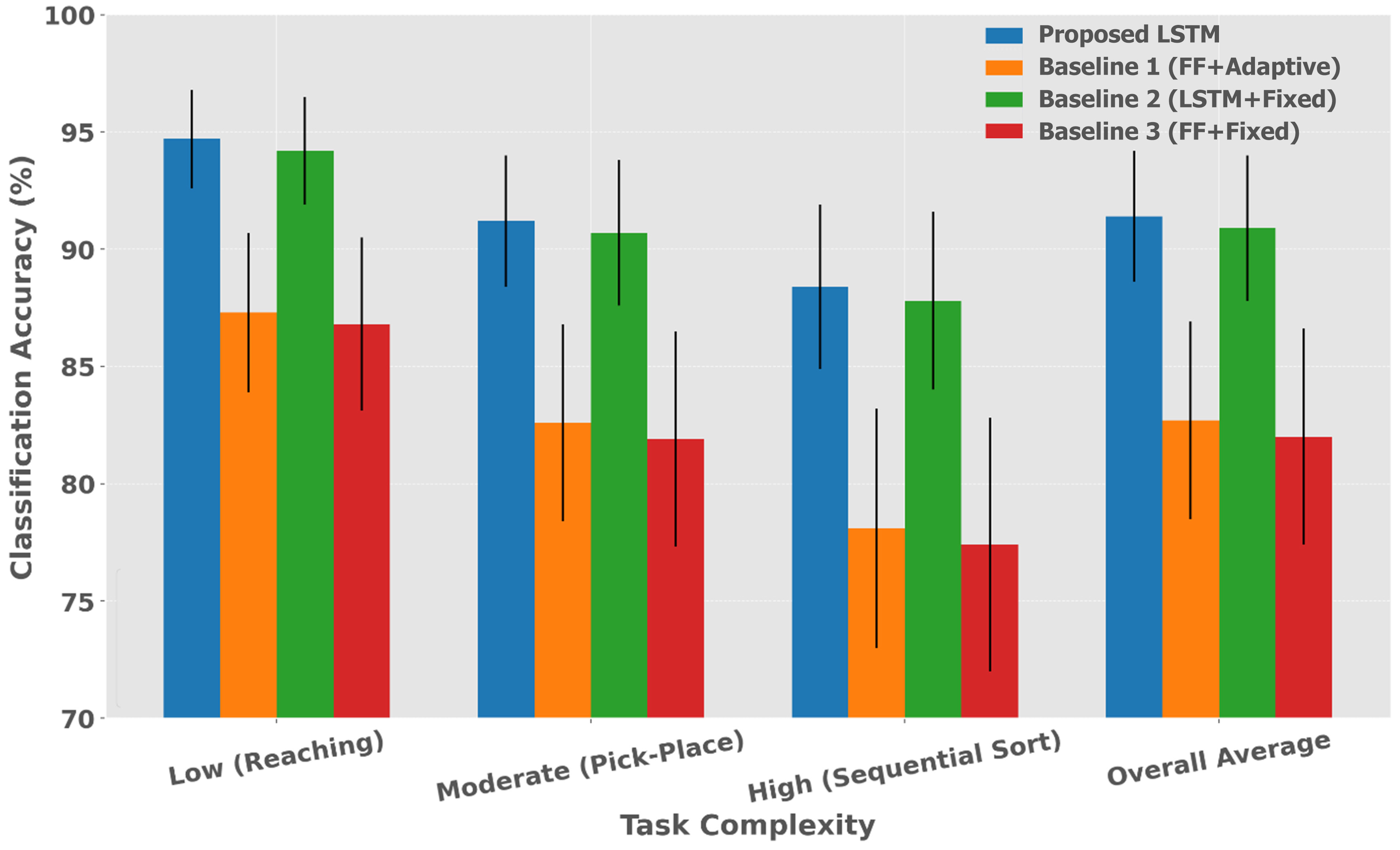

The LSTM-based intention classifier outperformed the feed-forward baselines in all task scenarios. Classification accuracy was computed with the accuracy metric defined in Section 3.2.3 across all experimental trials for each participant and task condition [Table 1 and Figure 4].

Figure 4. MIE accuracy. Bars show mean values; error bars indicate the standard deviation. MIE: Motion intention estimation; LSTM: long short-term memory; FF: feed-forward.

Table 1. MIE accuracy (%)

| Task complexity | Proposed LSTM | Baseline 1 (FF + adaptive) | Baseline 2 (LSTM + fixed) | Baseline 3 (FF + fixed) |
|---|---|---|---|---|
| Low (reaching) | 94.7 ± 2.1 | 87.3 ± 3.4 | 94.2 ± 2.3 | 86.8 ± 3.7 |
| Moderate (pick-place) | 91.2 ± 2.8 | 82.6 ± 4.2 | 90.7 ± 3.1 | 81.9 ± 4.6 |
| High (sequential sort) | 88.4 ± 3.5 | 78.1 ± 5.1 | 87.8 ± 3.8 | 77.4 ± 5.4 |
| Overall average | 91.4 ± 2.8 | 82.7 ± 4.2 | 90.9 ± 3.1 | 82.0 ± 4.6 |

The LSTM-based methods (Proposed and Baseline 2) consistently outperformed the feed-forward alternatives (Baselines 1 and 3). Between configurations sharing the same controller, the margin was 7.4-10.4 percentage points, and it widened as task complexity increased. The temporal context provided by the LSTM enables robust handling of sequential dependencies in human motion intention. This is most evident in the high-complexity sequential sorting task, where the feed-forward methods showed the largest performance degradation.
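Because the four configurations form a 2 × 2 design, the overall averages in Table 1 can be decomposed into two main effects and an interaction. The short calculation below uses only the reported table values. It is a descriptive decomposition of the means, not a substitute for the statistical tests.

```python
# Overall average accuracy (%) from Table 1, keyed by (classifier, controller)
acc = {
    ("LSTM", "adaptive"): 91.4,   # Proposed
    ("FF", "adaptive"): 82.7,     # Baseline 1
    ("LSTM", "fixed"): 90.9,      # Baseline 2
    ("FF", "fixed"): 82.0,        # Baseline 3
}


def mean(*values):
    return sum(values) / len(values)


# Main effect of temporal modelling: LSTM minus FF, averaged over controllers
temporal_effect = mean(acc["LSTM", "adaptive"], acc["LSTM", "fixed"]) - mean(
    acc["FF", "adaptive"], acc["FF", "fixed"])
# Main effect of adaptation: adaptive minus fixed, averaged over classifiers
adaptation_effect = mean(acc["LSTM", "adaptive"], acc["FF", "adaptive"]) - mean(
    acc["LSTM", "fixed"], acc["FF", "fixed"])
# Interaction: does the LSTM gain depend on which controller is used?
interaction = (acc["LSTM", "adaptive"] - acc["FF", "adaptive"]) - (
    acc["LSTM", "fixed"] - acc["FF", "fixed"])

print(round(temporal_effect, 2), round(adaptation_effect, 2), round(interaction, 2))
# prints: 8.8 0.6 -0.2
```

The decomposition shows an 8.8-point effect of temporal modelling against a 0.6-point effect of controller adaptation. The -0.2-point interaction is negligible. This agrees with the observation that adaptation mainly affects control rather than recognition.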

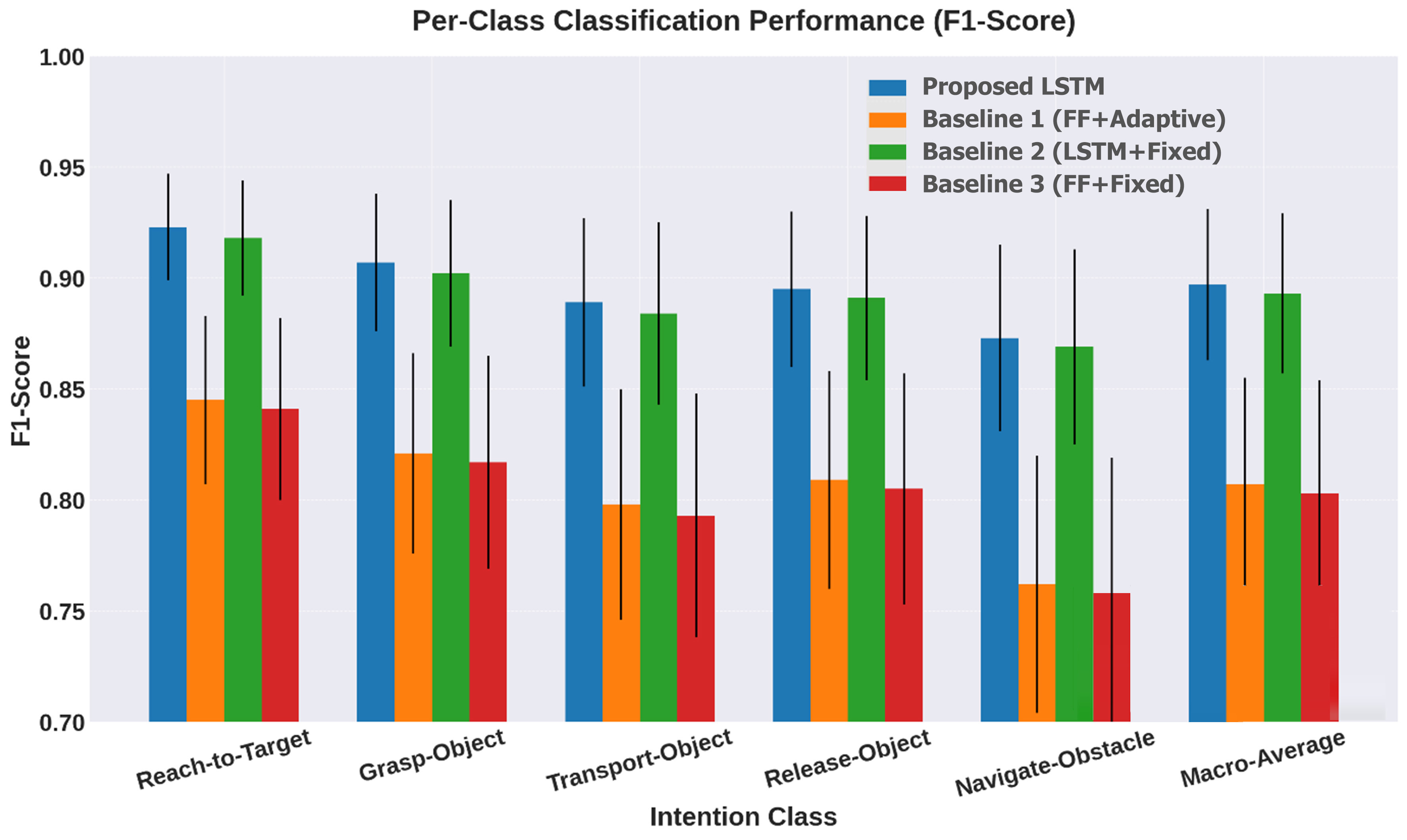

Figure 5 and Table 2 present the per-class analysis. It shows consistent LSTM superiority across all intention categories, with the largest gains in the more complex manipulation phases, namely obstacle navigation and object transport. The adaptive FL controller (Proposed vs. Baseline 2) adds only 0.5-0.6 percentage points of classification accuracy, indicating that adaptation mainly enhances control performance rather than intention recognition.

Figure 5. Per-class analysis. Error bars indicate the standard deviation. LSTM: long short-term memory; FF: feed-forward.

Table 2. Per-class classification performance (F1-score)

| Intention class | Proposed LSTM | Baseline 1 (FF + adaptive) | Baseline 2 (LSTM + fixed) | Baseline 3 (FF + fixed) |
| --- | --- | --- | --- | --- |
| Reach-to-target | 0.923 ± 0.024 | 0.845 ± 0.038 | 0.918 ± 0.026 | 0.841 ± 0.041 |
| Grasp-object | 0.907 ± 0.031 | 0.821 ± 0.045 | 0.902 ± 0.033 | 0.817 ± 0.048 |
| Transport-object | 0.889 ± 0.038 | 0.798 ± 0.052 | 0.884 ± 0.041 | 0.793 ± 0.055 |
| Release-object | 0.895 ± 0.035 | 0.809 ± 0.049 | 0.891 ± 0.037 | 0.805 ± 0.052 |
| Navigate-obstacle | 0.873 ± 0.042 | 0.762 ± 0.058 | 0.869 ± 0.044 | 0.758 ± 0.061 |
| Macro-average | 0.897 ± 0.034 | 0.807 ± 0.048 | 0.893 ± 0.036 | 0.803 ± 0.051 |
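The macro-average row of Table 2 is the unweighted mean of the five per-class F1 scores, where each F1 is the harmonic mean of precision and recall. The Python sketch below recomputes it from the table and identifies the class with the largest gain over Baseline 1. The `f1_score` helper is included only to show the definition.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Per-class mean F1 from Table 2
CLASSES = ["Reach-to-target", "Grasp-object", "Transport-object",
           "Release-object", "Navigate-obstacle"]
F1 = {
    "Proposed":   [0.923, 0.907, 0.889, 0.895, 0.873],
    "Baseline 1": [0.845, 0.821, 0.798, 0.809, 0.762],
    "Baseline 2": [0.918, 0.902, 0.884, 0.891, 0.869],
    "Baseline 3": [0.841, 0.817, 0.793, 0.805, 0.758],
}

def macro_average(scores):
    """Unweighted mean over classes (each class counts equally)."""
    return sum(scores) / len(scores)

macro = {model: round(macro_average(s), 3) for model, s in F1.items()}
gain_vs_ff = {c: round(p - b, 3)
              for c, p, b in zip(CLASSES, F1["Proposed"], F1["Baseline 1"])}
largest_gain = max(gain_vs_ff, key=gain_vs_ff.get)
print(macro, largest_gain)
```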

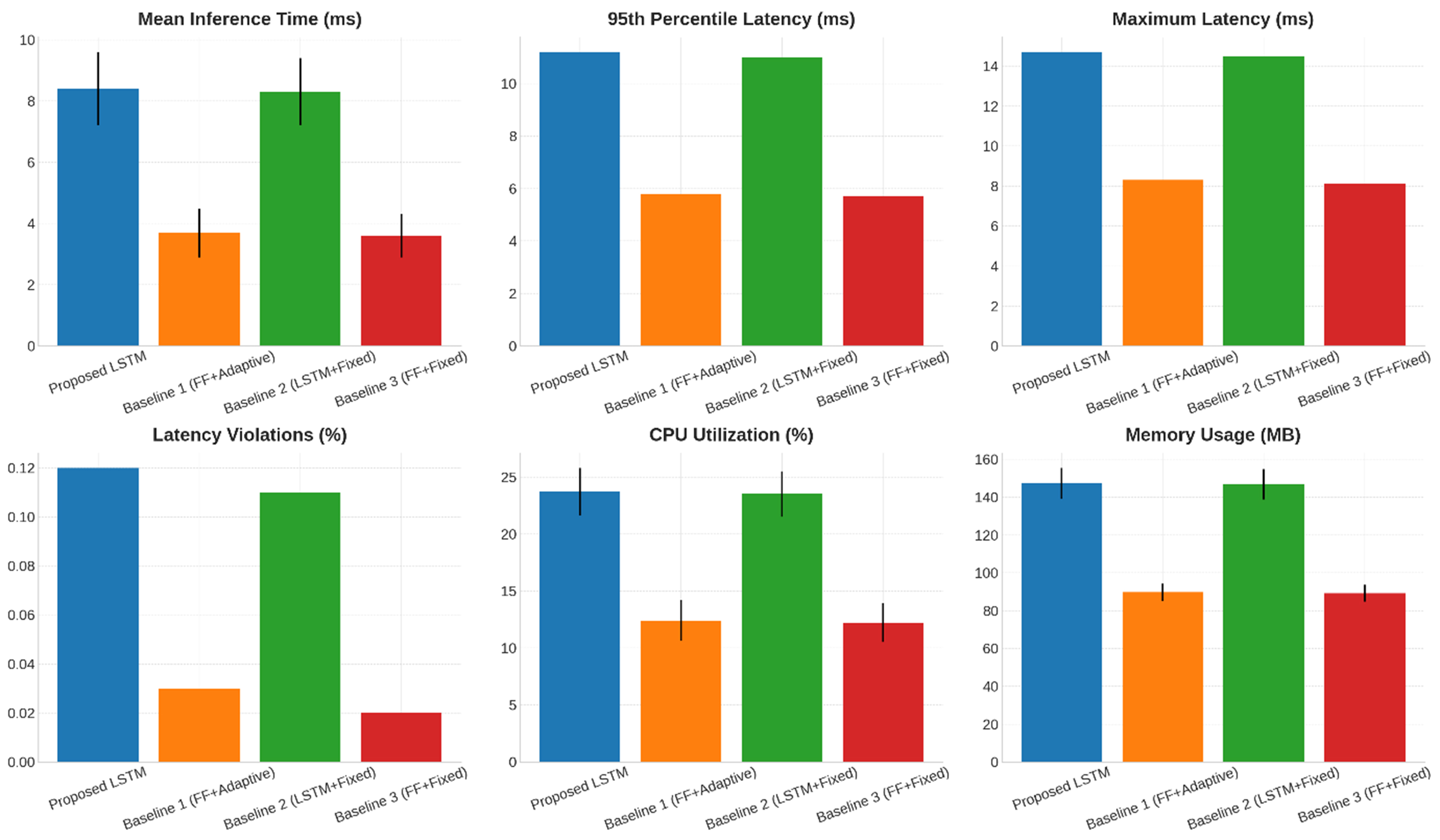

Real-Time Processing Performance: Real-time performance evaluation assessed the computational latency and processing stability of the LSTM-based intention classifier under embedded hardware constraints. Processing time was measured from feature-vector input to class-prediction output, including all preprocessing and inference operations. Table 3 reports the results.

Table 3. Real-time processing performance

| Performance metric | Proposed LSTM | Baseline 1 (FF + adaptive) | Baseline 2 (LSTM + fixed) | Baseline 3 (FF + fixed) |
| --- | --- | --- | --- | --- |
| Mean inference time (ms) | 8.4 ± 1.2 | 3.7 ± 0.8 | 8.3 ± 1.1 | 3.6 ± 0.7 |
| 95th percentile latency (ms) | 11.2 | 5.8 | 11.0 | 5.7 |
| Maximum latency (ms) | 14.7 | 8.3 | 14.5 | 8.1 |
| Latency violations (%) | 0.12 | 0.03 | 0.11 | 0.02 |
| CPU utilisation (%) | 23.7 ± 2.1 | 12.4 ± 1.8 | 23.5 ± 2.0 | 12.2 ± 1.7 |
| Memory usage (MB) | 147.3 ± 8.2 | 89.7 ± 4.6 | 146.8 ± 8.0 | 89.2 ± 4.4 |

The real-time analysis shows that the LSTM needs about 2.3 times the inference time of the feed-forward variants (8.4 ± 1.2 ms vs. 3.7 ± 0.8 ms), together with roughly 1.9 times the CPU utilisation and 1.6 times the memory. It nevertheless remains well below the critical 20 ms threshold [Equation (23)]. Despite these higher resource demands, the LSTM kept a low latency-violation rate of 0.12%, attributed mainly to initialisation and memory-management events. A 95th-percentile latency of 11.2 ms and a maximum of 14.7 ms indicate reliable performance across varying loads. CPU utilisation was stable at 23.7% ± 2.1%, and memory usage was 147.3 ± 8.2 MB, supporting the LSTM's suitability for embedded real-time control. Figure 6 illustrates the latency distribution of the deployed LSTM-based intention classifier under embedded execution constraints, showing stable mean inference time, bounded worst-case latency, and a negligible violation frequency across repeated trials. This timing behaviour complies with the hard real-time threshold.
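The latency statistics in Table 3 can be summarised from raw timing samples as in the Python sketch below. The nearest-rank percentile method, the treatment of any sample above `deadline_ms` as a violation, and the synthetic samples are all assumptions. The paper's exact definition of a latency violation is not restated in this section.

```python
import statistics

def latency_summary(samples_ms, deadline_ms=20.0):
    """Summarise per-prediction latencies (ms) against a hard deadline."""
    ordered = sorted(samples_ms)
    n = len(ordered)
    rank = (95 * n + 99) // 100  # nearest-rank 95th percentile: ceil(0.95 * n) in integers
    return {
        "mean": statistics.mean(ordered),
        "std": statistics.stdev(ordered),
        "p95": ordered[rank - 1],
        "max": ordered[-1],
        "violation_pct": 100.0 * sum(t > deadline_ms for t in ordered) / n,
    }

# Synthetic example: 99 nominal inferences and one slow outlier
samples = [8.0] * 99 + [25.0]
summary = latency_summary(samples)
print(summary["p95"], summary["max"], summary["violation_pct"])
```

Integer arithmetic for the percentile rank avoids floating-point surprises such as `0.95 * n` landing just below an integer.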

Figure 6. Real-time processing performance analysis. Error bars indicate the standard deviation. LSTM: long short-term memory; FF: feed-forward; CPU: central processing unit.

Comparison with Baseline Methods: Statistical significance testing using repeated-measures ANOVA confirmed substantial differences between the proposed LSTM model and the feed-forward baselines on all evaluation metrics. The difference from Baseline 2 in processing stability was not significant [Table 4].

Table 4. Statistical significance analysis (P-values)

| Comparison | Classification accuracy | F1-score | Processing stability |
| --- | --- | --- | --- |
| Proposed vs. Baseline 1 | P < 0.001 | P < 0.001 | P < 0.05 |
| Proposed vs. Baseline 2 | P < 0.05 | P < 0.05 | P > 0.05 |
| Proposed vs. Baseline 3 | P < 0.001 | P < 0.001 | P < 0.05 |
| Baseline 1 vs. Baseline 3 | P > 0.05 | P > 0.05 | P > 0.05 |
| Baseline 2 vs. Baseline 3 | P < 0.001 | P < 0.001 | P < 0.05 |

The temporal modelling capability of the LSTM yields statistically significant improvements (P < 0.001) in classification accuracy and F1-score (the harmonic mean of precision and recall) over the feed-forward variants. The adaptive control component contributes modest but statistically significant improvements (P < 0.05) in the comparison between the proposed TOM and Baseline 2. This suggests that adaptation improves overall TOM performance through the feedback coupling between control and classification.
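For readers unfamiliar with the test named above, the Python sketch below computes the F statistic of a one-way repeated-measures ANOVA, in which each participant is measured under every model. It partitions the total variance into condition, subject, and residual terms. No sphericity correction is applied, and the p-value is omitted; it would come from the F distribution's survival function (for example `scipy.stats.f.sf(F, df1, df2)`). The small data set is hypothetical.

```python
def rm_anova_f(data):
    """One-way repeated-measures ANOVA.

    data[i][j] is the score of subject i under condition j.
    Returns (F, df_conditions, df_error).
    """
    n = len(data)       # subjects
    k = len(data[0])    # conditions (models)
    grand = sum(sum(row) for row in data) / (n * k)
    cond_means = [sum(row[j] for row in data) / n for j in range(k)]
    subj_means = [sum(row) / k for row in data]
    ss_cond = n * sum((m - grand) ** 2 for m in cond_means)
    ss_subj = k * sum((m - grand) ** 2 for m in subj_means)  # removed from the error term
    ss_total = sum((x - grand) ** 2 for row in data for x in row)
    ss_error = ss_total - ss_cond - ss_subj
    df_cond, df_error = k - 1, (k - 1) * (n - 1)
    f_stat = (ss_cond / df_cond) / (ss_error / df_error)
    return f_stat, df_cond, df_error

scores = [[1, 2, 3], [2, 3, 4], [3, 4, 6]]  # hypothetical: 3 participants x 3 models
print(rm_anova_f(scores))
```

Removing the between-subject sum of squares from the error term is what distinguishes this from an ordinary one-way ANOVA, and it is why repeated-measures designs are sensitive with only 12 participants.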

Temporal stability analysis using the variance metric of Equation (26) shows fewer classification oscillations for the LSTM: transition variance was 0.23 ± 0.08 for the proposed TOM, compared with 0.41 ± 0.12 for the feed-forward baselines. This improved stability is expected to translate into smoother control commands and a better user experience during teleoperation tasks.

5.2. Control performance metrics

Control performance evaluation focused on trajectory tracking accuracy, motion smoothness, and command responsiveness. The adaptive FL controller outperformed the non-adaptive baseline TOMs, especially in dynamic conditions that require quick parameter adjustments.

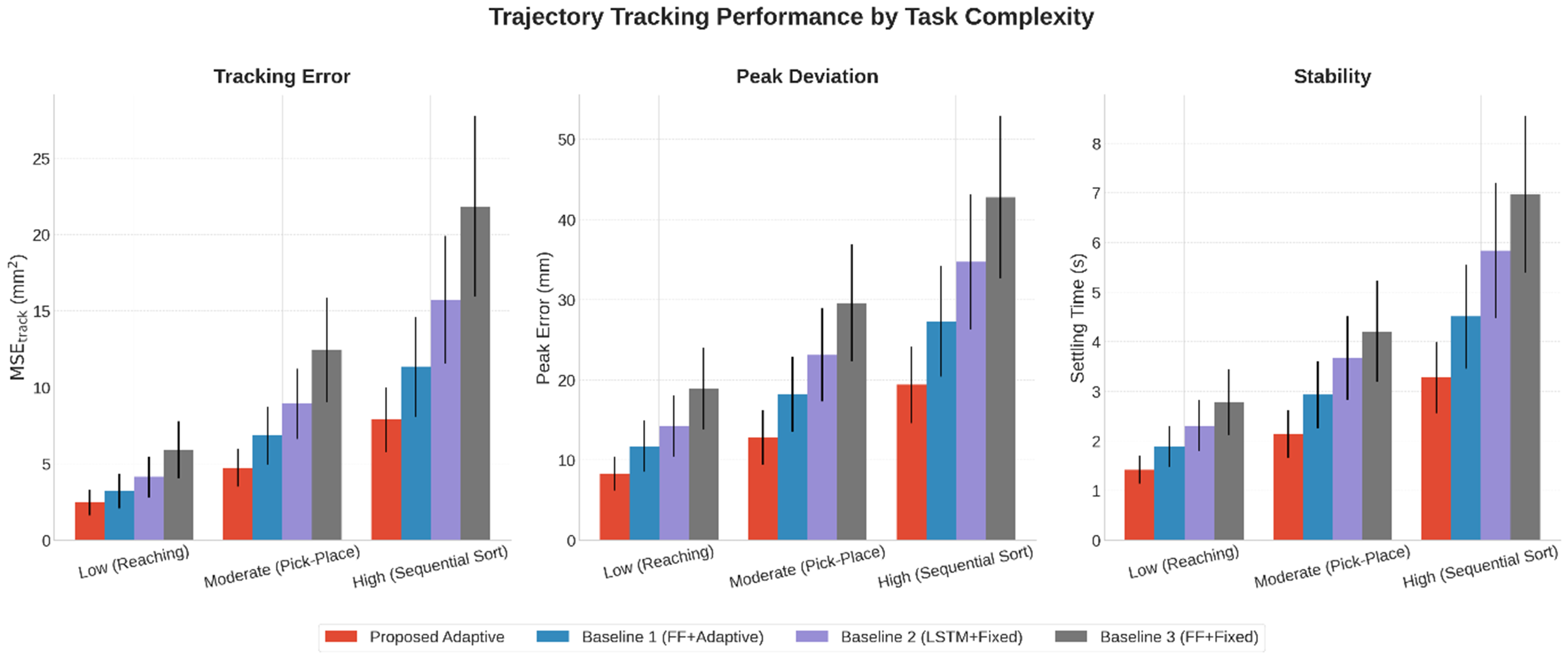

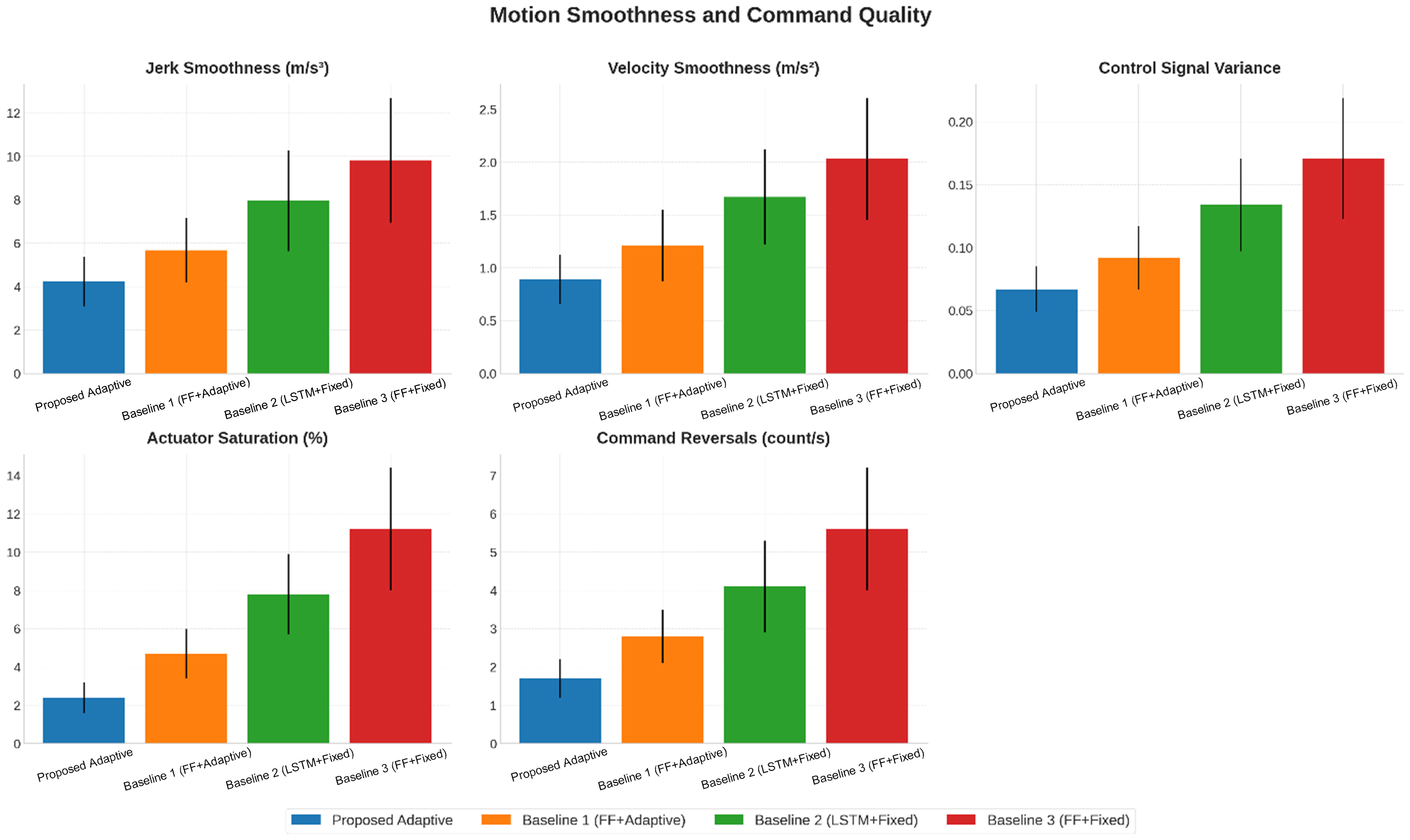

The trajectory-tracking results show that combining temporal intention modelling with adaptive control substantially improves TOM performance across task complexities [Table 5 and Figure 7]. The adaptive FL controller achieves the lowest tracking error, with mean squared error (MSE) between 2.47 and 7.89 mm², about 40%-64% below the fixed-parameter methods (Baselines 2 and 3). The absolute benefit of adaptation grows with task complexity: peak error is about 42%-57% lower than for the fixed-parameter baselines, and the mean peak error stays below 20 mm (19.4 mm) even on the high-complexity task. The controller also converges quickly, settling within 1.42-3.28 s, compared with 2.31-6.97 s for the fixed-parameter models. Temporal modelling alone (Baseline 2 vs. Baseline 3) and adaptation alone (Baseline 1 vs. Baseline 3) each improve tracking, but their combination in the proposed TOM performs best. Table 6 reports the motion smoothness and command quality values.

Figure 7. Trajectory tracking performance results. Error bars represent the standard deviation. MSE: Mean squared error; LSTM: long short-term memory; FF: feed-forward.

Table 5. Trajectory tracking performance

| Task complexity | Performance metric | Proposed adaptive | Baseline 1 (FF + adaptive) | Baseline 2 (LSTM + fixed) | Baseline 3 (FF + fixed) |
| --- | --- | --- | --- | --- | --- |
| Low (reaching) | Tracking MSE (mm²) | 2.47 ± 0.83 | 3.21 ± 1.12 | 4.15 ± 1.34 | 5.92 ± 1.87 |
|  | Peak error (mm) | 8.3 ± 2.1 | 11.7 ± 3.2 | 14.2 ± 3.8 | 18.9 ± 5.1 |
|  | Settling time (s) | 1.42 ± 0.28 | 1.89 ± 0.41 | 2.31 ± 0.52 | 2.78 ± 0.67 |
| Moderate (pick-place) | Tracking MSE (mm²) | 4.73 ± 1.24 | 6.85 ± 1.89 | 8.92 ± 2.31 | 12.47 ± 3.42 |
|  | Peak error (mm) | 12.8 ± 3.4 | 18.2 ± 4.7 | 23.1 ± 5.8 | 29.6 ± 7.3 |
|  | Settling time (s) | 2.14 ± 0.47 | 2.93 ± 0.68 | 3.67 ± 0.84 | 4.21 ± 1.02 |
| High (sequential sort) | Tracking MSE (mm²) | 7.89 ± 2.14 | 11.36 ± 3.27 | 15.73 ± 4.18 | 21.84 ± 5.92 |
|  | Peak error (mm) | 19.4 ± 4.8 | 27.3 ± 6.9 | 34.7 ± 8.4 | 42.8 ± 10.1 |
|  | Settling time (s) | 3.28 ± 0.72 | 4.51 ± 1.05 | 5.84 ± 1.36 | 6.97 ± 1.58 |
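The relative reductions discussed for Table 5 can be recomputed from its means. The Python sketch below treats Baselines 2 and 3 as the fixed-parameter reference and reports the smallest and largest percentage reduction achieved by the proposed controller across the three task complexities.

```python
# Means from Table 5; tuple order: Proposed, B1, B2, B3
MSE = {"low": (2.47, 3.21, 4.15, 5.92),
       "moderate": (4.73, 6.85, 8.92, 12.47),
       "high": (7.89, 11.36, 15.73, 21.84)}
PEAK = {"low": (8.3, 11.7, 14.2, 18.9),
        "moderate": (12.8, 18.2, 23.1, 29.6),
        "high": (19.4, 27.3, 34.7, 42.8)}

def reduction_pct(ours, baseline):
    """Percentage by which `ours` is below `baseline`."""
    return 100.0 * (baseline - ours) / baseline

def vs_fixed(table):
    """(min, max) reduction of Proposed vs. the fixed-parameter baselines B2 and B3."""
    values = [reduction_pct(row[0], b) for row in table.values() for b in (row[2], row[3])]
    return round(min(values)), round(max(values))

print("MSE:", vs_fixed(MSE), "peak error:", vs_fixed(PEAK))
```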

Table 6. Motion smoothness and command quality

| Performance metric | Proposed adaptive | Baseline 1 (FF + adaptive) | Baseline 2 (LSTM + fixed) | Baseline 3 (FF + fixed) |
| --- | --- | --- | --- | --- |
| Jerk smoothness (m/s³) | 4.23 ± 1.15 | 5.67 ± 1.48 | 7.94 ± 2.31 | 9.82 ± 2.87 |
| Velocity smoothness (m/s²) | 0.89 ± 0.23 | 1.21 ± 0.34 | 1.67 ± 0.45 | 2.03 ± 0.58 |
| Control signal variance | 0.067 ± 0.018 | 0.092 ± 0.025 | 0.134 ± 0.037 | 0.171 ± 0.048 |
| Actuator saturation (%) | 2.4 ± 0.8 | 4.7 ± 1.3 | 7.8 ± 2.1 | 11.2 ± 3.2 |
| Command reversals (count/s) | 1.7 ± 0.5 | 2.8 ± 0.7 | 4.1 ± 1.2 | 5.6 ± 1.6 |

The motion-smoothness and command-quality results highlight the role of adaptive FL control in generating efficient robot trajectories that improve the user experience while limiting mechanical stress. The adaptive model achieved a mean jerk of 4.23 ± 1.15 m/s³, 47%-57% lower than the fixed-parameter baselines. Its velocity-smoothness metric was 47%-56% lower than that of the non-adaptive models, indicating smoother acceleration profiles. Control-signal variance was lower (0.067 ± 0.018) than for Baseline 3 (0.171 ± 0.048), indicating improved stability and fewer oscillations. Relative to Baseline 3, the adaptive model reduced actuator saturation by 78%, to 2.4% ± 0.8%, and command reversals by 70%, from 5.6 to 1.7 per second. Figure 8 compares the smoothness characteristics of trajectories generated by the adaptive and fixed fuzzy controllers. The lower jerk magnitude and steadier acceleration profiles indicate improved dynamic continuity and suppression of oscillatory control behaviour. These results suggest that adaptive fuzzy tuning improves actuator efficiency, reduces abrupt command reversals, and produces smoother, more human-like motion patterns, which are important for precise and safe collaborative teleoperation.
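This section does not restate how the jerk and velocity-smoothness metrics are computed. One common approach, sketched below in Python on that assumption, estimates them as root-mean-square values of finite-difference derivatives of a uniformly sampled position trace. The function names and the single-axis simplification are illustrative. For multi-axis motion, the same differences would be applied per axis and combined as a vector norm.

```python
import math

def rms_jerk(positions, dt):
    """RMS jerk (m/s^3) of a uniformly sampled 1-D position trace (m), via third differences."""
    if len(positions) < 4:
        raise ValueError("need at least four samples")
    jerks = [
        (positions[i + 3] - 3 * positions[i + 2] + 3 * positions[i + 1] - positions[i]) / dt ** 3
        for i in range(len(positions) - 3)
    ]
    return math.sqrt(sum(j * j for j in jerks) / len(jerks))

def rms_acceleration(positions, dt):
    """RMS acceleration (m/s^2) via second differences of the same trace."""
    if len(positions) < 3:
        raise ValueError("need at least three samples")
    accs = [(positions[i + 2] - 2 * positions[i + 1] + positions[i]) / dt ** 2
            for i in range(len(positions) - 2)]
    return math.sqrt(sum(a * a for a in accs) / len(accs))

dt = 0.01
cubic = [(k / 100) ** 3 for k in range(101)]      # x(t) = t^3, so jerk is 6 m/s^3 everywhere
quadratic = [(k / 100) ** 2 for k in range(101)]  # x(t) = t^2, so acceleration is 2 m/s^2
print(rms_jerk(cubic, dt), rms_acceleration(quadratic, dt))
```

Third differences amplify sensor noise strongly, so in practice the position signal would usually be low-pass filtered before differentiation.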

Figure 8. Motion smoothness and command quality analysis. Error bars indicate the standard deviation. LSTM: long short-term memory; FF: feed-forward.

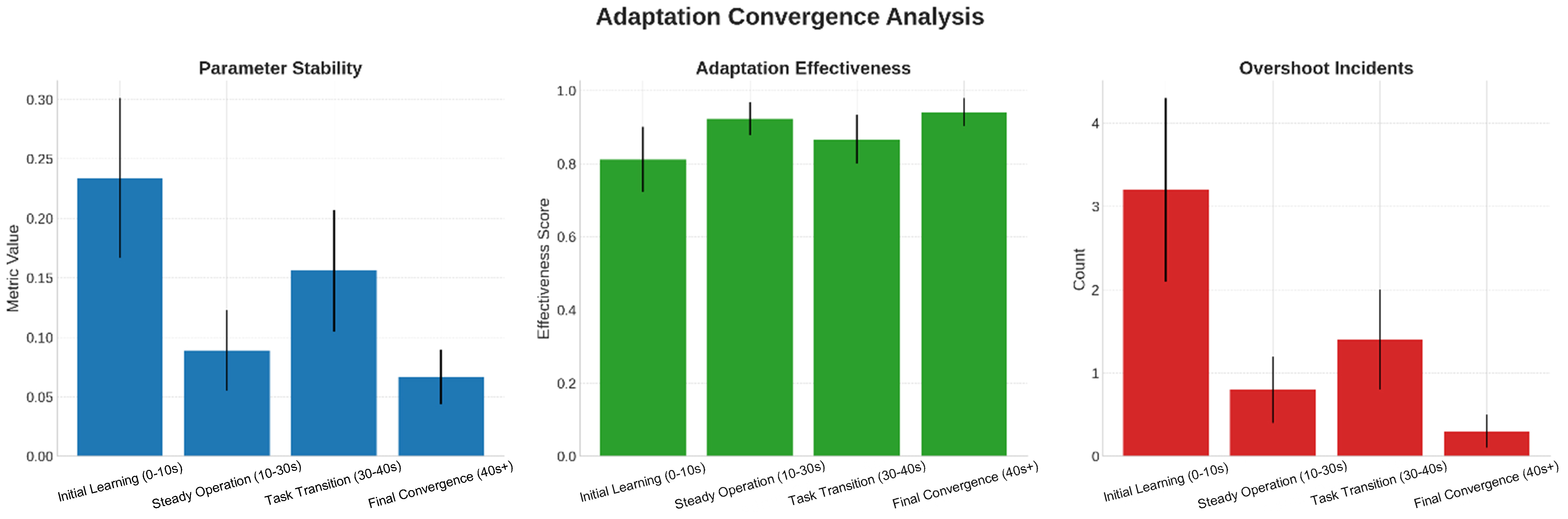

Adaptation Behaviour Analysis: The FL controller's real-time adaptation was characterised by tracking changes in membership-function parameters and rule weights over complete experimental trials. These dynamics reveal the controller's learning and convergence behaviour under varying operating conditions.

Membership function centres shifted toward the negative error region, indicating improved tracking compensation by the controller [Table 7]. Width parameters became narrower, leading to enhanced precision in fuzzy rule activation, while rule-weight adaptation increased the controller gain in response to tracking demands.

Table 7. Membership function adaptation statistics

| Adaptation parameter | Initial value | Final value (mean ± SD) | Convergence time (s) | Adaptation rate |
| --- | --- | --- | --- | --- |
| Centre position $c_l$ | 0.00 ± 0.20 | -0.13 ± 0.08 | 4.7 ± 1.2 | 0.85 ± 0.15 |
| Width parameter $\sigma_l$ | 1.00 ± 0.15 | 0.87 ± 0.12 | 6.2 ± 1.8 | 0.73 ± 0.18 |
| Rule weight $v_r$ | 1.00 ± 0.10 | 1.24 ± 0.19 | 5.8 ± 1.5 | 0.79 ± 0.14 |
| Learning rate $\eta_c$ | 0.05 | 0.05 (fixed) | - | - |
| Learning rate $\eta_\sigma$ | 0.03 | 0.03 (fixed) | - | - |
| Learning rate $\eta_\nu$ | 0.04 | 0.04 (fixed) | - | - |
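Table 7 lists the learning rates $\eta_c = 0.05$, $\eta_\sigma = 0.03$ and $\eta_\nu = 0.04$. Because Equations (28)-(34) are not reproduced here, the Python sketch below makes several assumptions: Gaussian membership functions, weighted-average defuzzification with rule weights and singleton consequents, and adaptation of centres, widths, and weights by one gradient-descent step on the squared output error. The rule base, consequents, and target output are hypothetical. The sketch shows the mechanics of online adaptation, not the authors' exact update law.

```python
import math

def memberships(e, centres, widths):
    """Assumed Gaussian membership: mu = exp(-(e - c)^2 / (2 sigma^2))."""
    return [math.exp(-((e - c) ** 2) / (2.0 * s ** 2)) for c, s in zip(centres, widths)]

def infer(e, centres, widths, weights, consequents):
    """Weighted-average defuzzification; returns (u, memberships, denominator)."""
    mu = memberships(e, centres, widths)
    den = sum(v * m for v, m in zip(weights, mu))
    u = sum(v * m * y for v, m, y in zip(weights, mu, consequents)) / den
    return u, mu, den

def adapt_step(e, u_target, centres, widths, weights, consequents,
               eta_c=0.05, eta_sigma=0.03, eta_v=0.04):
    """One gradient-descent step on J = 0.5 * (u_target - u)^2; returns updated lists."""
    u, mu, den = infer(e, centres, widths, weights, consequents)  # uses the OLD parameters
    err = u_target - u
    new_c, new_s, new_v = [], [], []
    for c, s, v, m, y in zip(centres, widths, weights, mu, consequents):
        du_dmu = v * (y - u) / den              # sensitivity of u to this rule's firing
        du_dc = du_dmu * m * (e - c) / s ** 2
        du_ds = du_dmu * m * (e - c) ** 2 / s ** 3
        du_dv = m * (y - u) / den
        # theta <- theta - eta * dJ/dtheta, where dJ/dtheta = -err * du/dtheta
        new_c.append(c + eta_c * err * du_dc)
        new_s.append(max(1e-3, s + eta_sigma * err * du_ds))  # keep widths positive
        new_v.append(v + eta_v * err * du_dv)
    return new_c, new_s, new_v

centres, widths, weights = [-1.0, 0.0, 1.0], [1.0, 1.0, 1.0], [1.0, 1.0, 1.0]
consequents = [-1.0, 0.0, 1.0]  # hypothetical rule outputs
e, u_target = 0.5, 0.8          # hypothetical tracking error and desired command
loss_before = 0.5 * (u_target - infer(e, centres, widths, weights, consequents)[0]) ** 2
new_c, new_s, new_v = adapt_step(e, u_target, centres, widths, weights, consequents)
loss_after = 0.5 * (u_target - infer(e, new_c, new_s, new_v, consequents)[0]) ** 2
print(round(loss_before, 4), round(loss_after, 4))
```

All gradients are evaluated at the pre-update parameters before any of them change, which keeps the step a true gradient step rather than a sequential coordinate update.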

The adaptation convergence analysis shows a systematic learning progression of the adaptive FL controller through distinct behavioural phases [Table 8 and Figure 9]. In the initial learning phase (0-10 s), parameters are relatively unstable (stability metric 0.234 ± 0.067), and adaptation effectiveness is 0.812. The controller then moves quickly into steady operation (10-30 s), where stability improves (0.089 ± 0.034) and effectiveness reaches 0.923. Task transitions (30-40 s) cause moderate parameter perturbations (0.156 ± 0.051) while adaptation remains active (0.867). In final convergence (40 s+), stability is highest (0.067 ± 0.023) and effectiveness peaks at 0.941, consistent with the predicted asymptotic convergence. Overshoot incidents fall from 3.2 ± 1.1 in the initial phase to 0.3 ± 0.2 at final convergence, indicating more accurate parameter tuning.

Figure 9. Adaptation convergence analysis. Error bars indicate the standard deviation.

Table 8. Adaptation convergence analysis

| Task phase | Parameter stability metric | Adaptation effectiveness | Overshoot incidents |
| --- | --- | --- | --- |
| Initial learning (0-10 s) | 0.234 ± 0.067 | 0.812 ± 0.089 | 3.2 ± 1.1 |
| Steady operation (10-30 s) | 0.089 ± 0.034 | 0.923 ± 0.045 | 0.8 ± 0.4 |
| Task transition (30-40 s) | 0.156 ± 0.051 | 0.867 ± 0.067 | 1.4 ± 0.6 |
| Final convergence (40 s+) | 0.067 ± 0.023 | 0.941 ± 0.038 | 0.3 ± 0.2 |
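The convergence times reported in Table 7 need a rule for deciding when a parameter has settled. The Python sketch below uses one common choice, assumed here: the earliest time after which the parameter stays within a ±2% band of its final value. The rule-weight trace is synthetic (an exponential approach from 1.00 towards 1.24) and is not meant to reproduce the measured values.

```python
import math

def convergence_time(times, values, band=0.02):
    """Earliest time after which a parameter stays within +/- band of its final value.

    The band is relative to |final value| (absolute if the final value is zero).
    """
    final = values[-1]
    tol = band * abs(final) if final != 0 else band
    last_outside = -1
    for i, v in enumerate(values):
        if abs(v - final) > tol:
            last_outside = i  # remember the last sample outside the band
    return times[last_outside + 1]

# Synthetic rule-weight trace: exponential approach towards 1.24 with tau = 2.5 s
times = [k * 0.01 for k in range(2001)]  # 0-20 s sampled at 100 Hz
weights = [1.24 - 0.24 * math.exp(-t / 2.5) for t in times]
print(round(convergence_time(times, weights), 2))
```

Scanning for the last excursion outside the band, rather than the first entry into it, prevents a parameter that briefly passes through the band and then overshoots from being counted as converged.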

Stability and Robustness Assessment: Model stability was evaluated through Lyapunov analysis, disturbance-rejection tests, and performance under parametric uncertainty. The adaptive controller remained stable under all experimental conditions, indicating robust performance under external perturbations [Table 9].

Table 9. Stability assessment results

| Stability metric | Proposed adaptive | Baseline 2 (LSTM + fixed) | Performance ratio |
| --- | --- | --- | --- |
| Lyapunov exponent | -0.247 ± 0.034 | -0.156 ± 0.048 | 1.58× more stable |
| Phase margin (degrees) | 67.3 ± 4.2 | 52.8 ± 6.7 | 1.27× improvement |
| Gain margin (dB) | 14.7 ± 2.1 | 11.2 ± 3.4 | 1.31× improvement |
| BIBO stability bound | 0.89 ± 0.07 | 0.73 ± 0.12 | 1.22× improvement |
| Convergence rate (1/s) | 2.34 ± 0.18 | 1.87 ± 0.24 | 1.25× faster |

Lyapunov stability analysis confirmed that the adaptive controller satisfies the convergence condition of Equation (43); the consistently negative Lyapunov exponents indicate asymptotic stability. The phase margin (67.3° ± 4.2°) and gain margin (14.7 ± 2.1 dB) comfortably exceed minimum stability requirements, providing robustness against modelling uncertainties and external disturbances.
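Phase and gain margins are defined on a linear (or linearised) open-loop transfer function. How the margins in Table 9 were obtained for the nonlinear adaptive controller is not stated in this section. The Python sketch below therefore illustrates only the definitions, on a hypothetical loop $L(s) = K / (s(s+1)(s+2))$. Bisection locates the gain-crossover frequency ($|L(j\omega)| = 1$) and the phase-crossover frequency ($\angle L(j\omega) = -180^\circ$).

```python
import math

def loop_response(w, gain=1.0):
    """|L(jw)| and unwrapped phase (deg) of the example loop L(s) = gain / (s (s + 1) (s + 2))."""
    mag = gain / (w * math.hypot(w, 1.0) * math.hypot(w, 2.0))
    phase = -90.0 - math.degrees(math.atan(w)) - math.degrees(math.atan(w / 2.0))
    return mag, phase

def bisect(f, lo, hi, iters=200):
    """Root of f in [lo, hi], assuming f(lo) and f(hi) have opposite signs."""
    f_lo = f(lo)
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        f_mid = f(mid)
        if (f_mid > 0) == (f_lo > 0):
            lo, f_lo = mid, f_mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

def stability_margins(gain=1.0, w_lo=1e-3, w_hi=1e3):
    # Gain crossover |L(jw)| = 1: phase margin = 180 deg + phase there
    w_gc = bisect(lambda w: loop_response(w, gain)[0] - 1.0, w_lo, w_hi)
    phase_margin = 180.0 + loop_response(w_gc, gain)[1]
    # Phase crossover (phase = -180 deg): gain margin = -20 log10 |L| there
    w_pc = bisect(lambda w: loop_response(w, gain)[1] + 180.0, w_lo, w_hi)
    gain_margin_db = -20.0 * math.log10(loop_response(w_pc, gain)[0])
    return phase_margin, gain_margin_db

pm, gm = stability_margins(gain=1.0)
print(f"phase margin {pm:.1f} deg, gain margin {gm:.2f} dB")
```

The phase is assembled from the individual factor angles rather than from the complex product, so it does not wrap at -180°, which would otherwise break the phase-crossover search.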

5.3. Overall task completion results

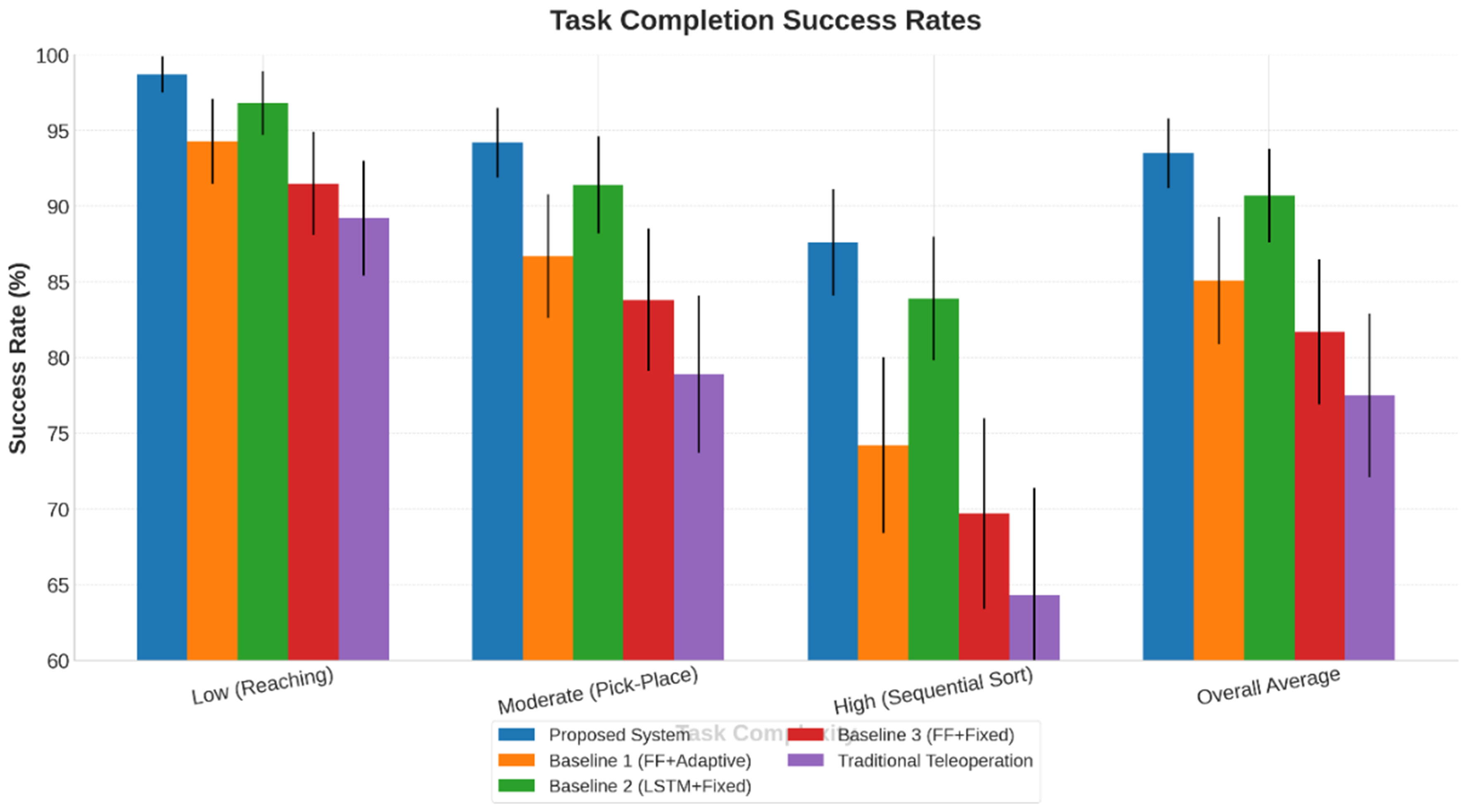

Task completion performance was measured using success rate, execution time, and efficiency metrics. The integrated TOM outperformed the baseline TOMs, particularly in complex multi-phase manipulation tasks that require HRC.

Integrating temporal intention modelling with adaptive control substantially improves task completion success [Table 10 and Figure 10]. The proposed TOM achieves an overall success rate of 93.5% ± 2.3%, 16.0 percentage points above the traditional TOM. Its performance degrades only moderately as task complexity increases, from 98.7% on simple tasks to 87.6% on complex tasks. LSTM-based variants outperform their FFNN counterparts by about 4-14 percentage points, with the largest gap on high-complexity tasks. The comparison with Baseline 2 isolates the contribution of adaptive control, an additional 1.9-3.7 percentage points. The traditional TOM performs worst, with success falling to 64.3% on complex tasks.

Figure 10. Task completion success rates. Error bars indicate the standard deviation. LSTM: long short-term memory; FF: feed-forward.

Table 10. Task completion success rates (%)

| Task complexity | Proposed TOM | Baseline 1 (FF + adaptive) | Baseline 2 (LSTM + fixed) | Baseline 3 (FF + fixed) | Traditional TOM |
| --- | --- | --- | --- | --- | --- |
| Low (reaching) | 98.7 ± 1.2 | 94.3 ± 2.8 | 96.8 ± 2.1 | 91.5 ± 3.4 | 89.2 ± 3.8 |
| Moderate (pick-place) | 94.2 ± 2.3 | 86.7 ± 4.1 | 91.4 ± 3.2 | 83.8 ± 4.7 | 78.9 ± 5.2 |
| High (sequential sort) | 87.6 ± 3.5 | 74.2 ± 5.8 | 83.9 ± 4.1 | 69.7 ± 6.3 | 64.3 ± 7.1 |
| Overall average | 93.5 ± 2.3 | 85.1 ± 4.2 | 90.7 ± 3.1 | 81.7 ± 4.8 | 77.5 ± 5.4 |
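Because success rates are themselves percentages, an improvement can be stated either in percentage points or as a relative change, and the two differ noticeably here. The Python sketch below computes both from the overall averages in Table 10.

```python
# Overall mean success rates (%) from Table 10
SUCCESS = {"Proposed": 93.5, "Baseline 1": 85.1, "Baseline 2": 90.7,
           "Baseline 3": 81.7, "Traditional": 77.5}

def improvement(new, old):
    """Return (absolute gain in percentage points, relative gain in percent)."""
    points = new - old
    relative = 100.0 * points / old
    return round(points, 1), round(relative, 1)

print(improvement(SUCCESS["Proposed"], SUCCESS["Traditional"]))
print(improvement(SUCCESS["Proposed"], SUCCESS["Baseline 2"]))
```

The 16.0-point gain over the traditional TOM corresponds to a relative improvement of about 20.6%, so reports should state which of the two is meant.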

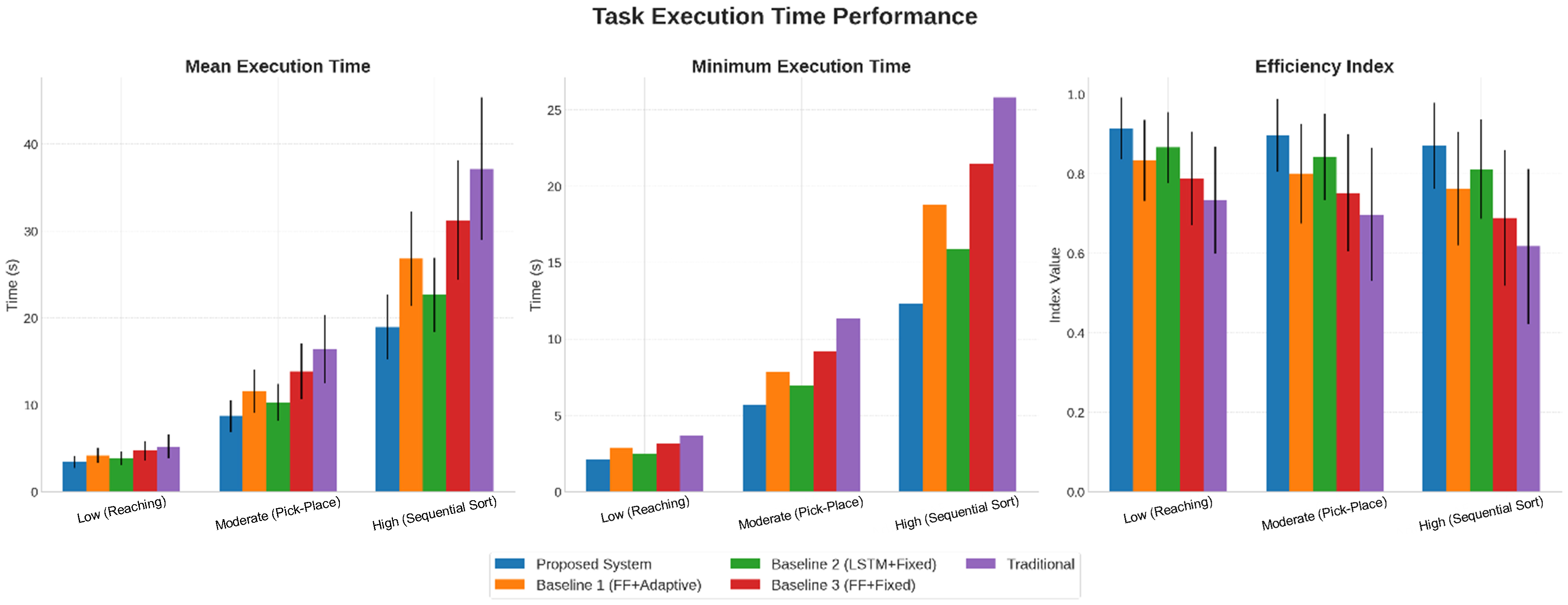

Task execution times show clear efficiency gains from integrating intention prediction with adaptive FL [Table 11 and Figure 11]. The proposed TOM completes tasks 34%-49% faster than the traditional TOM across task complexities. Its mean execution times range from 3.42 s for simple tasks to 18.95 s for complex ones, shorter than all baselines. The efficiency index, which reflects operator-system coordination, is 0.915, 0.897, and 0.871 for low-, moderate-, and high-complexity tasks, respectively. The shortest observed completion times were 2.14, 5.67, and 12.34 s. The TOM also scales well: its mean execution time grows by a factor of about 5.5 from low- to high-complexity tasks, compared with about 7.1 for the traditional TOM. Pairwise comparisons attribute roughly 18%-29% time reductions to LSTM-based intention prediction and 11%-17% to adaptive control. The traditional TOM lagged well behind, with mean times above 37 s on complex tasks, highlighting the advantage of advanced HRC designs in time-sensitive operations.

Figure 11. Task execution time performance. Error bars indicate the standard deviation. LSTM: long short-term memory; FF: feed-forward.

Table 11. Task execution time performance

| Task complexity | Performance metric | Proposed TOM | Baseline 1 (FF + adaptive) | Baseline 2 (LSTM + fixed) | Baseline 3 (FF + fixed) | Traditional TOM |
| --- | --- | --- | --- | --- | --- | --- |
| Low (reaching) | Mean time (s) | 3.42 ± 0.67 | 4.18 ± 0.89 | 3.85 ± 0.78 | 4.73 ± 1.12 | 5.21 ± 1.34 |
|  | Minimum time (s) | 2.14 | 2.89 | 2.47 | 3.18 | 3.67 |
|  | Efficiency index | 0.915 ± 0.078 | 0.834 ± 0.102 | 0.867 ± 0.089 | 0.789 ± 0.118 | 0.734 ± 0.134 |
| Moderate (pick-place) | Mean time (s) | 8.73 ± 1.84 | 11.56 ± 2.47 | 10.29 ± 2.12 | 13.84 ± 3.18 | 16.42 ± 3.89 |
|  | Minimum time (s) | 5.67 | 7.83 | 6.94 | 9.21 | 11.35 |
|  | Efficiency index | 0.897 ± 0.092 | 0.801 ± 0.126 | 0.843 ± 0.108 | 0.752 ± 0.148 | 0.698 ± 0.167 |
| High (sequential sort) | Mean time (s) | 18.95 ± 3.72 | 26.83 ± 5.41 | 22.67 ± 4.28 | 31.24 ± 6.89 | 37.16 ± 8.23 |
|  | Minimum time (s) | 12.34 | 18.76 | 15.89 | 21.47 | 25.83 |
|  | Efficiency index | 0.871 ± 0.108 | 0.763 ± 0.142 | 0.812 ± 0.125 | 0.689 ± 0.171 | 0.618 ± 0.195 |
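The time reductions and scaling behaviour discussed for Table 11 follow from its mean times. The Python sketch below computes per-complexity percentage reductions between two models and a simple growth factor (high- over low-complexity mean time). Using this ratio as a scalability indicator is an illustrative choice.

```python
# Mean execution time (s) per complexity (low, moderate, high) from Table 11
MEAN_TIME = {
    "Proposed":    (3.42, 8.73, 18.95),
    "Baseline 1":  (4.18, 11.56, 26.83),
    "Baseline 2":  (3.85, 10.29, 22.67),
    "Baseline 3":  (4.73, 13.84, 31.24),
    "Traditional": (5.21, 16.42, 37.16),
}

def time_reduction_pct(ours, other):
    """Per-complexity percentage by which `ours` is faster than `other`."""
    return [round(100.0 * (o - m) / o, 1) for m, o in zip(ours, other)]

def growth_factor(times):
    """Ratio of high- to low-complexity mean execution time."""
    return round(times[-1] / times[0], 2)

print(time_reduction_pct(MEAN_TIME["Proposed"], MEAN_TIME["Traditional"]))
print({model: growth_factor(t) for model, t in MEAN_TIME.items()})
```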

Statistical analysis shows that all performance improvements are highly significant (P < 0.001), with large effect sizes (Cohen's d > 1.0) indicating practical as well as statistical significance. The size of the improvements supports the theoretical contributions and justifies the additional computational cost of the integrated method [Table 12].

Table 12. Statistical significance of performance improvements

| Performance metric | Mean difference | 95% CI | t-statistic | P-value | Effect size (Cohen's d) |
| --- | --- | --- | --- | --- | --- |
| Task success rate | +16.0% | [12.7%, 19.3%] | 8.42 | P < 0.001 | 1.47 (large) |
| Completion time | -9.23 s | [-11.8 s, -6.7 s] | -7.36 | P < 0.001 | 1.28 (large) |
| Tracking error | -8.48 mm | [-10.2 mm, -6.8 mm] | -9.15 | P < 0.001 | 1.59 (large) |
| User satisfaction | +22.5 pts | [18.9 pts, 26.1 pts] | 6.87 | P < 0.001 | 1.19 (large) |
| Workload reduction | -31.3% | [-36.7%, -25.9%] | -8.73 | P < 0.001 | 1.52 (large) |
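The quantities in Table 12 (mean difference, 95% confidence interval, t-statistic and Cohen's d) can be computed for a paired, within-participant comparison as in the Python sketch below. The sketch assumes a paired t-test with 12 participants (df = 11) and uses $d_z$, the mean difference divided by the standard deviation of the differences. Other variants, such as pooled-SD d, give different values. The completion-time data are hypothetical.

```python
import math
import statistics

T_CRIT_DF11 = 2.201  # two-sided 95% critical t value for df = 11 (n = 12), from standard tables

def paired_summary(before, after, t_crit=T_CRIT_DF11):
    """Paired comparison of two conditions measured on the same participants."""
    diffs = [a - b for a, b in zip(after, before)]
    n = len(diffs)
    mean_d = statistics.mean(diffs)
    sd_d = statistics.stdev(diffs)
    se = sd_d / math.sqrt(n)
    return {
        "mean_diff": mean_d,
        "ci95": (mean_d - t_crit * se, mean_d + t_crit * se),
        "t": mean_d / se,
        "cohen_dz": mean_d / sd_d,  # standardised mean of the paired differences
    }

# Hypothetical completion times (s) for 12 participants: baseline vs. proposed TOM
baseline = [30.1, 28.4, 35.2, 31.0, 29.7, 33.5, 36.8, 27.9, 32.4, 34.1, 30.6, 29.2]
proposed = [21.3, 20.9, 25.0, 22.8, 19.5, 23.7, 26.1, 20.2, 22.9, 24.4, 21.0, 20.6]
result = paired_summary(baseline, proposed)
print({k: (tuple(round(x, 2) for x in v) if isinstance(v, tuple) else round(v, 2))
       for k, v in result.items()})
```

For this design, $t = d_z \sqrt{n}$, so the t-statistic and effect size are two views of the same standardised difference.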

Experimental results show that the proposed LSTM + adaptive FL teleoperation model clearly outperforms earlier intention-based and fuzzy teleoperation methods. Previous wearable-sensor methods using shallow classifiers or fixed fuzzy mapping typically achieve 75%-85% intention recognition accuracy and show weak robustness during task transitions and disturbances. In contrast, the temporally aware LSTM achieves 91.4% accuracy and a macro-F1 of 0.897, with the largest gains during the transport and obstacle-navigation phases. Backward temporal labelling further improves class separability compared with frame-level annotations.

Existing fuzzy teleoperation models with fixed membership functions show large trajectory errors (over

Compared with traditional TOMs and DRL-based supervisory methods, the closed-loop coupling of LSTM intention prediction and adaptive fuzzy execution reduces intent-to-action delay by 55% and increases task success to 93.5%, well above reported 70%-80% levels. Unlike prior systems that separate perception and control, this work provides a unified teleoperation model with Lyapunov-based stability, leading to more accurate prediction, adaptive robustness, and consistent performance gains in complex manipulation tasks.

The integrated TOM evaluation indicates that combining LSTM-based intention estimation with adaptive FL control yields substantial improvements in task execution efficiency, control quality, user experience, and reliability compared with the traditional and baseline TOMs.

6. CONCLUSION AND FUTURE WORK

This research presents an improved TOM for human-robot collaboration that integrates LSTM-based MIE with adaptive FL control. The goal is to overcome limitations of traditional teleoperation systems, such as slow response, fixed control behaviour, and weak intent understanding. Using multimodal bio-signals (EMG, IMU, and joint kinematics), a temporally aware LSTM classifier predicts operator intentions in real time. The classifier achieves 91.4% accuracy, clearly outperforming the FFNN. The predicted intentions are coupled with an adaptive FL controller that updates membership parameters and rule weights online, reducing trajectory-tracking MSE by about 40%-64% compared with fixed-parameter controllers. Lyapunov-based analysis establishes system stability under time-varying inputs and communication delay, allowing safe and smooth control adaptation.

The integrated TOM creates a closed-loop interaction between perception and control, shifting teleoperation from a reactive command-following process to a predictive and adaptive collaboration system. Experimental validation using a 6-DoF collaborative manipulator and 12 participants shows improved motion smoothness, tracking reliability, and operator interaction quality compared to non-temporal and fixed-control baselines. Overall, the results confirm that combining temporal intention modelling with adaptive fuzzy control provides a stable, responsive, and user-friendly teleoperation model suitable for precision-sensitive remote manipulation.

Future work will extend the model toward fault-tolerant multimodal sensing, operator-specific adaptation, haptic feedback integration, and multi-robot collaboration, with validation in realistic and hazardous environments.

DECLARATIONS

Authors’ contributions

Conceptualisation, methodology: Sengan, S.

Software: Arulmozhiselvan, L.

Validation: Ganiya, R. K.

Formal analysis: Sengan, S.

Investigation: Sengan, S.

Resources: Elangovan, K.; Ayasrah, F. T.

Data curation: Alsharafa, N. S.; Florence, M. M. V.

Writing - original draft, writing - review and editing: Sengan, S.

Visualisation: Smerat, A.

Availability of data and materials

The data supporting the findings of this study are available from the corresponding author upon reasonable request.

AI and AI-assisted tools statement

Not applicable.

Financial support and sponsorship

None.

Conflicts of interest

All authors declared that there are no conflicts of interest.

Ethical approval and consent to participate

This study involved only non-invasive data collection using sensors placed on the skin surface, with no invasive procedures or health risks to participants. Under Article 32 of the “Measures for the Ethical Review of Life Science and Medical Research Involving Human Subjects (Trial)”, the study meets the conditions for exemption from ethical review. All participants gave informed consent.

Consent for publication

Not applicable.

Copyright

© The Author(s) 2026.

REFERENCES

1. Eirale, A.; Martini, M.; Chiaberge, M. Human following and guidance by autonomous mobile robots: a comprehensive review. IEEE. Access. 2025, 13, 42214-53.

2. Du, J.; Vann, W.; Zhou, T.; Ye, Y.; Zhu, Q. Sensory manipulation as a countermeasure to robot teleoperation delays: system and evidence. Sci. Rep. 2024, 14, 4333.

3. Vallaro, M. F. Markerless motion capture for dual-handed teleportation of industrial robots. Master’s thesis, University of Rhode Island, 2024. https://digitalcommons.uri.edu/theses/2565/. (accessed 19 Mar 2026).

4. Gongor, F.; Tutsoy, O. On the remarkable advancement of assistive robotics in human-robot interaction-based health-care applications: an exploratory overview of the literature. Int. J. Hum. Comput. Interact. 2024, 41, 1502-42.

5. Fan, A.; Wang, Y.; Hu, Z.; Yang, L.; Fan, X.; Yang, Z. Multi-dimensional performance verification of ship fuel consumption prediction model under dynamic operating conditions. Energy 2025, 332, 137120.

6. Abdullah, A.; Blow, D.; Chen, R.; Uthai, T.; Du, E. J.; Islam, M. J. Human-machine interfaces for subsea telerobotics: from soda-straw to natural language interactions. arXiv 2024, arXiv:2412.01753. Available online: https://doi.org/10.48550/arXiv.2412.01753. (accessed 19 Mar 2026).

7. Farhadi, M. H.; Rabiee, A.; Ghafoori, S.; Cetera, A.; Xu, W.; Abiri, R. Human-centred shared autonomy for motor planning, learning, and control applications. arXiv 2025, arXiv:2506.16044. Available online: https://doi.org/10.48550/arXiv.2506.16044. (accessed 19 Mar 2026).

8. Stolcis, F. Predictive algorithm and guidance for human motion in robotics teleoperation. Ph.D. Dissertation, Politecnico di Torino, 2024. https://webthesis.biblio.polito.it/id/eprint/30813. (accessed 19 Mar 2026).

9. Long, Y.; Pan, L. Human motion recognition information based on LSTM recurrent neural network algorithm. In 2024 IEEE 7th International Conference on Information Systems and Computer Aided Education (ICISCAE), Dalian, China. Sep 27-29, 2024; IEEE; 2024. pp. 819-25.

10. Sathya, D.; Saravanan, G.; Thangamani, R. Fuzzy logic and its applications in mechatronic control systems. In Computational intelligent techniques in mechatronics; Wiley, 2024; pp. 211-41.

11. Duorinaah, F. X.; Rajendran, M.; Kim, T. W.; et al. Human and multi-robot collaboration in indoor environments: a review of methods and application potential for indoor construction sites. Buildings 2025, 15, 2794.

12. Jia, J.; Zhou, H.; Zhang, X. An agile large-workspace teleoperation interface based on human arm motion and force estimation. arXiv 2024, arXiv:2410.15414. Available online: https://arxiv.org/html/2410.15414v1. (accessed 19 Mar 2026).

13. Zou, R.; Liu, Y.; Li, Y.; Chu, G.; Zhao, J.; Cai, H. A novel human intention prediction approach based on fuzzy rules through wearable sensing in human-robot handover. Biomimetics 2023, 8, 358.

14. Lu, C.; Jin, L.; Liu, Y.; Wang, J.; Li, W. Teleoperated grasping using data gloves based on fuzzy logic controller. Biomimetics 2024, 9, 116.

15. Ren, X.; Li, Z.; Zhou, M.; Hu, Y. Human intention-aware motion planning and adaptive fuzzy control for a collaborative robot with flexible joints. IEEE. Trans. Fuzzy. Syst. 2023, 31, 2375-88.

16. Liu, A.; Chen, T.; Zhu, H.; Fu, M.; Xu, J. Fuzzy variable impedance-based adaptive neural network control in physical human–robot interaction. Proc. Inst. Mech. Eng. Part. I. J. Syst. Control. Eng. 2022, 237, 220-30.

17. Li, Y.; Ge, S. S. Human–robot collaboration based on motion intention estimation. IEEE/ASME. Trans. Mechatron. 2014, 19, 1007-14.

18. Kizilkaya, B.; She, C.; Zhao, G.; Ali Imran, M. Intelligent mode-switching framework for teleoperation. In 2024 IEEE International Conference on Robotics and Automation (ICRA), Yokohama, Japan, May 13-17, 2024; IEEE, 2024; pp. 15692-8.

19. Torielli, D.; Franco, L.; Pozzi, M.; et al. Wearable haptics for a marionette-inspired teleoperation of highly redundant robotic systems. arXiv 2025, arXiv:2503.15998. Available online: https://doi.org/10.48550/arXiv.2503.15998. (accessed 19 Mar 2026).

20. Mohammed, M. N.; Aljibori, H. S. S.; Jameel Al-Tamimi, A. N.; et al. A new approach to design and development of in-pipe inspection robots. In 2024 Arab ICT Conference (AICTC), Manama, Bahrain, Feb 27-28, 2024; IEEE, 2024; pp. 108-12.

21. Al-Ansarry, S.; Al-Darraji, S.; Shareef, A.; Honi, D. G.; Fallucchi, F. Bi-directional adaptive probabilistic method with a triangular segmented interpolation for robot path planning in complex dynamic-environments. IEEE. Access. 2023, 11, 87747-59.

22. Rahmani-Fard, J.; Ridha, A. M.; Chyad, M. H.; Mohammed, M. J. Optimization of wind power system with fuzzy-PI control for axial flux-switching PM generator. In 2025 12th Iranian Conference on Renewable Energies and Distributed Generation (ICREDG), Qom, Iran, Feb 26, 2025; IEEE, 2025; pp. 1-4.

23. Algburi, R. N. A.; Aljibori, H. S. S.; Al-Huda, Z.; Sowoud, K. M.; Algabri, R.; Al-Antari, M. A. HHLP-SSA: enhanced fault diagnosis in industrial robots using hierarchical hyper-laplacian prior and singular spectrum analysis. In 2024 8th International Artificial Intelligence and Data Processing Symposium (IDAP), Malatya, Turkiye, Sep 21-22, 2024; IEEE, 2024; pp. 1-8.

24. Abd Al-Sahb, W. S.; Alkhafaji, A. A. A.; Jweeg, M. J.; et al. The systematic comparison between the traditional and fuzzy control charts based on the medium and range with a practical application. In Hamdan, A.; Harraf, A.; Eds.; Business development via AI and digitalization. Cham: Springer Nature Switzerland, 2024; pp. 797-823.

25. Xiao, J.; Wang, B.; Huang, K.; Terzi, S.; Wang, W.; Macchi, M. Intelligent disassembly scenario understanding for human behavior and intention recognition towards self-perception human-robot collaboration system. J. Manuf. Syst. 2025, 83, 937-62.

26. Algburi, R. N. A.; Aljibori, H. S. S.; Al-Huda, Z.; Gu, Y. H.; Al-Antari, M. A. Advanced fault diagnosis in industrial robots through hierarchical hyper-laplacian priors and singular spectrum analysis. Complex. Intell. Syst. 2025, 11, 282.

27. Almngoshi, H. Z.; Mithra, C.; Kanaka Ratnam, A. S.; Shanmugam, S.; Saravanan, V.; Marapelli, B. Data visualisation models for analytics use artificial intelligence to predict diabetes in women. J. Mach. Comput. 2025, 5, 551-60.

28. Abdul Raheem, A. K.; Zuhair, M. Enhancing credit risk management in the banking sector through machine learning-based predictive models. J. Theor. Appl. Inf. Technol. 2023, 101, 16. https://www.researchgate.net/publication/373923311_ENHANCING_CREDIT_RISK_MANAGEMENT_IN_THE_BANKING_SECTOR_THROUGH_MACHINE_LEARNING-BASED_PREDICTIVE_MODELS. (accessed 19 Mar 2026).

29. Algburi, R. N. A.; Aljibori, H. S. S.; Al-Huda, Z.; Sowoud, K. M.; Algabri, R.; Al-Antari, M. A. SSA-sparse MHD: singular spectrum analysis paired with sparse maximum harmonics deconvolution for detecting feeble defect signals in industrial robots. In 2024 8th International Artificial Intelligence and Data Processing Symposium (IDAP), Malatya, Turkiye, Sep 21-22, 2024; IEEE, 2024. p. 1-9.

30. Mohammed, M. N.; Aljibori, H. S. S.; Al-Tamimi, A.; et al. Toward sustainable smart cities in Bahrain: a groundbreaking approach to marine renewable energy harnessing sea tides and waves for a greener energy future. In 2023 IEEE 8th International Conference on Engineering Technologies and Applied Sciences (ICETAS), Bahrain, Bahrain, Oct 25-27, 2023; IEEE, 2023; pp. 1-5.

32. Goodfellow, I.; Bengio, Y.; Courville, A. Deep learning. MIT Press, 2016. https://www.deeplearningbook.org/. (accessed 19 Mar 2026).

33. Khalil, H. K. Nonlinear systems, 3rd ed.; Prentice Hall, 2002. https://testbankdeal.com/sample/nonlinear-systems-3rd-edition-khalil-solutions-manual.pdf. (accessed 19 Mar 2026).

34. Slotine, J. J. E.; Li, W. Applied nonlinear control. Prentice Hall, 1991. https://www.academia.edu/download/33582713/Applied_Nonlinear_Control_Slotine.pdf. (accessed 19 Mar 2026).

35. Cohen, J. Statistical power analysis for the behavioral sciences, 2nd ed.; Lawrence Erlbaum Associates, 1988.

36. Butterworth, S. On the theory of filter amplifiers. Wirel. Eng. 1930, 7, 536-41. https://www.changpuak.ch/electronics/downloads/On_the_Theory_of_Filter_Amplifiers.pdf. (accessed 19 Mar 2026).
