Applications of speech analysis in diseases’ assessment, prediction and diagnosis: a scoping review

Abstract

Background: Speech production is a coordinated physiological process and a vital digital biomarker for health assessment. Recent advances in artificial intelligence (AI), particularly in representation learning, have substantially expanded the application of speech analysis across diverse clinical domains.

Methods: This review was conducted in accordance with the Preferred Reporting Items for Systematic Reviews and Meta-Analyses Extension for Scoping Reviews (PRISMA-ScR). Five major bibliographic databases were systematically searched for studies published between 2015 and 2025. Eligible studies applied AI-driven speech analysis for clinical diagnosis or monitoring, while those lacking quantitative evaluation or sufficient methodological detail were excluded.

Results: A total of 124 studies were analyzed, covering neurological, psychiatric, and respiratory disorders. The field has transitioned from traditional machine learning with handcrafted features to deep learning and foundation models. Parkinson’s disease, Alzheimer’s disease, depression, and coronavirus disease 2019 (COVID-19) are the most frequently investigated conditions. The included studies were charted and synthesized to map disease coverage, methodological trends, and clinical application scenarios.

Conclusion: Speech analysis offers a non-invasive approach for early disease detection and remote monitoring in telemedicine. To support clinical translation, future research should prioritize model robustness and interpretability across diverse clinical populations.

Keywords

INTRODUCTION

Speech production constitutes a precisely coordinated physiological process. The larynx, vocal cords, oral cavity, nasal cavity, and other vocal organs, together with their subsystems, work in close coordination with respiratory muscle groups[1]. Functional abnormalities in any component directly manifest as alterations in speech patterns. Owing to this close coupling between speech production and physiological function, speech provides a unique, non-invasive, and information-rich window for disease assessment, prediction, and diagnosis. However, traditional speech assessment methods heavily rely on manual evaluation by specialized physicians. This approach is not only cumbersome and inefficient but also susceptible to subjective influences, leading to significant inter-observer variability and limited scalability in routine clinical practice.

In recent years, the rapid advancement of artificial intelligence (AI) technology has introduced new paradigms for disease assessment, prediction, and diagnosis[2]. As a result, speech has emerged as a highly promising digital health biomarker in the medical field. As an information-rich data source, speech possesses inherent advantages including ease of acquisition, non-invasiveness, and high information density, encompassing multi-dimensional features such as prosody, pitch, rhythm, and spectral characteristics. AI-driven analysis of speech signals enables sensitive and objective characterization of subtle acoustic changes that may reflect early disease manifestations, progression trajectories, or treatment responses. Speech characteristics such as loudness, pitch, speech rate, and pausing patterns are linked to emotional, cognitive, and pathological states[3]. These findings suggest that speech analysis supports not only diagnostic decision-making but also disease severity assessment and predictive modeling of health outcomes[4,5].

Driven by these methodological advances, speech-based approaches have been increasingly applied across a wide range of medical domains. In neurological disorders, speech analysis has been used for early screening, disease staging, and progression monitoring in Parkinson’s disease (PD) and Alzheimer’s disease (AD). In mental health, speech features have been explored for the assessment and prediction of depressive and anxiety disorders by modeling emotional tone, rhythm, and prosodic dynamics. In respiratory diseases, acoustic cues derived from speech, coughing, and breathing sounds have been investigated for disease screening and symptom monitoring in asthma and chronic obstructive pulmonary disease (COPD). Representative studies have demonstrated the feasibility and clinical relevance of these approaches across diverse disease contexts[6-8]. Collectively, these studies demonstrate that speech analysis has evolved beyond isolated diagnostic tasks to support longitudinal assessment, risk stratification, and outcome prediction across diverse clinical scenarios.

Despite these encouraging applications, existing research on speech-based disease analysis remains fragmented. Many studies focus on individual diseases, isolated feature representations, or specific modeling paradigms, while experimental settings, datasets, and evaluation protocols vary substantially. This fragmentation hampers cross-study comparability and limits the ability to derive unified insights into methodological robustness, clinical generalizability, and translational readiness across disease domains. As a result, there is a clear need for integrative syntheses that systematically connect speech features, AI methodologies, and clinical tasks across diseases.

From a technological perspective, AI methods for speech-based disease analysis have progressed from traditional machine learning (ML) to deep learning (DL), and more recently to foundation models. Traditional ML approaches, such as support vector machine (SVM)[9], random forest (RF)[10], and k-nearest neighbors (KNN)[11], rely on handcrafted acoustic features and demonstrate good interpretability on small-scale datasets[12,13]. However, manually engineered features often fail to adequately represent complex temporal dependencies and subtle pathological variations in real-world clinical speech analysis. This limitation is especially pronounced when analyzing spontaneous or continuous speech. DL models, including convolutional neural networks (CNNs)[14] and transformers[15], enable automated feature learning and have shown improved performance and robustness under noisy conditions[16]. Nevertheless, most DL approaches depend heavily on large labeled clinical datasets, which are difficult to obtain due to privacy constraints, annotation costs, and inter-patient heterogeneity. Recently, foundation models pretrained on large-scale unlabeled data [e.g., Generative Pre-trained Transformer (GPT)[17] and Bidirectional Encoder Representations from Transformers (BERT)[18]] have attracted increasing attention by enabling transferable representations, cross-task generalization, and data-efficient adaptation, thereby offering new opportunities for robust disease assessment and prediction in clinically constrained settings.

In parallel with these methodological developments, several review articles have summarized speech analysis research from different perspectives, including classical ML techniques, disease-specific applications, and early DL methods[19-24]. While these reviews provide valuable foundations, they typically focus on limited disease categories or earlier methodological stages. Recent breakthroughs since 2023, particularly those involving foundation-model-based pretraining (e.g., Whisper[25], wav2vec 2.0[26]) and cross-domain generalization, have not yet been systematically integrated. As summarized in Table 1, existing reviews vary substantially in disease coverage, time scope, and methodological focus, and few offer a unified synthesis that spans multiple disease categories while incorporating recent advances in foundation-model-based speech analysis. Accordingly, Table 1 provides a concise comparison between representative prior reviews and the present work in terms of disease scope, time scope, method scope, and primary focus, thereby clarifying the positioning and complementary contribution of this review.

Comparison between existing reviews and our review

| Reference | Disease scope | Time scope | Method scope | Focus |

| Idrisoglu et al., 2023[19] | Systematic conditions, nonlaryngeal aerodigestive disorders, and neurological disorders | 2012-2022 | ML | Cross-disease synthesis; machine learning focus |

| De Silva et al., 2025[20] | Neurological disorders | 2010-2022 | ML, DL | Clinical decision support; neurological focus |

| Hecker et al., 2022[21] | Neurological disorders | 2001-2021 | ML, DL | Acoustic features; traditional pipelines |

| Khaskhoussy and Ben Ayed, 2022[22] | Parkinson’s disease only | 2004-2022 | ML, DL | Early PD detection; speech features |

| Ding et al., 2024[23] | Alzheimer’s disease only | 2018-2023 | ML, DL | AD-focused analysis; datasets and challenges |

| Moell et al., 2025[24] | Speech disorders (cross-disease) | 2000-2023 | ML | Method taxonomy; speech classification |

| Our review | Neurological, psychiatric, and respiratory diseases | 2015-2025 | ML, DL, foundation models | Cross-disease synthesis; task-level perspective |

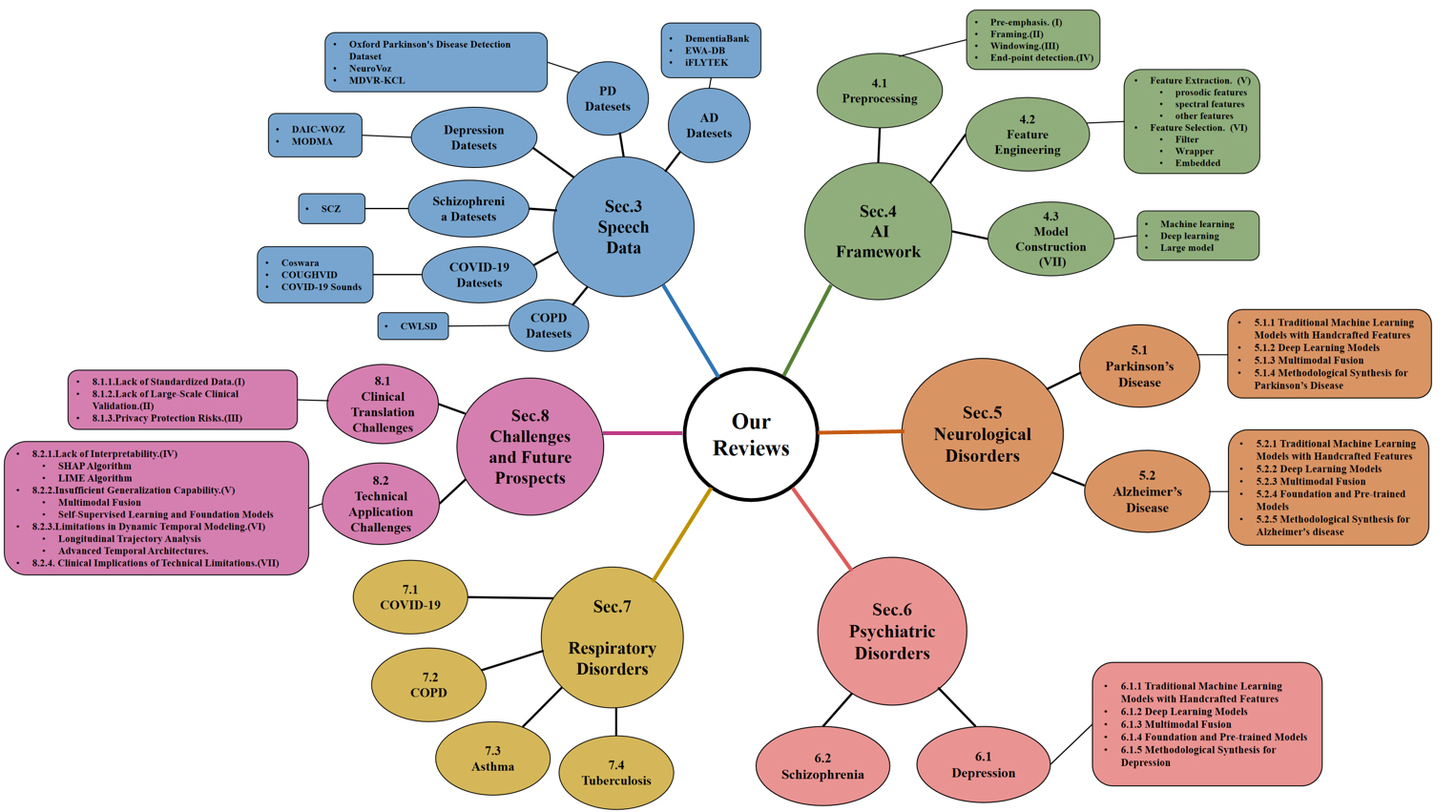

Motivated by the above gaps, this review aims to provide a comprehensive and up-to-date synthesis of speech analysis for disease assessment, prediction, and diagnosis. Our contributions are summarized as follows: (1) We synthesize AI-based speech analysis frameworks across major disease categories, highlighting the methodological evolution from handcrafted features to DL and foundation models. (2) We compare modeling strategies, datasets, and evaluation protocols across diseases to identify shared technical challenges and disease-specific characteristics. (3) We critically examine key barriers to clinical translation, including interpretability, robustness, and cross-population generalization, and outline future research directions. In addition, we introduce a conceptual framework that organizes disease domains, clinical tasks and methodological categories, as shown in Figure 1. This knowledge graph serves as an organizational framework to facilitate efficient navigation and cross-domain understanding of the review.

Figure 1. Conceptual framework of the review showing the organization of disease domains, clinical tasks and methodological categories. AI: Artificial intelligence; PD: Parkinson’s disease; AD: Alzheimer’s disease; COPD: chronic obstructive pulmonary disease; COVID-19: coronavirus disease 2019; SCZ: Schizophrenia; SHAP: SHapley additive explanations; LIME: local interpretable model-agnostic explanations; MDVR-KCL: Mobile Device Voice Recordings at King’s College London; EWA-DB: early warning of Alzheimer speech database; DAIC-WOZ: Distress Analysis Interview Corpus-Wizard of Oz; MODMA: Multi-modal Open Dataset for Mental-disorder Analysis; CWLSD: Chest Wall Lung Sound Database.

METHOD

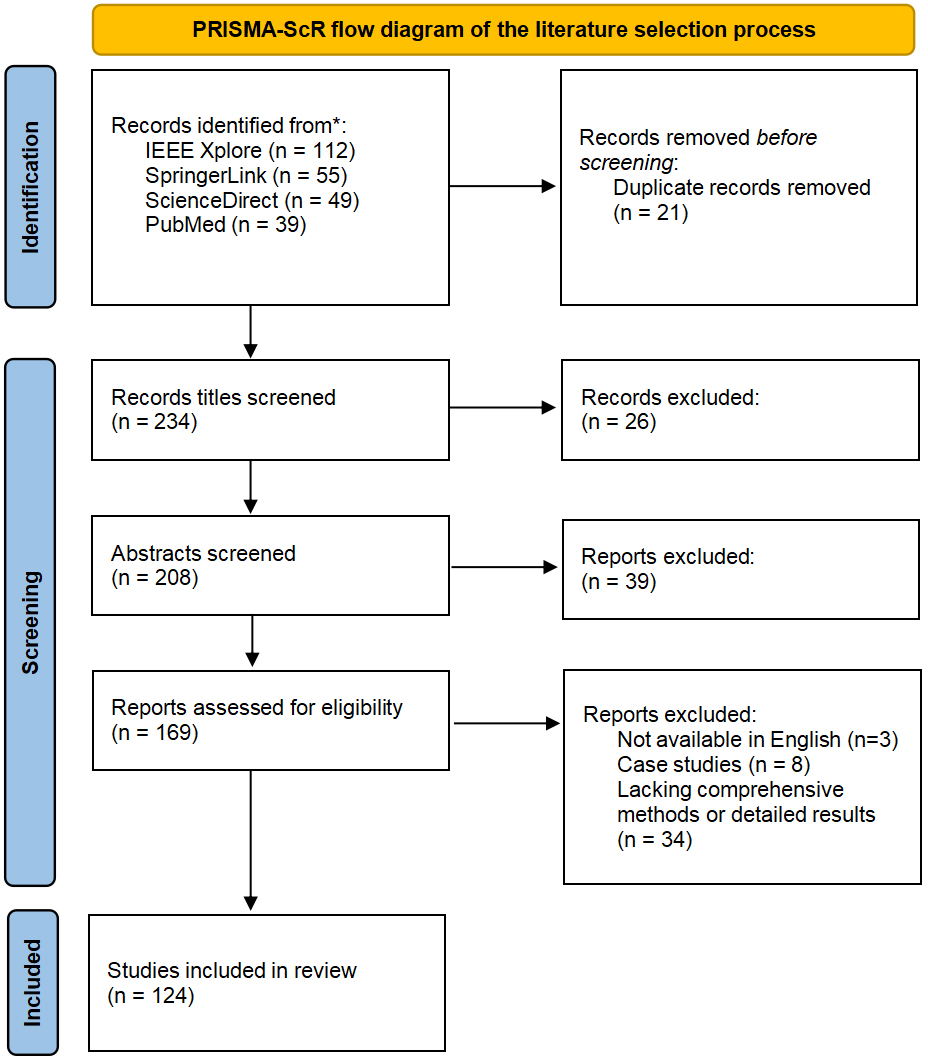

This review adopts a scoping review methodology to systematically map and synthesize existing literature on speech-based AI for disease analysis. The methodological framework of this review was informed by the Preferred Reporting Items for Systematic Reviews and Meta-Analyses Extension for Scoping Reviews (PRISMA-ScR)[27], to enhance transparency and reproducibility of the literature mapping process. For more information, please refer to Figure 2.

Figure 2. PRISMA-ScR flow diagram of the literature identification and selection process. PRISMA-ScR: Preferred Reporting Items for Systematic Reviews and Meta-Analyses Extension for Scoping Reviews.

Information sources and search strategy

Based on the venues represented in the final reference list, the literature search covered both biomedical and engineering-oriented databases, including PubMed, Web of Science, IEEE Xplore, ScienceDirect, and Scopus. The search covered studies published between January 2015 and December 2025. Only articles published in English were considered.

The search strategy was structured around four complementary conceptual dimensions: speech-related data modalities, clinical disease contexts, clinical tasks, and AI methodologies. Speech-related terms included speech, voice, audio, and acoustics. Disease-related terms encompassed both general descriptors such as disease and disorder and higher-level disease categories (e.g., neurological, psychiatric, and respiratory disorders). Task-related terms focused on clinically relevant objectives, including diagnosis, assessment, severity grading, prediction, and screening. Methodological terms included ML, DL, AI, neural networks, foundation models, and large models (LMs).

These concepts were combined using Boolean operators, with database-specific adaptations applied where necessary. A representative search query followed the structure:

(“speech” OR “voice” OR “audio”) AND (“disease” OR “disorder” OR “clinical” OR “medical”) AND (“diagnosis” OR “assessment” OR “severity” OR “prediction” OR “screening”) AND (“ML” OR “DL” OR “AI” OR “LM”)

Study selection and eligibility criteria

All retrieved records were imported into a reference management system, and duplicate entries were removed. Two independent reviewers screened titles and abstracts to identify potentially eligible studies, followed by full-text assessment of selected articles. Disagreements were resolved first through consensus or by consultation with another author.

Studies were included if they utilized human speech or voice data as a primary modality, addressed clinically meaningful tasks such as disease diagnosis, assessment, severity grading, screening, or prediction, applied AI-based modeling approaches, and reported quantitative experimental results (e.g., accuracy, sensitivity, specificity, or area under the curve). Both traditional ML, DL, and foundation-model-based approaches were considered.

Studies were excluded if they were limited to abstracts, reviews, editorials, or commentaries; focused exclusively on generic speech tasks (e.g., speech recognition) without disease relevance; relied solely on speech-to-text semantic analysis without acoustic modeling; or failed to report methodological details and experimental performance.

Data extraction and study categorization

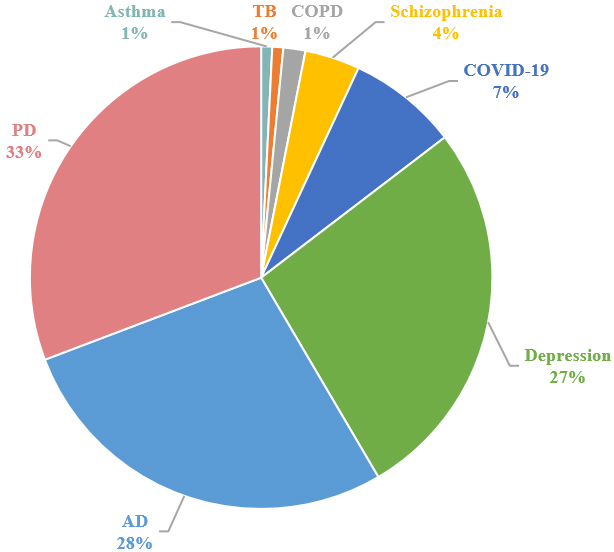

For each included study, information regarding disease category, clinical task, speech modality, modeling methodology, dataset characteristics, and evaluation metrics was extracted. To facilitate systematic synthesis, studies were categorized along three primary dimensions: disease domain, clinical task, and AI methodology. This structured organization enabled comparative analysis across diseases and modeling paradigms, supporting a comprehensive assessment of methodological trends and clinical applicability. The distribution of papers per disease task is shown in Figure 3.

SPEECH DATA

As an important digital biomarker of health, speech data has the characteristics of non-invasiveness, ease of acquisition, and convenient collection. Data collection plays a crucial role in the development of ML models for disease analysis and is the cornerstone of building and training these models. In this section, we will introduce several mainstream speech datasets. For more detailed information about the datasets, please consult Table 2.

The summary of speech datasets

| Dataset | Year | Disease | Disease category | Access link |

| Oxford | 2008 | PD | PD:23, HC:6 | https://www.kaggle.com/datasets/thecansin/parkinsons-data-set |

| NeuroVoz | 2024 | PD | PD:54, HC:58 | https://zenodo.org/doi/10.5281/zenodo.10777656 |

| MDVR-KCL | 2017 | PD | PD:16, HC:21 | https://zenodo.org/records/2867216 |

| DementiaBank | 2005 | AD | AD:117, HC:93 | https://tensorflow.google.cn/datasets/catalog/dementiabank |

| EWA-DB | 2017 | PD&AD | AD:87, PD:175, MCI:62, AD&PD:2, HC:1323 | https://zenodo.org/records/10952480 |

| iFLYTEK | 2019 | AD | AD:84, MCI:179, HC:138 | https://challenge.xfyun.cn/2019/gamedetail?blockId=978 |

| DAIC-WOZ | 2014 | Depression | Depression:56, HC:133 | https://dcapswoz.ict.usc.edu/ |

| MODMA | 2022 | Depression | Depression:23, HC:29 | https://modma.lzu.edu.cn/data/index/ |

| The SCZ dataset | 2022 | SCZ | SCZ:34, HC:38 | ____________________ |

| Coswara | 2023 | COVID-19 | COVID-19:674, HC:1819, and 142 recovered subjects | https://coswara.iisc.ac.in/ |

| COUGHVID | 2021 | COVID-19 | COVID-19 over 25,000 recordings (1,155 samples tested positive) | https://opendatalab.org.cn/OpenDataLab/COUGHVID |

| COVID-19 Sounds | 2021 | COVID-19 | COVID-19 36,116 participants (2,106 samples tested positive) | https://openreview.net/forum?id=9KArJb4r5ZQ |

| CWLSD | 2021 | COPD | COPD:77, HC:35 | https://data.mendeley.com/datasets/jwyy9np4gv/3 |

PD. The Oxford Parkinson’s Disease Detection Dataset was created by Little et al.[28] in 2008. By asking subjects to continuously pronounce specific vowel sounds and using the Multi-Dimensional Voice Program (MDVP) and advanced mathematical analysis methods, 22 acoustic features were extracted to distinguish between control participants and PD patients. The creation of this dataset is of great significance for the remote monitoring and early diagnosis of PD. NeuroVoz was jointly recorded by the Bioengineering and Optoelectronics (ByO) group from Universidad Politécnica de Madrid (UPM) and the Otorhinolaryngology and Neurology Services of Hospital General Universitario Gregorio Marañón (HGUGM) and Hospital Universitario de Fuenlabrada (HUF), Madrid, Spain[29]. It provides rich resources for scientific research on the impact of PD on speech and is currently the most complete public speech corpus for PD. The Mobile Device Voice Recordings at King’s College London (MDVR-KCL) dataset was collected by King’s College London (KCL) Hospital in 2017 using smartphones to conduct voice calls with subjects, and all calls were made in a quiet indoor environment[30].

AD. DementiaBank was created by Boller and Becker in 2005. DementiaBank is a shared database of multimedia interactions for the study of communication in dementia[31]. The dataset contains 117 people diagnosed with AD and 93 participants from a control group reading a description of an image. Early Warning of Alzheimer speech database (EWA-DB) is a speech database that contains data from 3 clinical groups: AD, PD, mild cognitive impairment (MCI), as well as a control group of cognitively unimpaired participants[32]. iFLYTEK is a Chinese dataset created in 2019. It contains the speech and text data of 138 control participants, 179 people with MCI, and 84 AD patients. These datasets provide researchers with comprehensive data support for in-depth exploration of speech features during the progression of AD, significantly advancing the application of speech analysis in AD diagnostic research.

Depression. The Distress Analysis Interview Corpus-Wizard of Oz (DAIC-WOZ) database is part of the Distress Analysis Interview Corpus (DAIC)[33]. This corpus mainly contains clinical interview records and aims to support the diagnosis of psychological distress conditions such as anxiety, depression, and post-traumatic stress disorder. The dataset contains 189 interview data, with an average interview duration of 16 minutes. Each interview includes the transcribed text of the interview, the audio file of the participant, and facial feature information. This dataset is commonly used in text-based detection, speech-based detection, and multimodal architecture research. The Multi-modal Open Dataset for Mental-disorder Analysis (MODMA) dataset is a multi-modal open dataset for mental disorder analysis, released and continuously updated by the Key Laboratory of Wearable Devices of Gansu Province, Lanzhou University[34]. It contains data of clinical depression patients and control participants.

Schizophrenia (SCZ). The Schizophrenia dataset (also named SCZ dataset) is recruited by the Psychiatry Department of the Mental Health Center, Sichuan University[35]. It comprises 34 first-episode drug-naive patients with SCZ and 38 participants from a control group. All participants are asked to read text with neutral, positive, and negative sentiments. All recordings were made in 16-bit format using SONY ICD-TX650, and the sampling frequency is 44.1 kHz. Specifically, SCZ dataset comprises 720 utterances (340 schizophrenic patients and 380 control participants) with a neutral sentiment, 569 utterances (271 schizophrenic patients and 298 control participants) with a positive sentiment (emotional state of happiness), and 216 utterances (102 schizophrenic patients and 114 control participants) with a negative sentiment (emotional state of anger).

Coronavirus disease 2019. Coswara is one of the most widely used datasets for sound-based coronavirus disease 2019 (COVID-19) detection[36]. Its sound samples were crowdsourced globally via a web application, recorded and stored at a sampling frequency of 16kHz, comprising over 140,000 audio files. This dataset contains a diverse set of respiratory sounds and rich metadata, recorded between April 2020 and February 2022 from 2,635 individuals [1,819 SARS-CoV-2 (severe acute respiratory syndrome coronavirus 2) negative, 674 positive, and 142 recovered subjects]. The respiratory sounds encompass nine categories associated with variations of breathing, cough, and speech. The rich metadata includes demographic information such as age, gender, and geographic location, as well as health information relating to symptoms, pre-existing respiratory ailments, comorbidities, and SARS-CoV-2 test status. The COUGHVID crowdsourcing dataset is an extensive, validated, and publicly available dataset of cough recordings[37]. With over 20,000 recordings (including 1,010 self-reported COVID-19 cases) sourced globally. Notably, Experienced pulmonologists labeled more than 2,000 recordings to diagnose medical abnormalities present in the coughs, thereby contributing one of the largest expert-labeled cough datasets in existence that can be used for a plethora of cough audio classification tasks. To the best of our knowledge, COVID-19 Sounds is the largest multimodal COVID-19 respiratory sound dataset: it comprises three modalities, namely breathing, cough, and voice recordings[38]. Crowd-sourced from 36,116 global participants via the COVID-19 Sounds App, the dataset contains 53,449 audio samples (including 2,106 COVID-19 positive samples). As the dataset was crowd-sourced from various platforms, it contains diverse audio file formats (e.g., .ogg, .m4a, .wav, and .webm) and sampling rates (specifically: 2.6% at 8KHz, 0.3% at 12KHz, 50.3% at 16KHz, 36.7% at 44.1KHz, and 10.1% at 48KHz).

COPD. The Chest Wall Lung Sound Database (CWLSD)[39] comprises sounds from seven medical conditions (namely asthma, heart failure, pneumonia, bronchitis, pleural effusion, lung fibrosis, and COPD) and normal breathing sounds. This dataset features audio recordings collected from chest wall examinations at multiple vantage points. It includes respiratory sounds from 112 subjects (35 control participants and 77 patients with ailments, among whom 9 are COPD patients).

AI FRAMEWORK

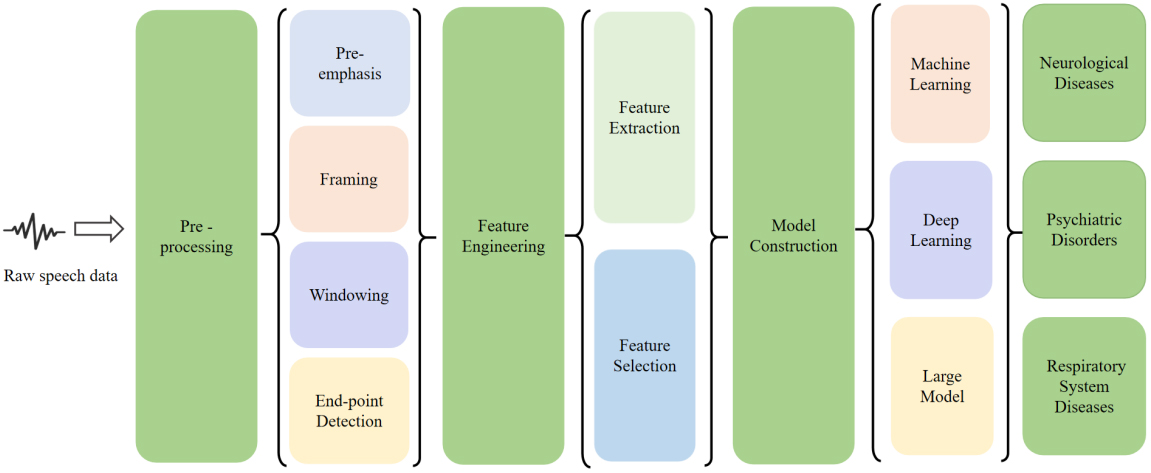

In this section, we introduce the framework for speech-based disease analysis (see Figure 4), including main steps such as pre-processing, feature engineering, and model construction. Firstly, pre-processing the original speech data eliminates irrelevant information such as noise and interference. Then, speech feature parameters that can reflect the characteristics of diseases are extracted from the pre-processed speech data, converting the original speech signal into a representative and discriminative feature vector. Next, feature selection is used to remove redundant and irrelevant features, selecting the most valuable and discriminative feature subset from the numerous extracted features. Finally, a model is constructed to leverage computational power and ML to automatically identify the relationships between speech features and diseases, enabling the assessment, prediction, and diagnosis of new speech data.

Pre-processing

In the reviewed literature, speech pre-processing served as a task-driven step designed to enhance signal quality and adapt raw recordings to disease-specific diagnostic scenarios. Most studies applied a standardized preprocessing pipeline tailored to clinical speech tasks such as sustained phonation, reading passages, or spontaneous speech.

Pre-emphasis was widely used to amplify high-frequency components that are typically attenuated during speech production and recording. In PD studies relying on cepstral and linear predictive features, pre-emphasis was applied prior to Mel-Frequency Cepstral Coefficients (MFCC) or Linear Predictive Coding (LPC) extraction to better capture articulatory imprecision and phonatory instability associated with hypokinetic dysarthria[4,13,40]. Similar preprocessing strategies were also reported in early AD speech analysis to enhance subtle spectral changes related to cognitive decline[41,42].

Framing and windowing were universally adopted to exploit the short-term quasi-stationary nature of speech signals. Across neurological and psychiatric disorder studies, speech signals were typically segmented into frames of 20-40 ms with partial overlap, and Hamming windows were the most frequently employed windowing function[16,43,44]. This configuration ensured stable short-term spectral representations and reduced spectral artifacts caused by abrupt signal truncation.

Endpoint detection was primarily used to isolate effective speech segments and exclude silence or low-energy regions. This step was particularly relevant in PD and dysarthria studies using sustained vowel phonation, where accurate isolation of voiced segments improved robustness against background noise and recording variability[45,46]. In spontaneous speech tasks for AD and depression detection, endpoint detection also helped remove non-informative pauses and recording artifacts[6,7].

Overall, preprocessing in the reviewed studies was closely aligned with the characteristics of the speech task and the targeted disease, rather than being treated as a purely technical routine.

Feature engineering

Feature engineering serves as a critical link between raw speech signals and downstream diagnostic models. In the reviewed studies, this process generally comprised two stages: feature extraction, which transforms speech signals into acoustic representations, and feature selection, which aims to retain disease-relevant features while reducing redundancy and dimensionality. The choice of these strategies was closely influenced by disease characteristics, speech tasks, and dataset scale.

Feature extraction. Feature engineering remained a central component in speech-based disease analysis. The reviewed studies consistently employed combinations of prosodic, spectral, and voice quality-related features, with the choice of features reflecting both disease pathology and data availability.

Prosodic features, including fundamental frequency, speaking rate, pause duration, and intensity-related measures, were widely used to characterize temporal and rhythmic abnormalities in speech. These features were particularly prevalent in studies on PD, depression, and SCZ, where altered speech rhythm and prosody are clinically observable symptoms[47-49].

Spectral features constituted the most commonly used feature category across all disease domains. MFCCs, LPC, and log-Mel spectrogram representations were repeatedly adopted in PD and AD studies due to their effectiveness in encoding vocal tract characteristics and phonatory patterns[50]. In several works, spectral features formed the primary input for both traditional ML and DL models.

Voice quality-related features, such as jitter, shimmer, and harmonics-to-noise ratio (HNR), were frequently employed to capture micro-instabilities in vocal fold vibration. These features were especially prominent in PD and voice disorder studies, reflecting impairments in phonatory control and vocal stability[40,45,46]. Many studies reported improved diagnostic performance when voice quality features were combined with spectral representations.

Feature selection. Given the limited sample sizes typical of clinical speech datasets, feature selection was widely applied to reduce dimensionality and mitigate overfitting. Most reviewed studies favored statistically motivated filter methods or embedded feature selection strategies integrated within classifiers, such as L1-regularized models and tree-based approaches[51,52]. Wrapper-based methods were less frequently adopted due to their high computational cost and sensitivity to small datasets.

Rather than emphasizing methodological taxonomy, feature selection in the reviewed literature primarily aimed to identify disease-sensitive features and improve model generalizability. Representative feature selection strategies are summarized in Table 3.

The classification of feature selection methods

| Category | Definition | Common methods |

| Filter | Evaluate the importance of features by assessing the statistical properties of features (such as variance, correlation, mutual information, etc.), and select features accordingly | Chi-square test, mutual information, Pearson correlation coefficient, etc. |

| Wrapper | Regard feature selection as an optimization problem, and find the optimal feature combination by searching different feature subsets | Recursive Feature Elimination (RFE), Forward Selection, etc. |

| Embedded | Embedded feature selection incorporates feature selection into the model training process. By evaluating and adjusting the importance of features during the model training process, the optimal feature subset is selected | Lasso regression, decision trees, etc. |

Model construction

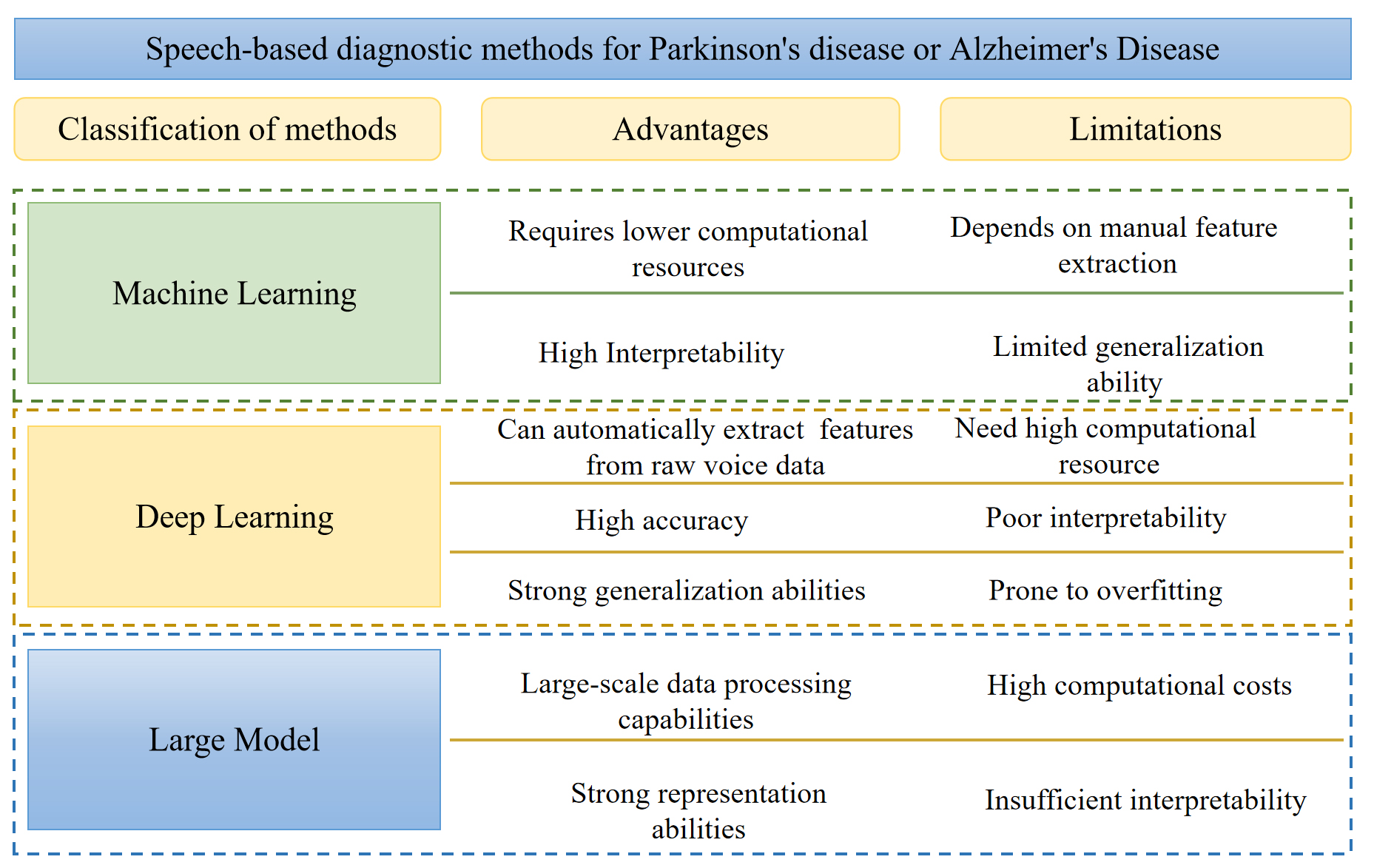

In the process of using speech data for disease diagnosis, model construction is the core link. It determines how to extract key features related to diseases from complex speech signals and make accurate predictions or diagnoses based on them. Currently, the construction methods of AI-assisted diagnosis models can be mainly divided into three categories: traditional ML, DL and LMs. For the specific comparison of the advantages and disadvantages of these three methods, please refer to Figure 5.

Machine learning. Traditional ML methods remain widely adopted in speech-based disease diagnosis, particularly in studies relying on handcrafted acoustic features and limited clinical datasets. Algorithms such as support vector machines (SVMs) and random forests (RFs) are frequently used due to their relatively low data requirements and stable performance in small-sample scenarios. From a methodological perspective, ML models operate on explicitly defined feature spaces, allowing them to exploit disease-sensitive acoustic descriptors such as prosodic irregularities or voice quality perturbations. This property makes ML approaches particularly suitable for clinical studies where interpretability and feature-level analysis are important. However, their reliance on predefined features also constrains their ability to capture complex temporal dependencies and subtle nonlinear patterns in speech, which may limit performance in more heterogeneous disease populations.

Deep learning. With the advancement of DL techniques, CNNs have been widely applied to disease-related speech analysis. Recurrent neural networks (RNNs)[53], including long short-term memory (LSTM)[54] networks and gated recurrent units (GRUs)[55], as well as Transformer-based models, have also been increasingly explored in this domain. Unlike traditional ML approaches, DL models can learn hierarchical representations directly from time-frequency features, such as spectrograms, thereby reducing dependence on manual feature design. CNN-based architectures are particularly effective in modeling local spectral patterns, while RNNs and Transformers are better suited for capturing temporal dynamics and long-range dependencies in speech signals. These capabilities are advantageous for diseases characterized by progressive or task-dependent speech impairments. Nevertheless, the reviewed studies indicate that DL-based models typically require larger datasets and careful regularization to avoid overfitting, which remains a practical limitation in many clinical speech datasets.

Large models. LMs, including foundation models pretrained on massive speech or multimodal corpora, represent an emerging paradigm in speech analysis. Models such as Qwen-Audio[56], LlaMA2[57] and Viola[58] have demonstrated strong general-purpose representation learning capabilities. In theory, such models offer the potential to capture complex and subtle speech patterns that may be difficult to learn from limited clinical data alone.

However, based on the reviewed literature, the application of LMs to disease-specific speech diagnosis remains largely exploratory. Most existing studies focus on feasibility analysis, transfer learning, or hypothetical clinical potential rather than validated diagnostic deployment. Challenges such as domain mismatch, limited labeled medical speech data, and concerns regarding interpretability currently restrict their widespread clinical adoption. As a result, LMs should be regarded as a promising future direction rather than a mature solution for speech-based disease diagnosis.

Overall, the reviewed studies suggest that no single modeling paradigm is universally optimal for speech-based disease diagnosis. Traditional ML methods remain effective in small-scale, feature-driven clinical settings, while DL approaches offer improved representation capacity at the cost of higher data demands. LMs introduce new opportunities for scalable and transferable speech modeling, but their clinical readiness has yet to be fully demonstrated. The choice of modeling strategy therefore depends on disease characteristics, dataset scale, and the balance between diagnostic performance and interpretability.

NEUROLOGICAL DISORDERS

Neurological disorders are the leading cause of the global disease burden, significantly affecting both the central and peripheral nervous systems[59]. In 2021, neurological disorders affected approximately 3.4 billion people worldwide, accounting for 43% of the global population[60]. The research on the application of speech processing technology in the diagnosis of neurological disorders, particularly in the context of PD and AD, has witnessed significant advancements in recent times. In this section, we will comprehensively and elaborately review the relevant research achievements of speech in the diagnosis of these two neurological disorders. The summary of research literature is shown in Table 4.

The Summary of applications of speech analysis in neurological disorders

| Reference | Disease | Task | Method | Modality | Performance |

| Upadhya et al.[40] | PD | Classification | NN | Speech | Accuracy: 98% Specificity: 96.6% Sensitivity: 99.4% |

| Haider et al.[42] | AD | Detection | DT | Speech | Accuracy: 78.7% |

| Faragó et al.[44] | PD | Identification in noise | CNN | Speech | Accuracy: 96% |

| Bayesiehtashk et al.[46] | PD | Severity Assessment | Ridge regression | Speech | - |

| Hason et al.[50] | AD | Prediction and stages classification | RF | Speech | Accuracy: 82.2% Precision: 81.6% Recall: 81.4% AUC: 89.3% |

| Karan[51] | PD | Prediction | XGBoost | Speech | Accuracy: 95.07% AUC: 96% Specificity: 89.57% Sensitivity: 95.07% |

| Tunc et al.[52] | PD | Severity Estimation | XGBoost | Speech | MAE: 7.13±1.07 |

| Moro-Velazquez et al.[61] | PD | Detection | GMM-UBM classifiers | Speech | Accuracy: 75%-82% AUC: 84%-95% |

| Shastry[62] | PD | Prediction | KNN + GB | Speech | Accuracy: 75.48% Precision: 75.63% Recall: 74.92% F1: 75.06% AUC: 74.92% |

| Mohammadi et al.[63] | PD | Diagnosis | LR | Speech | Accuracy: 97.22% |

| Govindu et al.[64] | PD | Early Detection | RF | Speech | Accuracy: 91.83% Sensitivity: 95% |

| Mahesh et al.[65] | PD | Prediction | XGBoost-RF | Speech | Accuracy: 98% Precision: 97.24% F1: 97.4% Specificity: 97% Sensitivity: 97.56% |

| Al Mudawi et al.[66] | PD | Detection | LightGBM | Speech | Accuracy: 98.3051% |

| Jain et al.[67] | PD | Detection | KNN | Speech | Accuracy: 97.33% Precision: 96.2963% Recall: 1% F1: 98.1132% |

| Wang et al.[68] | PD | Diagnosis | EMSFE | Speech | Accuracy: 92.5% Precision: 94.7% Specificity: 95% Sensitivity: 90% |

| Deepa et al.[69] | PD | Detection & Classification | ERT | Speech | Accuracy: 87% |

| Abedinzadeh Torghabeh et al.[70] | PD | Severity Assessment | WMV | Speech | Accuracy: 98.62% Precision: 98.62% F1: 98.62% Specificity: 99.54% Sensitivity: 98.62% |

| Laudis et al.[71] | PD | Classification | SVM | Speech | Accuracy: 92.5% Precision: 91% Recall: 94% F1: 92.5% AUC: 93% Specificity: 93% Accuracy: 91% |

| Yuan et al.[72] | PD | Prediction | DNN | Speech | Accuracy: 95% F1: 97% |

| Wrobel et al.[73] | PD | Diagnosis | MLP | Speech | Accuracy: 90.6% |

| Liu et al.[74] | PD | Prediction | ANN | Speech | Precision: 96% F1: 98% AUC: 91% Specificity: 82% Sensitivity: 99% |

| Quan et al.[75] | PD | Detection | CNN | Speech | Accuracy: 92% |

| Tayebi Arasich et al.[76] | PD | Detection | wav2vec 2.0 | Speech | Accuracy: 83.2% AUC: 85% Specificity: 89.2% Sensitivity: 90.8% |

| Wang et al.[77] | PD | Recognition | SVM | Speech | Accuracy: 87.5% Specificity: 86.11% Sensitivity: 88.89% |

| Xu et al.[78] | PD | Diagnosis | DNN | Speech | Accuracy: 89.5% |

| Pandey et al.[79] | PD | Prediction | CNN-LSTM | Speech | Accuracy: 97% |

| Mishra et al.[80] | PD | Severity Assessment | DNN | Speech | Accuracy: 96.2% Specificity: 96.15% Sensitivity: 94.15% |

| Jeancoias et al.[81] | PD | Early Detection | DNN + X-vectors | Speech | |

| Chronowski et al.[82] | PD | Diagnosis | wav2vec 2.0 | Speech | Accuracy: 97.92% AUC: 99% |

| Khaskhoussy et al.[83] | PD | Diagnosis | MLP | Speech | Accuracy: 95.52% |

| Hireš et al.[84] | PD | Detection | CNN | Speech | Accuracy: 99% AUC: 89.6% Specificity: 93.3% Sensitivity: 86.2% |

| Akila et al.[85] | PD | Classification | CNN | Speech | Accuracy: 99.1% Precision: 97.8% Recall: 94.7% F1: 99.5% |

| Palakayala et al.[86] | PD | Detection | DCNN + KNN | Speech | Accuracy: 93.7% |

| Skibińska et al.[87] | PD | Diagnosis | XGBoost[177] | audio and video | Accuracy: 83% Specificity: 78% Sensitivity: 88% |

| Khan Tusar et al.[88] | PD | Early Diagnosis | GB/AdaBoost | Speech | Accuracy: 92% F1: 92% AUC: 81% |

| Yousif et al.[89] | PD | Early Diagnosis | KNN+SVM | Images and Speech | Accuracy: 99.94% |

| Pappagari et al.[93] | Assessment and detection | X-vectors, BERT | Speech | Precision: 70% Recall: 88% F1: 78% | |

| Khodabakhsh et al.[95] | AD | Detection | SVM | Speech | Accuracy: 83.5% |

| König et al.[96] | AD | Assessment and detection of early stage dementia and MCI | ML | Speech | Accuracy: 92% |

| Li et al.[97] | AD | dementia Detection | LR | Speech | Accuracy: 77% |

| Ben Ammar et al.[98] | AD | Early Detection | SVM | Speech | Accuracy: 91(± 0.5)% Recall: 91(± 0.5)% |

| Nasrolahzadeh et al.[99] | AD | Diagnosis | GP | Speech | Accuracy: 99.09% |

| König[100] | AD | MCI/AD Classification | SVM | Speech | Accuracy: 79% ± 5%(MCI vs. HC) 87% ± 3%(AD vs. HC) 80% ± 5%(MCI vs. AD) |

| López-de-Ipiña et al.[101] | AD | Diagnosis | SVM | Speech | Accuracy: 93.79% |

| García-Gutiérrez et al.[102] | AD | SCD/MCI/ADD Identification | ML | Speech | F1: 92 |

| Kim et al.[103] | AD | Classification | Speech | Accuracy: 78% Precision: 82% Recall: 76% F1: 79% | |

| Chien et al.[104] | AD | Assessment | RNN | Speech | Accuracy: 83.8% AUC: 83.8 ± 3% Specificity: 76.4 ± 6% Sensitivity: 75.6 ± 7% |

| Roshanzamir et al.[105] | AD | Risk Assessment | Transformer+LR | Speech | Accuracy: 88.08% Precision: 90.57% Recall: 84.34% F1: 87.23% |

| Dong et al.[106] | AD | Detection | HAFFormer | Speech | Accuracy: 82.6% F1: 82.6% |

| Liu et al.[107] | AD | Detection | Transformer | Speech | Accuracy: 93.5% Precision: 94% Recall: 89% F1: 91.19% |

| Liu et al.[108] | AD | Detection | CNN + BiLSTM | Speech | Accuracy: 82.59% Precision: 85.24% Recall: 81.48% F1: 82.94% |

| Ahn et al.[109] | AD | Early Detection | Densenet121 | Speech | Accuracy: 90% F1: 91.39% AUC: 92.43% Specificity: 83.33% Sensitivity: 95.5% |

| Farazi et al.[110] | AD | Detection | CNN | Speech | Accuracy: 85% |

| Mittal et al.[111] | AD | AD detection | BERT + CNN | Speech and Text | Accuracy: 85.3% |

| Haulcy et al.[112] | AD | Classification | BERT + SVM/RF | Audio and Text | Accuracy: 85.4% F1: 84.4% Specificity: 82.3% Sensitivity: 89.2% |

| Li et al.[113] | AD | Prediction | BERT | Audio and Text | Accuracy: 83.69% |

| Jang et al.[114] | AD | Classification | LR | Speech and Video | Accuracy: 83 ± 1% |

| Ablimit et al.[115] | AD | Detection | CNN-GRU | Speech | Recall: 70.6%UAR |

| Martinc et al.[116] | AD | Diagnosis | BERT | Audio and Text | Accuracy: 93.75% |

| Mahajan et al.[117] | AD | Detection | CNN-LSTM | Speech | Accuracy: 72.92% Precision: 78.94% Recall: 62.5% F1: 69.76% |

| Zhang et al.[118] | AD | Detection | wav2vec 2.0 | Speech | Accuracy: 85.45% |

| Li et al.[119] | AD | Classification | Whisper | Speech | Accuracy: 84.51% Precision: 83.33% Recall: 85.71% F1: 84.5% |

| Bang et al.[120] | AD | Prediction | ChatGPT | Speech, Text, and Opinions | Accuracy: 87.3% Precision: 88.06% Recall: 87.32% F1: 87.25% Specificity: 94.44% |

| Cui et al.[121] | AD | Detection | WavLM + BERT | Speech and Text | F1: 92.8% |

PD

PD is a progressive neurodegenerative disorder characterized by motor and non-motor impairments. Speech disorders are among the most prevalent symptoms, affecting approximately 70%-90% of patients and substantially reducing quality of life[45]. Compared with the control group, individuals with PD exhibit consistent abnormalities across multiple acoustic dimensions, including phonation stability, articulation, and prosody[43]. These speech alterations have motivated extensive research into speech-based PD diagnosis, positioning speech as a non-invasive and cost-effective digital biomarker.

Early studies focused on identifying discriminative acoustic markers between PD patients and control groups. For example, Wang et al.[49] reported systematically lower formant frequencies in PD patients across multiple syllable phonation tasks, suggesting impaired articulatory control. Moro-Velazquez et al.[61] further demonstrated that specific phoneme groups - particularly plosives, vowels, and fricatives - carry higher diagnostic relevance for PD detection across multiple speech corpora. Similarly, Upadhya et al.[40] showed that articulatory features, either alone or combined with cepstral features, provide strong discriminative power, highlighting the importance of motor speech dysfunction in PD-related vocal impairment.

Traditional ML models with handcrafted features

Traditional ML approaches constitute the earliest and most extensively studied paradigm for speech-based PD diagnosis. These methods typically rely on handcrafted acoustic features, such as fundamental frequency (F0), jitter, shimmer, HNR, formant-related measures, and MFCCs, combined with classical classifiers including SVM, RF, KNN, Logistic Regression (LR), and gradient boosting machines (GBMs)[62,63].

Across multiple studies, ensemble-based and tree-based models frequently demonstrate strong diagnostic performance. Govindu et al.[64] and Mahesh et al.[65] reported that RF-based or boosting-based classifiers outperform other ML models when trained on perturbation-related vocal features. Similar trends were observed in comparative studies by Al Mudawi et al.[66] and Jain et al.[67], where Light Gradient Boosting Machine (LightGBM) and KNN achieved high classification accuracy. Wang et al.[68] designed a novel ensemble learning algorithm (EMSFE) based on a Sparse Fusion Ensemble Learning Mechanism (SFELM). Experimental results showed that the proposed algorithm achieved an accuracy of 93.75%. These findings suggest that non-linear ensemble learners are particularly well-suited for capturing subtle interactions among handcrafted speech features.

Beyond binary diagnosis, several studies explored severity estimation and continuous monitoring[69]. Abedinzadeh Torghabeh et al.[70] demonstrated that optimized ML classifiers could robustly stratify PD severity levels, while Bayesiehtashk et al.[46] and Tunc et al.[52] showed that speech-derived features correlate with clinical severity scores across multiple speech tasks. These results support the feasibility of speech-based models for longitudinal PD monitoring rather than solely binary classification.

Feature selection plays a crucial role in improving model efficiency and robustness. Laudis et al.[71] showed that reducing MFCC dimensionality via feature selection not only improved classification accuracy but also reduced computational cost. Similar observations were reported by Yuan et al.[72] and Karan[51], indicating that a compact subset of well-chosen acoustic features often outperforms high-dimensional representations[73].

Overall, handcrafted-feature-based ML models consistently achieve strong performance on benchmark datasets and offer relatively high interpretability. However, many studies rely on small or single-center datasets, raising concerns about overfitting and limited generalizability to real-world clinical populations.

DL models

DL approaches have increasingly been adopted to overcome the limitations of manual feature engineering by enabling automatic representation learning from raw or minimally processed speech signals[74]. CNNs, RNNs, and Transformer-based architectures have been explored across various speech tasks.

Quan et al.[75] proposed an end-to-end DL framework combining temporal modeling with spectrogram-based representations, demonstrating improved performance over expert-crafted features on both sustained vowel and reading tasks. Tayebi Arasich et al.[76] extended DL-based PD diagnosis to a federated learning (FL) setting, showing that privacy-preserving collaboration across multilingual datasets can outperform monolingual models without data sharing, addressing a key barrier to multi-center clinical adoption.

Several studies focused on improving data efficiency and robustness. Wang et al.[77] introduced a deep speech sample learning strategy to reconstruct high-quality prototype samples, while Xu et al.[78] employed a Deep Neural Network (DNN) framework using voiceprint features to enhance PD discrimination. Hybrid DL architectures, such as CNN-LSTM combinations, were explored by Pandey et al.[79], aiming to capture both spectral patterns and temporal dynamics. These approaches generally outperform traditional ML baselines, particularly in continuous or spontaneous speech scenarios.

Noise robustness and real-world applicability were addressed by Faragó et al.[44], who demonstrated effective PD detection from noisy continuous speech, and Mishra et al.[80], who implemented a cloud-based home monitoring framework. Early-stage detection was further explored by Jeancoias et al.[81], showing that speaker embedding techniques such as X-vectors are particularly effective for detecting early PD, especially in text-independent settings.

Recent studies have leveraged transfer learning from large-scale speech models. Chronowski et al.[82] utilized wav2vec 2.0 representations for PD classification, achieving strong performance under cross-validation. Other works explored autoencoders and optimized CNN architectures to further push diagnostic accuracy[83-86].

Overall, DL-based methods demonstrate strong potential for learning disease-relevant speech representations and generalizing across tasks and languages. Nevertheless, many reported results are obtained under controlled experimental settings, and their robustness across heterogeneous clinical populations remains to be fully validated.

Multimodal fusion

In addition to speech-only analysis, several studies investigated multimodal fusion strategies to enhance diagnostic robustness. Skibińska et al.[87] combined audio and video data, while Khan Tusar et al.[88] integrated vocal features with clinical and motor examination data. Yousif et al.[89] further explored fusion of speech and handwriting modalities, reporting very high diagnostic accuracy under experimental conditions.

While multimodal fusion consistently improves classification performance, many of these studies rely on limited datasets and task-specific protocols. Consequently, although multimodal approaches are promising, their clinical applicability requires standardized acquisition protocols, larger cohorts, and prospective validation before deployment in routine clinical practice.

Methodological synthesis for PD

From a clinical perspective, many discriminative speech features identified across studies align with known PD pathophysiology. Perturbation-based features (e.g., jitter and shimmer) reflect impaired phonatory control and hypophonia, while articulation-related features and vowel formants correspond to motor speech dysfunction and dysarthria. Temporal speech abnormalities further mirror bradykinesia and reduced motor coordination.

However, a recurring concern is the prevalence of extremely high reported accuracies, often exceeding 95%. Such results are frequently derived from small datasets, repeated use of benchmark corpora, or cross-validation without external validation, which may overestimate real-world performance. Speech-based PD diagnosis should therefore be viewed as a complementary tool to established clinical assessments, such as the Unified Parkinson’s Disease Rating Scale (UPDRS), rather than a standalone diagnostic replacement.

AD

Language impairment is one of the most prominent and early manifestations of AD, reflecting progressive cognitive decline across multiple domains, including memory, attention, and executive function[90,91]. With advances in speech processing and AI, speech has emerged as a promising non-invasive biomarker for AD screening and monitoring. Numerous studies have demonstrated that acoustic and linguistic characteristics extracted from spontaneous and task-based speech can effectively capture disease-related cognitive impairment[41,92-94].

Traditional ML models with handcrafted features

Early research on speech-based AD diagnosis primarily relied on handcrafted acoustic and linguistic features combined with classical ML classifiers. Commonly used feature sets include prosodic measures, MFCCs, Computational Paralinguistics ChallengE (ComParE), extended Geneva Minimalistic Acoustic Parameter Set (eGeMAPS), and linguistic indicators derived from transcripts, analyzed using LR, RF, and SVM classifiers.

Several studies focused on identifying core speech biomarkers associated with AD. Khodabakhsh et al.[95] showed that prosodic features extracted from conversational speech significantly outperformed linguistic features in distinguishing AD patients from control groups. Similarly, König et al.[96] demonstrated that fluency-related speech tasks recorded via mobile applications were particularly effective for differentiating AD, MCI, and subjective cognitive impairment, highlighting the feasibility of remote speech-based screening.

Cross-lingual robustness emerged as an important research direction. Li et al.[97] demonstrated that mapping engineered speech features across languages enables AD detection in low-resource settings, while Haider et al.[42] introduced an Active Data Representation framework that improved purely acoustic-based AD recognition. Linguistic feature-based approaches further showed strong discriminative power. Ben Ammar et al.[98] and Nasrolahzadeh et al.[99] demonstrated that language features and higher-order spectral representations can serve as effective biomarkers, although such methods often depend on high-quality transcripts.

Clinical feasibility has been explored in multiple studies, where traditional ML classifiers combined acoustic and linguistic features to analyze spontaneous speech, typically achieving diagnostic accuracies of approximately 80% to over 90% across early detection, staging, and severity assessment tasks[100-102]. These findings suggest the potential of non-invasive, low-cost, and remotely deployable speech-based screening approaches, rather than confirmed large-scale clinical implementation.

From a clinical perspective, traditional ML approaches offer interpretability and modest computational cost. However, their performance is sensitive to feature design and often degrades when applied to spontaneous or noisy speech, limiting generalizability across populations.

Overall, handcrafted-feature-based ML methods provide interpretable insights into speech and language impairment in AD but rely heavily on manual feature engineering and task-specific design.

DL models

DL techniques have increasingly been adopted to automatically learn discriminative representations from speech, reducing reliance on handcrafted features. CNNs, RNNs, and Transformer-based architectures dominate this research stream.

Comparative studies have consistently shown DL methods outperform traditional ML across languages. Kim et al.[103] demonstrated that DL models trained on raw acoustic representations achieved higher accuracy and faster inference than ML models using handcrafted features. Temporal modeling of spontaneous speech was further explored using RNN-based architectures, with Chien et al.[104] reporting improved discrimination through sequence-level representations.

Transformer-based models addressed challenges associated with long and heterogeneous speech sequences. Roshanzamir et al.[105] utilized a Transformer- based DL model (specifically BERT Large combined with a logistic regression classifier) for early AD risk assessment based on speech transcripts from picture description tests, achieving 88.08% classification accuracy. Dong et al.[106] proposed the Hierarchical Attention-Free Transformer (HAFFormer) framework to reduce computational complexity while maintaining performance, and Liu et al.[107] further refined Transformer representations through feature purification mechanisms. These studies highlight the importance of modeling long-range dependencies in spontaneous speech for AD detection.

Other works focused on improving data efficiency and reducing annotation dependency. Liu et al.[108] leveraged automatic speech recognition (ASR)-derived acoustic features to eliminate the need for manual transcription, while Ahn et al.[109] and Farazi et al.[110] demonstrated the effectiveness of CNN-based architectures on MFCC and Mel-spectrogram representations.

Overall, DL-based approaches show strong potential for capturing complex speech patterns associated with cognitive decline. However, many studies still rely on relatively small benchmark datasets, and external validation across diverse clinical cohorts remains limited.

Multimodal fusion

To enhance robustness, several studies integrated speech with complementary modalities, such as text, eye-tracking, or demographic information. Multimodal fusion consistently outperformed unimodal approaches by capturing both acoustic and cognitive-linguistic aspects of AD.

Mittal et al.[111] and Haulcy et al.[112] combined audio and textual representations, demonstrating that speech-text fusion improves classification accuracy over single-modality systems. Li et al.[113] proposed a multi-feature fusion model combining MFCC, audio, and text features, achieving 83.69% accuracy in AD detection. Beyond language, Jang et al.[114] incorporated eye-tracking features, while Ablimit et al.[115] integrated demographic information, improving interpretability and clinical relevance. Similarly, Martinc et al.[116] fused spontaneous speech audio with textual features and achieved high diagnostic accuracy for AD, while Mahajan et al.[117] demonstrated that bimodal deep learning models integrating acoustic and transcript-derived linguistic features consistently improved precision compared with acoustic-only baselines.

Despite promising performance, multimodal approaches often require complex data acquisition protocols and standardized task design. Consequently, their translation into large-scale clinical screening remains challenging.

Foundation and pre-trained models

Recent studies have explored pre-trained foundation models to leverage large-scale speech and language knowledge for AD detection. Zhang et al.[118] demonstrated that wav2vec 2.0 representations significantly improve AD recognition performance. Li et al.[119] further showed that incorporating global semantic context using Whisper-based transcripts enhances diagnostic accuracy.

Large language models have also been investigated. Bang et al.[120] and Cui et al.[121] explored LLM-based and sequential knowledge transfer frameworks, demonstrating improved detection of AD and related affective disorders. These approaches represent a shift toward generalized, adaptable diagnostic frameworks.

Nevertheless, most foundation-model-based studies remain retrospective and experimental. Their clinical readiness requires careful validation, transparency, and assessment of failure modes.

Methodological synthesis for AD

Speech and language impairments in AD reflect underlying cognitive deficits in memory, executive function, and semantic processing. Acoustic degradation, reduced fluency, and linguistic simplification observed across studies align with known neuropathological mechanisms.

However, as with PD, many studies report high diagnostic accuracy under controlled conditions. These results should be interpreted cautiously, as real-world deployment requires robustness to demographic variability, spontaneous speech, and recording conditions. Speech-based AD diagnosis is best positioned as a complementary screening tool rather than a replacement for clinical neuropsychological assessment.

PSYCHIATRIC DISORDERS

Psychiatric disorders have long been a major public health concern and a primary contributor to the global health-related burden. From 1990 to 2021, the global incidence of psychiatric disorders showed a significant upward trend, making timely diagnosis and treatment crucial for improving patients’ quality of life[122]. In this section, we focus on two major conditions, depression and SCZ, to examine the applications of speech analysis in mental health. The summary of research literature is shown in Table 5.

Summary of applications of speech analysis in psychiatric disorders

| Reference | Disease | Task | Method | Modality | Performance |

| Shin et al.[7] | Depression | Detection | MLP | Speech | F1: 58.9% AUC: 65.9% Specificity: 66.2% Sensitivity: 65.6% |

| Dumpala et al.[8] | Depression | Detection and Severity Estimation | CNN/LSTM | Speech | Accuracy: 66% (balanced accuracy) F1: 51% (DAIC-WOZ) |

| Berardi et al.[47] | SCZ and Depression | Classification | SVM | Speech | Accuracy: 94.7% (HC vs SSD) Accuracy: 92% (HC vs MDD) Accuracy: 93.2% (SSD vs MDD) |

| Sahu et al.[48] | Depression | Detection | SVM | Speech | Accuracy: 77.8% |

| Scibelli et al.[123] | Depression | Detection | SVM | Speech | Accuracy: over 75% |

| Jiang et al.[124] | Depression | Detection | LR | Speech | Accuracy: 75% (females) Accuracy: 81.82% (males) Specificity: 70.59% (females) Specificity: 85.29% (males) Sensitivity: 79.25% (females) Sensitivity: 78.13% (males) |

| Xu et al.[125] | SCZ and Depression | Classification and Severity Assessment | Ensemble | Audio and video | Accuracy: 82.3% (SCZ vs. HC) Accuracy: 82.3% (MDD vs. HC) Accuracy: 84.7% (MDD vs. SCZ) |

| Shankayi et al.[126] | Depression | Recognition | SVM | Speech | Accuracy: 92.85% |

| Zulfiker et al.[127] | Depression | Prediction | AdaBoost | Text | Accuracy: 92.56% Precision: 95.77% Recall: 91.89% F1: 93.79% AUC: 96% Specificity: 93.62% Sensitivity: 91.89% |

| König et al.[128] | Depression | Detection | SVM | Speech + Text | Accuracy: 93% |

| Kim et al.[129] | Depression | Prediction | RF | Text | Accuracy: 93.1% AUC: 82.3% Specificity: 99.3% |

| He et al.[130] | Depression | Severity Assessment | DCNN | Speech | - |

| Chlasta et al.[131] | Depression | detection | ResNet-34 | Speech | Accuracy: 77% Precision: 57.14% Recall: 66.67% F1: 61.54% |

| Muzammel et al.[132] | Depression | Diagnosis | CNN | Speech | Accuracy: 86.06% Precision: 81% Recall: 73% F1: 77% AUC: 83% |

| Srimadhur et al.[133] | Depression | Detection and Assessment | CNN | Speech | Accuracy: 74.64% Precision: 79% Recall: 74% F1: 77% |

| Kim et al.[134] | Depression | Classification | CNN | Speech | Accuracy: 78.14% Precision: 76.83% Recall: 77.9% F1: 77.27% AUC: 86% |

| Ishimaru et al.[135] | Depression | Classification and Severity Assessment | GCNN | Audio | Precision: 94.75% |

| Das et al.[136] | Depression | Detection | CNN | Audio | Accuracy: 90.26% (DAIC-WOZ) Accuracy: 90.47% (MODMA) |

| Zhang et al.[137] | Depression | Detection | wav2vec 2.0+1D- CNN + LSTM | Speech | Precision: 84.49% (DAIC-WOZ) Precision: 94.83% (MODMA) F1: 79% (DAIC-WOZ) F1: 90.53% (MODMA) |

| Gupta et al.[138] | Depression | Detection | DAttn Conv 2D LSTM | Speech | Accuracy: 97.82% (DAIC-WOZ) Accuracy: 98.91% (SH2-FS) |

| Lin et al.[139] | Depression | Detection | CNN + Transformer | Speech | Specificity: 80.35% Sensitivity: 82.14% |

| Pandey et al.[140] | Depression | Recognition | TFNN | Speech | - |

| Huang et al.[141] | Depression | Recognition | wav2vec 2.0 + Transformer | Speech | Accuracy: 94.81% |

| Wang et al.[142] | Depression | Detection | HuBERT | Speech | Precision: 70.59% Recall: 85.71% F1: 83.15% |

| Tian et al.[143] | Depression | Recognition | CNN | Speech | Accuracy: 87.5% (females) Accuracy: 87% (males) |

| Harati et al.[144] | Depression | Severity Classification | DNN | Audio | AUC: 80% |

| Liu et al.[146] | Depression | Detection | Resnet X-vectors | Speech | Accuracy: 74.72% F1: 76.9% |

| Ravi et al.[147] | Depression | Detection | CNN-LSTM | Speech | F1: 80% |

| Wang et al.[148] | Depression | Detection | Transformer | Speech | Accuracy:96.43% F1: 96.63% |

| Yang et al.[149] | Depression | Diagnosis | BERT + BiLSTM | Audio, text and image | Accuracy: 81.1% Precision: 80.2% Recall: 81% F1: 80.6% |

| Rejaibi et al.[150] | Depression | Recognition and Assessment | LSTM | Audio and video | Accuracy: 95.6% F1: 94% |

| Zhang et al.[151] | Depression | Detection | LLaMA 2 | Speech and text | F1: 84% |

| Tank et al.[152] | Depression | Detection | Whisper + BiLSTM | Audio, text and video | - |

| Patapati et al.[153] | Depression | Classification | GPT-4 + BiLSTM | Audio, text and video | Accuracy: 91.01% Precision: 80% Recall: 92.86% F1: 85.95% |

| He et al.[155] | SCZ | Detection | Attention-based CNN | Speech | Accuracy: 97.37% Precision: 94.99% Recall: 99.25% F1: 96.95% |

| He et al.[156] | SCZ | Detection | DT | Speech | Accuracy: 91.1% ~ 94.6% |

| Chakraborty et al.[157] | SCZ | Prediction | SVM | Speech | Accuracy: 86.36% |

| Premanamin et al.[158] | SCZ | Assessment | CNN | Audio and video | Accuracy: 75% F1: 76.41% AUC: 91.52% |

Depression

Depression is a prevalent psychiatric disorder characterized by persistent negative mood states, psychomotor retardation, and cognitive impairment. These alterations manifest not only in emotional expression but also in speech production, making speech a promising non-invasive biomarker for depression assessment and monitoring.

Traditional ML models with handcrafted features

Early studies predominantly relied on handcrafted acoustic and prosodic features - such as pitch variation, speaking rate, pause patterns, and spectral descriptors - combined with classical ML classifiers. These approaches demonstrated that depressive states are associated with reduced speech energy, slower articulation, and altered prosody.

Across multiple datasets, traditional ML models achieved moderate to strong performance in depression detection and severity estimation[47,123-129]. Ensemble learners and SVM-based classifiers frequently outperformed simpler linear models, indicating that non-linear interactions among speech features are informative for mood state discrimination. Several studies further extended this paradigm to non-clinical or at-risk populations, suggesting that subtle speech changes may precede clinical diagnosis[128].

Despite their interpretability and low computational cost, handcrafted-feature-based approaches are sensitive to feature design, recording conditions, and task variability. Their generalizability across spontaneous speech and diverse populations remains limited.

Overall, traditional ML models provide interpretable insights into depression-related speech alterations but struggle to scale across heterogeneous clinical settings.

DL models

To overcome the limitations of manual feature engineering, DL methods have been increasingly adopted for automatic representation learning. CNN, LSTM, and attention-based architectures enable end-to-end modeling of spectral-temporal patterns in speech.

A broad range of DL architectures demonstrated improved performance over traditional ML baselines, particularly on benchmark datasets such as DAIC-WOZ and MODMA[130-143]. Harati et al.[144] utilized the link between short-term emotions and long-term depressive mood states to construct a predictive model based on emotion-derived features. Experimental results demonstrate that their approach can effectively classify depressive and remission phases during DBS treatment, with an AUC of 0.80. Hybrid CNN-LSTM and attention-based models were especially effective in capturing both local acoustic cues and long-term temporal dependencies. Graph-based and tensor-based models further explored structured representations of speech dynamics, reflecting increasing methodological sophistication.

Speaker embedding techniques, including X-vectors and Residual Neural Network(ResNet)[145] based representations, emerged as a powerful tool for depression detection and severity estimation[146]. However, recent studies cautioned that speaker identity information may introduce bias and privacy risks, motivating efforts to disentangle depression-relevant features from speaker-specific traits[147,148].

Overall, DL-based approaches substantially improve representation learning capacity and robustness but remain vulnerable to dataset bias and limited external validation.

Multimodal fusion

Several studies incorporated multimodal information - such as visual cues, facial action units, and textual content - to enhance diagnostic robustness. Multimodal fusion consistently outperformed unimodal speech-based systems by jointly modeling emotional, behavioral, and linguistic signals[149,150].

Nevertheless, multimodal systems often rely on complex acquisition protocols and controlled experimental settings. Their scalability and feasibility for routine clinical screening require further validation.

Foundation and pre-trained models

Recent work explored foundation models and large language models to leverage large-scale pretraining for depression diagnosis. Pre-trained speech models such as wav2vec 2.0 and Whisper enabled more robust feature extraction from raw audio, while LLM-based frameworks incorporated acoustic cues into multimodal reasoning pipelines[151-153].

These approaches represent a shift toward generalized and adaptable diagnostic frameworks. However, most remain exploratory and retrospective, and their clinical readiness requires careful evaluation.

Methodological synthesis for depression

Speech alterations associated with depression, including reduced prosodic variability, slowed articulation, and diminished emotional expressiveness, are consistent with well-established neurophysiological and psychomotor changes observed in this disorder. Speech-based models should therefore be viewed as complementary tools for screening and monitoring rather than standalone diagnostic systems.

As with other psychiatric applications, many reported results are derived from small or benchmark datasets. Robust validation across diverse populations, recording conditions, and longitudinal settings remains essential before clinical deployment.

SCZ

SCZ is a severe psychiatric disorder with a lifetime prevalence of approximately 1%, characterized by disorganized speech, impaired semantic coherence, and diminished emotional expressivity. These speech abnormalities reflect underlying cognitive and motor dysfunctions, making speech a clinically meaningful behavioral marker for early detection and symptom assessment[154,155].

Existing speech-based studies on SCZ remain relatively limited in number and scope, with most work focusing on handcrafted acoustic features combined with ML models. He et al.[156] demonstrated that carefully designed acoustic markers are strongly associated with negative symptom severity, achieving substantially higher accuracy than traditional pitch- or energy-based features. Their results suggest that SCZ-related speech impairment is multifaceted and cannot be captured by single low-level descriptors alone. Similarly, Chakraborty et al.[157] applied multiple classical classifiers to Open-Source Speech & Music Interpretation by Large-space Extraction (openSMILE) acoustic features and achieved reasonable discrimination between patients and control participants, with further performance gains observed when behavioral signals beyond speech were incorporated.

More recent efforts have begun to move beyond binary classification toward representation learning and severity-oriented modeling. Premanamin et al.[158] proposed a multimodal representation learning framework to estimate SCZ severity scores, marking an important shift from disease detection to clinically relevant symptom quantification. The improved performance achieved through multimodal integration highlights the limitation of speech-only models in capturing the full complexity of SCZ, which manifests across cognitive, affective, and motor domains.

Overall, current speech-based SCZ research suggests that while handcrafted acoustic features remain informative and interpretable, their diagnostic scope is inherently limited. Emerging learning-based and multimodal approaches show promise for modeling symptom severity and heterogeneity, but their clinical applicability is constrained by small sample sizes, heterogeneous protocols, and the absence of large, standardized datasets. Compared with depression and neurological disorders, the adoption of large-scale pre-trained or foundation models in SCZ remains nascent, indicating a clear direction for future research.

RESPIRATORY DISORDERS

Respiratory diseases such as COVID-19, COPD, asthma, and tuberculosis (TB) are often accompanied by characteristic abnormalities in cough, speech, and breathing sounds. These acoustic changes reflect underlying airway obstruction, inflammation, and impaired respiratory control, making audio signals a valuable non-invasive source for screening and monitoring respiratory conditions. Existing studies increasingly explore speech, cough, and breathing sounds - often in a multimodal manner - to extract discriminative features for disease detection and severity assessment. The related literature is summarized in Table 6.

Summary of applications of speech analysis in respiratory disorders

| Reference | Disease | Task | Method | Modality | Performance |

| Xia et al.[159] | COVID-19 | Prediction | VGGish | Audio | AUC: 71% Specificity: 69% Sensitivity: 65% |

| Dash et al.[160] | COVID-19 | Detection | GBM | Speech | Accuracy: 97.8% AUC: 97.6% |

| Zhu et al.[161] | COVID-19 | Detection | SVM | Speech and patient metadata | UAR: 79.9% Specificity: 87.6% Sensitivity: 72.3% |

| Xia et al.[162] | COVID-19 | Detection | VGGish + CNN | Cough, breath, and speech | AUC: 74% Specificity: 69% Sensitivity: 68% |

| Zhang et al.[163] | COVID-19 | Cough detection | ResNet-18 | Cough | AUC: 85.91% Specificity: 91.16% Sensitivity: 59.89% |

| Cai et al.[164] | COVID-19 | Detection | Transformer | Cough | AUC: 83.2% Specificity: 87% Sensitivity: 63% |

| Liu et al.[165] | COVID-19 | Detection | ResNet-18 | Speech | AUC: 76.87% |

| Reiter et al.[166] | COVID-19 | Detection | ResNet-18 + MIL | Cough, speech, breath, vowel phonation, and patient metadata | Accuracy: 92.4% F1: 64.2% AUC: 92.2 ± 0.5% |

| Chen et al.[167] | COVID-19 | Detection | wav2vec 2.0 + BiLSTM | Breath, speech and cough | AUC: 88.44% |

| Dutta et al.[168] | COVID-19 | Detection | BiLSTM | Breath and speech | AUC: 80.5% (Breathing) AUC: 81.5% (Speech) |

| Nallanthighal et al.[169] | COPD | Detection | SVM | Speech | Accuracy: 75.12% Sensitivity: 85% |

| Claxton et al.[170] | COPD | Diagnosis | TDNN + LR | Text and Cough audio | AUC: 89% Specificity: 91% Sensitivity: 82.6% |

| Roy et al.[171] | Asthma | Classification | MLP | Wheezing of Lung Sounds | Accuracy: 98.54% F1: 98.27% Specificity: 98.73% Sensitivity: 98.27% |

| Frost et al.[172] | TB | Cough Classification | BiLSTM | Audio | Accuracy: 80% AUC: 85% Specificity: 81.3% Sensitivity: 77.8% |

COVID-19

Research on speech- and sound-based COVID-19 detection has progressed rapidly, largely driven by the availability of large-scale open datasets and the urgent need for scalable screening tools. The release of the COVID-19 Sounds dataset by Xia et al.[159] marked a key milestone, enabling systematic benchmarking across cough, breathing, and speech modalities and demonstrating that ML models can achieve meaningful discrimination performance even under heterogeneous data collection conditions.

Early studies primarily relied on handcrafted acoustic features combined with classical classifiers, reporting high accuracies under controlled settings[160,161]. However, subsequent work highlighted the limitations of such approaches when applied across datasets or in real-world environments. To address these challenges, learning-based methods increasingly adopted end-to-end and representation learning paradigms, including CNNs, Transformers, and attention-based architectures[162-168]. These models showed improved robustness to noise, data imbalance, and modality variation, particularly when integrating cough, breathing, and speech signals.

Notably, several studies emphasized cross-dataset generalization and noisy-environment robustness, revealing that models trained on curated data often degrade substantially under realistic conditions[162,165]. Multimodal fusion and self-supervised pre-training (e.g., wav2vec 2.0) have been shown to partially mitigate these issues[166-168], although reported performance remains highly sensitive to dataset composition and evaluation protocols. Overall, while audio-based COVID-19 detection demonstrates strong feasibility, its clinical deployment requires cautious interpretation due to dataset bias, variability in recording conditions, and limited prospective validation.

COPD

In COPD research, speech analysis has primarily been explored as a tool for detecting acute exacerbations rather than general diagnosis. Studies consistently report that acoustic features related to voice stability and respiratory control - such as shimmer and syllables per breath - are significantly altered during acute exacerbations compared to stable states[169]. Traditional ML models using handcrafted features achieve moderate accuracy, indicating that speech carries clinically relevant but incomplete information about disease status.

More recent work has incorporated learning-based breathing and speech models to estimate respiratory parameters directly from speech signals, providing a closer link to physiological dysfunction[169]. Importantly, Claxton et al.[170] demonstrated the practical feasibility of deploying speech-based COPD screening via smartphone platforms, combining audio signals with minimal patient-reported information. These findings suggest that, in COPD, speech-based systems may be most effective as decision-support or triage tools, rather than standalone diagnostic solutions.

Asthma

Compared with other respiratory conditions, speech and sound analysis for asthma has received relatively limited attention. Existing work focuses primarily on lung auscultation sounds, where persistent wheezing and abnormal airflow patterns provide strong acoustic cues. Roy et al.[171] showed that representation learning with supervised contrastive objectives can achieve high discrimination performance between asthmatic and healthy lung sounds. While these results are promising, they are derived from relatively constrained experimental settings, and their generalizability to broader clinical populations remains unclear.

Tuberculosis

Research on speech- or cough-based TB detection remains exploratory. Frost et al.[172] demonstrated that temporal modeling of cough signals using RNN-based architectures, particularly Bidirectional LSTM (BiLSTM) with attention mechanisms, can improve classification performance by focusing on diagnostically salient sound segments. These findings support the hypothesis that TB-related cough exhibits distinctive temporal patterns. However, the limited scale of available datasets and the variability of cough characteristics across disease stages constrain current conclusions.

Across respiratory disorders, speech, cough, and breathing sounds provide accessible and physiologically grounded signals for disease screening. While DL and representation learning methods have improved robustness and performance, most reported results are obtained under dataset-specific conditions. Future research should prioritize standardized data collection, cross-dataset evaluation, and clinically meaningful endpoints to ensure that audio-based respiratory assessment methods translate into real-world healthcare settings.

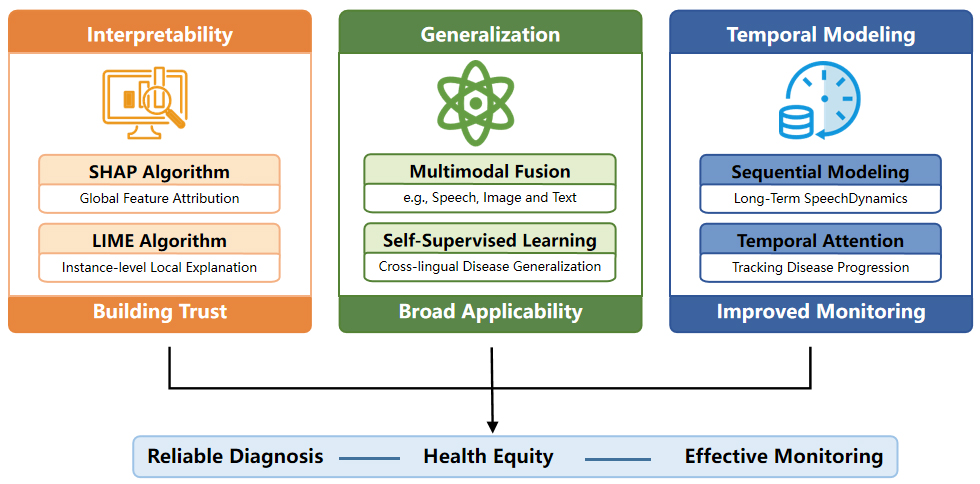

CHALLENGES AND FUTURE PROSPECTS

Despite significant achievements in disease diagnosis using voice analysis, substantial limitations persist across multiple dimensions. Challenges encountered in clinical translation constrain model performance enhancement, while inherent technological limitations remain unresolved. Investigating solutions to these constraints represents a critical future direction for this field. This section delineates the current challenges from both clinical and technical perspectives and proposes potential solutions.